Scott Olson/Getty Images

The deep end of disease

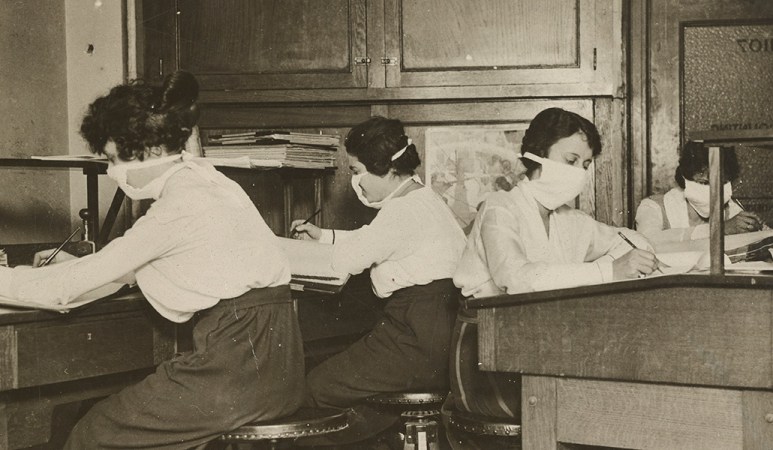

The emergency hospital, a partially demolished building hastily enclosed with wooden partitions, was about to open. It was the fall of 1918 in Philadelphia, and influenza was spreading fast. With many of the city’s doctors and nurses serving in World War I, 23-year-old Isaac Starr and his third-year classmates at the University of Pennsylvania School of Medicine were needed to help tend the sick. They’d had just one lecture on influenza. Their first job was to assemble the hospital beds, about 25 to a floor.

Starr was assigned a 4 p.m. to midnight shift. The beds soon filled with patients who had fevers, he recalled in a 1976 essay for Annals of Internal Medicine. Many who developed influenza recovered. But Starr watched as some patients became starved for air, their skin turning blue. Soon, they were “struggling to clear their airways of a blood-tinged froth that sometimes gushed from their nose and mouth,” Starr wrote. “It was a dreadful business.”

There were no effective treatments. Patients, desperate for breath, became delirious and incontinent and would die within days. “When I returned to duty at 4 p.m., I found few whom I had seen before,” Starr wrote. “This happened night after night.” In October, around the pinnacle of the pandemic, roughly 11,000 Philadelphians perished.

Some who died in the makeshift hospital stuck with Starr. There was Mike the piano mover, who in a frenzy left his bed and was about to leap from a window before medical staff grabbed him. Mike died shortly after. There was the young woman, “flushed with fever,” whose large family kept vigil at her bedside, hoping for a recovery that never came.

Recovery never came for the estimated 50 million people worldwide who died during the 1918 influenza pandemic. The century since has seen many vaccines and treatments become available to combat infectious diseases. But beyond those medical feats, the story of epidemics remains a story about people: people who become sick, people who die, people whose lives are upended, people who care for others. And ultimately, people who remember what happened and people who forget.

The public memory of that 1918 pandemic, which lasted into 1919, faded quickly in the United States, with few historical accounts or memorials to victims in the aftermath. One of the first major histories of the pandemic, by Alfred Crosby, didn’t arrive until 1976, the same year Starr published his reflections on serving in an influenza ward. (Crosby’s book, first called Epidemic and Peace, 1918, was later reissued as America’s Forgotten Pandemic: The Influenza of 1918.)

Since 1918, we have faced many epidemics, but COVID-19 has been the first to rival the great flu in how it has changed people’s daily lives. “We are living through a historic pandemic,” says Anthony Fauci, director of the National Institute of Allergy and Infectious Diseases in Bethesda, Md. But a hundred years’ worth of advances in virology, medical understanding and vaccine development has made a difference. It was 11 months from the discovery of the SARS-CoV-2 virus to “having a vaccine that you could put in people’s arms,” Fauci says, “a beautiful testimony to the importance of investing in biomedical research.”

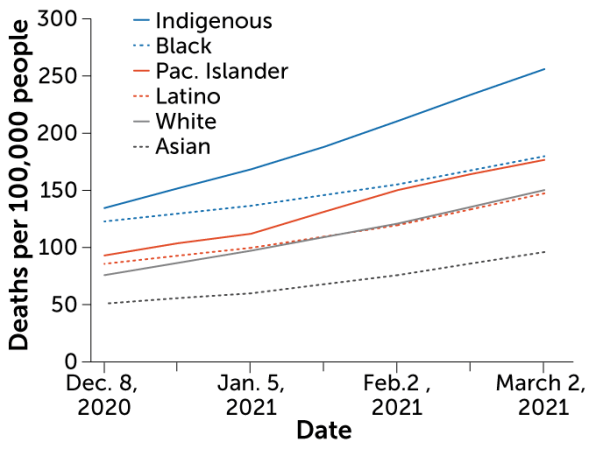

The COVID-19 pandemic is also a stark reminder of what hasn’t changed. “Pandemics just surface all the muck,” says internist, medical humanities scholar and historian of medicine Lakshmi Krishnan of Georgetown University in Washington, D.C. Ever present but often ignored societal inequities become unavoidable. The disproportionate weight of COVID-19 disease and death on Black, Latino and Native American communities in the United States — “we better not forget,” says Fauci. “We really need to address the social determinants of health that lead to these very, very obvious disparities.”

Yet outbreak after outbreak, our collective memory falters. The urgency of the predicament eventually fades. The communities left behind, families mourning the loss of loved ones, people struggling with unmet medical needs, with stigma, become small islands of remembrance ever threatened by vast seas of forgetfulness and indifference.

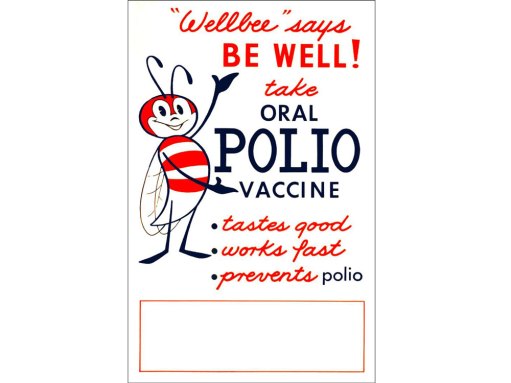

Sometimes forgetfulness comes from a lack of reckoning. The United States moved on from the 1918 pandemic without addressing what was lost. Sometimes the success of vaccines obscures their power. The fear that surrounded polio subsided in the United States after the country rolled up its sleeves for polio vaccines. The dread of many other childhood illnesses has also diminished, leaving some to take prevention measures for granted.

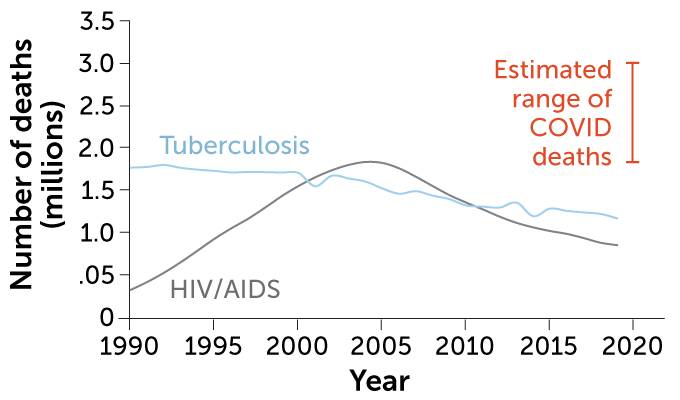

There are infectious diseases that are global terrors but don’t command much attention in the United States. Tuberculosis, an ancient disease found in mummy remains, killed an estimated 1.5 million people worldwide in 2020. Fungal diseases, long neglected and a growing threat, cause an estimated 2 million deaths globally each year. These illnesses often strike the most vulnerable in society, those already struggling to be seen.

To look back at infectious diseases since 1918 is not only to observe what we’ve learned about the viruses, bacteria and fungi with which we share our world, and observe the strides made in lessening their harms. It’s a call to listen to the stories of how infectious diseases have shaped people’s lives.

Outbreaks “have occurred throughout history and they occur now,” says Fauci. “And they will continue to occur.”

— Aimee Cunningham

Virus hunters

The two young men were among the millions serving in the U.S. Army in 1918. The 21-year-old had trained at Camp Jackson in South Carolina and the 30-year-old at Camp Upton in New York. Both were admitted to their camp hospitals with influenza. Both died of the disease on the same September day, the 26th, never reaching the battlefields of the Great War. Lung tissue from each young man was preserved in a formaldehyde solution and embedded in wax for an Army collection of autopsy specimens.

About two months after the soldiers’ deaths, influenza struck a village on the Seward Peninsula in Alaska. In five days that November, 72 of the 80 adult residents died. Their final resting place was a mass grave in the frozen ground. But decades later, lung tissue from one of the villagers, a woman whose name and age at death are unknown, together with the two soldiers’ samples led to the discovery of the virus behind the 1918 influenza pandemic.

Today, we can rapidly identify the culprit of an outbreak. After a cluster of mysterious pneumonia cases arose in December of 2019, it took just weeks for scientists to decipher the pathogen’s genetic letters and identify it as a coronavirus. But decades of earlier research and discovery made such speed possible. The task of uncovering the 1918 virus’s genetic blueprint had to wait for such advances and benefited from an amazing amount of luck.

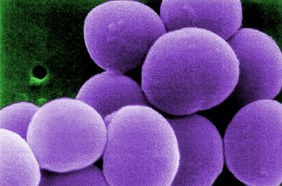

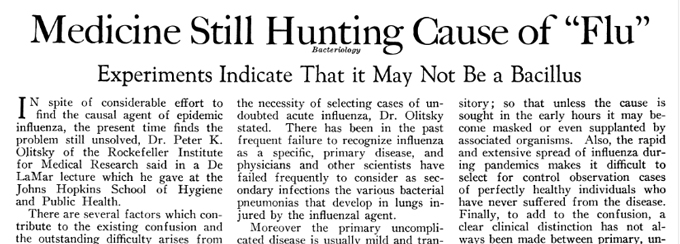

At the start of the 20th century, the idea of viruses was just beginning to form in scientists’ minds. It wasn’t yet known what pathogen caused influenza. Some believed that a bacterium discovered in 1892 was guilty, even giving it the name Bacillus influenzae. That same year, a botanist reported that something smaller than bacteria — because the infectious agent could pass through a filter that bacteria could not — appeared to cause a troublesome infectious disease of tobacco plants.

Scientists couldn’t see viruses, only their effects on living tissue, says A. Oveta Fuller, a virologist at the University of Michigan in Ann Arbor. Light microscopes, long available at the time, could spot bacteria but couldn’t bring viruses into focus. Scientists in London were able to “discover” human influenza virus in 1933 during a flu epidemic by filtering throat washings from symptomatic people and showing that the filtrate infected ferrets.

It took the 1931 invention of the electron microscope, which relied on a beam of electrons to probe the submicroscopic world, to make viruses visible. By the end of the 1930s, the first electron micrographs of viruses were published, including the rod-shaped particles of the tobacco mosaic virus.

As the 20th century moved on, the study of viruses took off. Scientists learned about the inner workings of these parasitic pathogens, made of genetic material and proteins, that commandeer a cell’s machinery to make more virus. Along the way, laboratory techniques to copy and read genetic material became available. By the ’90s, the know-how was there to try to decipher the 1918 influenza virus. The next step was to reach back in time.

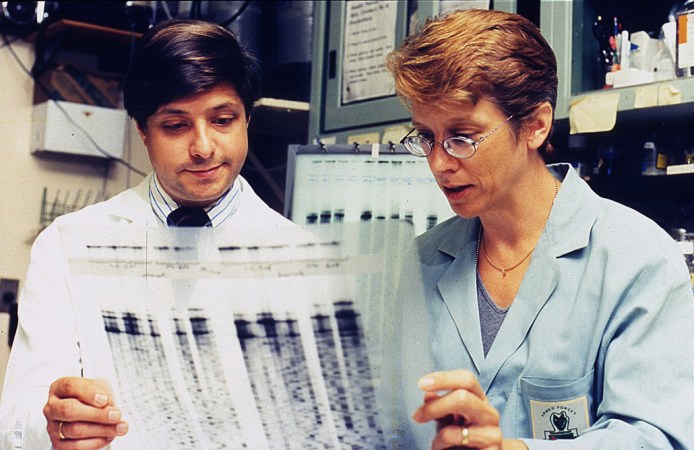

In 1995, a team of scientists at the Armed Forces Institute of Pathology in Washington, D.C., amid talk of a potential closure, set out to showcase the value of the institute’s extensive collection of autopsy specimens, dating to the Civil War. In describing the collection, Ann Reid, a molecular biologist who had been on that team, evokes another treasure trove: “The last scene in Raiders of the Lost Ark, where they’re in this huge warehouse — it’s like that, except the boxes are teeny.”

The scientists decided to look for genetic fragments of the 1918 influenza virus in the specimens, says Jeffery Taubenberger, a pathologist and virologist who was also on the team. “Here was a pandemic that killed tens of millions of people … just an unbelievably severe outbreak,” he says. The team hoped that the 1918 virus’s genetic material would reveal some signpost of its lethality.

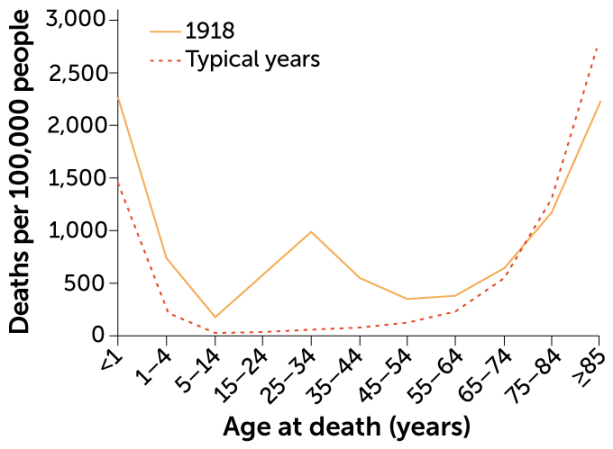

The high mortality and the age distribution of the victims set the 1918 pandemic apart in influenza history. An estimated 50 million people died worldwide, compared with estimates of up to several million for each of the pandemics in 1957 and 1968. And whereas influenza characteristically strikes the very young and very old, nearly half of the influenza-related deaths in the United States during the pandemic were among 20- to 40-year-olds. Young men in the military, mobilizing for World War I, were at high risk, making the Armed Forces pathology collection a good place to look.

But a 1918 influenza death from the collection wasn’t enough. “Most people who died of the 1918 flu didn’t die directly from the virus,” Reid says. “They died from bacterial pneumonia that set in after the influenza virus injured their lungs.” The patients who succumbed to bacterial pneumonia tended to die a week to 10 days after they first had flu symptoms. By then, the virus would be gone. So the team needed tissue that displayed the pathological signs of a viral pneumonia, which killed its victim within days.

After finding a few samples to work with, it was on to recovering the virus’s genetic material, with fingers crossed it wasn’t hopelessly degraded. The researchers used a technique developed in the 1980s called polymerase chain reaction. With knowledge of more recent influenza viruses, the team designed short strings of genetic letters, called primers, to latch onto the 1918 virus’s genetic material. The technique makes millions of copies of the stretches of letters targeted by the primers. The researchers incorporated a radioactive form of an element into the copies of viral genetic material so that the material would show up on film. It finally did, after a year and a half. “I really developed a lot of blank film,” Reid says.

Once the team had some genetic material, they could begin to determine the blueprint of the virus with a technology called sequencing. First, the researchers determined the sequence of letters for nine fragments of the virus’s genetic material from the Camp Jackson soldier and published that work in Science in 1997. But the tissue sample (a pinkie fingernail–sized piece of lung, maybe an eighth of an inch thick, says Taubenberger) had very little viral genetic material. So the team screened the collection again and, luckily, found the Camp Upton soldier’s sample. Then luck struck again, in the form of a fax from a man named Johan Hultin. “It was this really long handwritten fax in this very old-fashioned script,” recalls Reid.

Hultin, a pathologist, had once gone in search of the 1918 virus. In 1951, he received the blessing of the Alaskan village devastated in the pandemic — today called Brevig Mission — to exhume bodies from the mass grave. Hultin took samples of lung tissue but wasn’t able to retrieve the virus. After reading of the new effort in Science, Hultin contacted Taubenberger to volunteer to return to Brevig Mission. The village council permitted him to reopen the grave. In 1997, Hultin, at 72, excavated a well-preserved woman, buried among other victims whose remains had decomposed during periods of thawing. He removed her lungs and shipped them to the team. The tissue tested positive for influenza.

Taubenberger, Reid and their colleagues now had enough material to continue. In 1999 and 2000, the team published the genetic instructions for the proteins the virus uses to get into and out of cells. In 2005 came the last of a series of papers that together provided the complete sequence of the 1918 influenza virus.

It took almost 10 years to complete the project, says Taubenberger, using techniques that at the time “were as cutting-edge as possible, kind of Star Trek–y.” Taubenberger, now at the National Institute of Allergy and Infectious Diseases in Bethesda, Md., still studies influenza. In his laboratory today, he could sequence the 1918 virus from an autopsy case in about a week, he says.

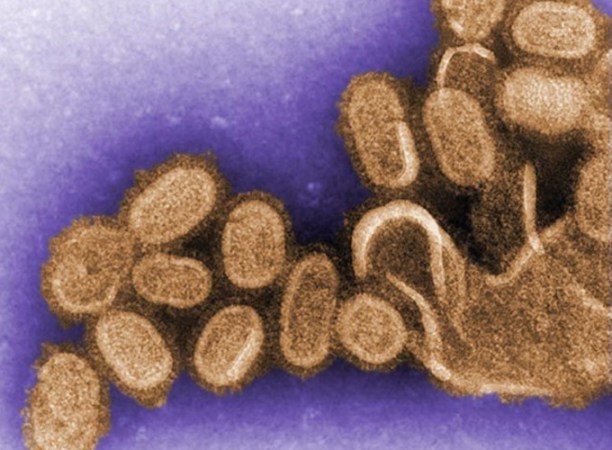

With the genetic blueprint in hand, the researchers were able to reveal some of the virus’s backstory. They compared the 1918 sequence to that of influenza viruses that sicken birds, pigs and people. That way, the team could put together a kind of influenza family tree. It turned out the 1918 virus was the great ancestor. “In some real ways, the 1918 flu never went away,” says Taubenberger.

The 1918 virus was an influenza A virus, the type that causes pandemics. Influenza A viruses’ primary homes are the guts of aquatic birds, but the viruses are also found in pigs, horses, people and other animals. When influenza A viruses that have been circulating in different species infect a single animal, say a duck or a pig, the viruses can swap genes for proteins that are important for getting into and out of cells.

Sometimes bird-specific versions of these genes can end up in influenza viruses that infect people. The proteins from these genes take the human immune system by surprise. If the virus can spread easily, a pandemic can happen. The family tree suggested that the 1918 virus wasn’t just a few different versions of genes in a more familiar package. The whole virus looked birdlike and appeared to be completely unknown to people.

“The 1918 virus was a fundamentally new and different virus that has been able to survive in one form or another for over 100 years,” says David Morens, senior scientific adviser to director Anthony Fauci at the National Institute of Allergy and Infectious Diseases in Bethesda, Md.

But there wasn’t a signpost in the sequence that could explain the 1918 virus’s killer nature. Taubenberger and others turned to research in animals to look for answers. That work has found that the virus induces a strong inflammatory response that harms the lungs. White blood cells called neutrophils swarm into the lungs and cause a lot of bystander damage, says Taubenberger. “It’s not just the virus, it’s the host response to the virus,” he says. “It’s so important to understand the role of the host in this.”

How ill someone becomes after a viral infection is influenced by the circumstances of the infection, a person’s age and genetic instruction book, the intricacies of a person’s immune response and the presence of other pathogens. But as we’ve seen with COVID-19, we still don’t know enough to be able to predict who will do well and who will do poorly when viruses invade or “to figure what’s going on inside their body that’s going to turn the tide to end up killing them,” Morens says.

“It’s eerie going through another pandemic now, having spent so many years of my life really digging through the accounts of the 1918 flu,” says Reid, executive director of the National Center for Science Education in Oakland, Calif. Many of the mysteries about who became severely ill in that long-ago pandemic have cropped up again a century later with COVID-19. “Why do some people get so sick from the coronavirus and other people don’t … [people] who look on paper like they have a similar risk?”

— Aimee Cunningham

Vaccine victories

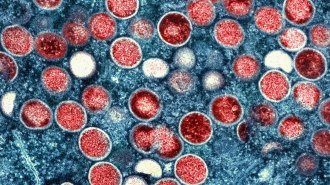

On February 24, 1947, Eugene Le Bar, an American merchant, boarded a bus in Mexico City. During the trip, his head began to ache and he broke out with a rash. He arrived in New York City on March 1 still not feeling himself. Four days later, he was admitted to the hospital. His stay overlapped with a mumps patient named Ismael Acosta and a little girl, almost 2, who had croup.

While doctors searched for a diagnosis, Le Bar deteriorated. He died March 10. Acosta and the little girl had been discharged, but returned later in March with rashes. The results of tests for those two led to a review of Le Bar’s autopsy. All together, the information led to a public health emergency. The diagnosis was smallpox, a disease that kills about 30 percent of those sickened and leaves survivors disfigured by prominent scars.

More victims turned up. A little boy with whooping cough who had been at the hospital developed a rash. So did Acosta’s 26-year-old wife, Carmen; she died days later. Soon the number of people with smallpox reached 12. New York City embarked on a massive vaccination campaign.

At the time, Anthony Fauci was 6 years old, growing up in Brooklyn. Fauci, today the director of the National Institute of Allergy and Infectious Diseases, or NIAID, in Bethesda, Md., remembers his parents talking about a huge event that would be happening in the city. “We all had to get vaccinated, and vaccination means somebody would get a little needle and prick it multiple times in your arm.” (The smallpox vaccine wasn’t given as a shot; instead, a needle poked the skin repeatedly, ushering a drop of vaccine into the skin.) Fauci and his family were among the millions of New Yorkers immunized that spring, bringing the smallpox outbreak to a close without another person added to the count.

Throughout history, smallpox was one of the most feared infectious diseases. A British historian penned this description in writing about the 1694 death of Queen Mary II from smallpox at age 32: “The smallpox was always present, filling the church-yards with corpses, tormenting with constant fears all whom it had not yet stricken, leaving on those whose lives it spared the hideous traces of its power.”

Smallpox is also a starring character in the story of vaccines. At the end of the 18th century, the English physician Edward Jenner extracted fluid from a sore caused by cowpox on a dairymaid’s hand and inoculated a young boy with it, a test of the belief among some farmers that a case of cowpox protected against smallpox. The experiment propelled the concept of vaccination forward. By giving the immune system a preview of the pathogen, the body’s defenses were prepared for the main event.

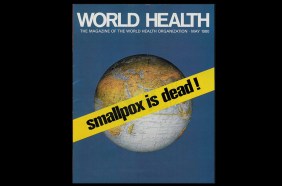

What followed exemplifies the fullest power of vaccination. After a worldwide campaign, smallpox was the first infectious disease to be declared eradicated from the globe, in 1980. A scourge that had plagued humankind for at least 3,000 years was consigned to the history books.

Other vaccine-preventable diseases — especially those that afflict children — harm many fewer around the world today than in the near past. During the 20th century in the United States, cases of nine diseases, including polio, measles and Haemophilus influenzae type b, declined by 95 to 100 percent after vaccines for those diseases became widely used. Yet there can be “a lack of appreciation for what vaccines have done in terms of getting rid of or managing many infectious diseases,” says virologist A. Oveta Fuller of the University of Michigan in Ann Arbor.

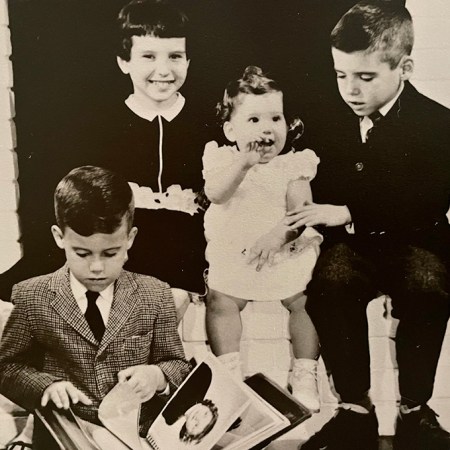

For many today, an understanding of polio also comes from history books. But others who grew up when summers meant polio outbreaks have sharp recollections.

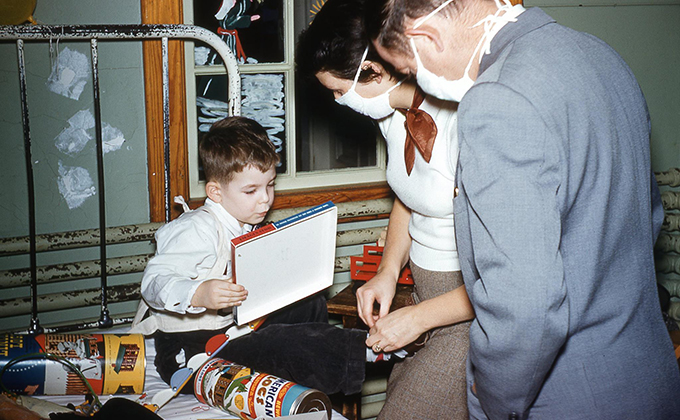

Paul Offit, a pediatric infectious disease specialist at Children’s Hospital of Philadelphia, was 5 years old in 1956. After surgery for a problem with his foot, he stayed for about six weeks in a hospital’s chronic care facility. It was primarily a polio ward. He was surrounded by children whose limbs were suspended in traction or whose bodies were swallowed in iron lungs. The first polio vaccine became available in 1955, but Offit hadn’t been immunized for it yet.

“It was a lonely, frightening experience,” Offit says. Parents were allowed to visit only one hour a week. He could see “how vulnerable and helpless and alone all of those children were.” Offit’s bed was next to a window, which gave him a view of the building’s front door. He’d stare at the entrance, “waiting for someone to come save me.”

As fate would have it, Offit later trained at that same hospital in Baltimore as a medical student. The ward he had languished in as a child was now a suite of offices. The room looked the same, even the molding, “and that window was still there,” Offit says. “I remember walking up and looking out that window and seeing the same thing I saw 20 years earlier and just fighting back tears. I remember it well.”

Memorial Day weekend in the United States used to herald a season of polio fear, as cases rose in summertime. Children were barred from swimming pools and crowds. Anthony Fauci’s parents wouldn’t allow him and his sister to go to the beach at Coney Island. “All of us as kids knew somebody who’d been paralyzed,” says David Morens, who grew up in the 1950s and is senior scientific adviser to Fauci.

Vaccines provided an exit from this recurring nightmare, and the two developed to thwart polio each drew on different advances. First came Jonas Salk’s “killed” polio vaccine, approved in 1955. It was made with poliovirus that had been treated with formaldehyde, so the virus could no longer cause harm, but the body could still mount an immune response against it. About seven decades earlier, Louis Pasteur had demonstrated that rabies virus could be inactivated to develop a rabies vaccine.

In 1961, Albert Sabin’s oral polio vaccine became available. Often delivered on a sugar cube, Sabin’s easily digested vaccine was the inspiration for the song “A Spoonful of Sugar” in Mary Poppins. Sabin’s approach was to weaken the poliovirus by making it replicate in nonhuman cells. Forced into an unfamiliar environment, the virus made genetic changes that diminished its ability to cause disease. This method, called attenuation, had first been used about 25 years earlier to create a yellow fever vaccine.

Life before and after polio vaccines was like night and day. In 1952, polio paralyzed more than 21,000 people in the United States. Thirteen years later, that number had plummeted to 61. By 1979, polio was eliminated in the country. With new immunizations, one by one, common childhood ailments all but vanished in the United States: measles, rubella, chicken pox, and meningitis caused by bacteria.

When Kathryn Edwards trained in pediatrics in Chicago in the mid- to late 1970s, “we were really in the grips of Haemophilus influenzae meningitis.” (H. influenzae, formerly Bacillus influenzae, is the misnamed bacterium that had once been suspected of causing influenza.) There would often be four or five children at a time hospitalized with this dangerous swelling of the membranes covering the brain and spinal cord, says Edwards, an infectious disease pediatrician and vaccine researcher at Vanderbilt University School of Medicine in Nashville. Some children with H. influenzae meningitis were left with brain damage, while about 5 percent died. Edwards still remembers a young patient lost to the disease the last night of her training.

The first vaccine against H. influenzae type b, the type that most commonly caused meningitis and other severe infections, became available in the United States in 1985. More effective vaccines came a few years later, evaluated by Edwards and colleagues. Again the impact was unmistakable. Before 1985, close to 20,000 children, most of them under age 5, developed severe infections (those that invade usually germfree areas of the body like the blood) from H. influenzae type b each year, including 12,000 with bacterial meningitis. By 1994 and 1995, the incidence of severe disease had fallen 98 percent in children age 4 or younger. With the availability of vaccines against H. influenzae and other pathogens, says Edwards, “the practice of pediatrics is much different now than when I began 40 years ago.”

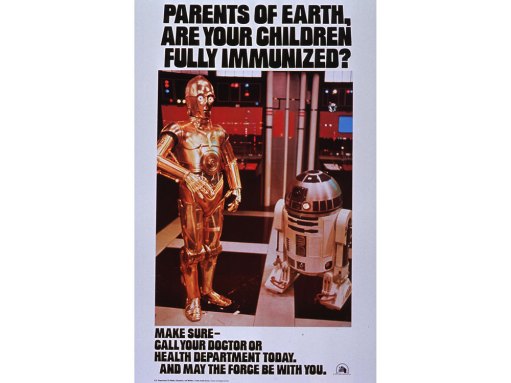

The scope of infectious diseases children face worldwide is slowly changing too. From 2000 to 2018, 23 million deaths globally were prevented by measles vaccination. But there are still millions of children around the world missing out on basic immunizations that are routine in the United States. The COVID-19 pandemic has exacerbated the problem: An estimated 23 million children did not receive childhood vaccines in 2020, about 3.7 million more than in 2019.

The work of developing vaccines against COVID-19 began shortly after researchers worked out the genetic sequence of the new coronavirus, SARS-CoV-2, in January 2020. Previous studies of the coronaviruses behind Severe Acute Respiratory Syndrome, or SARS, and Middle East Respiratory Syndrome, or MERS, had identified a viral protein that would effectively ramp up an immune response.

And the basic research that would underpin a new vaccine technology, which would be used for two of the first COVID-19 vaccines, had been going on for decades. The approach is based on messenger RNA, which carries out of the cell nucleus the instructions for making a protein. The vaccines have the guide for the viral protein; the body makes that protein and produces antibodies against it. Some of the crucial work, modifying the instructions for the viral protein so the body wouldn’t see the guide as an invader, came from RNA biologist Katalin Karikó, immunologist Drew Weissman and colleagues in the mid- to late 2000s.

COVID-19 vaccines were created and tested in the shortest timeline for any vaccines yet. But that efficiency wasn’t matched in the distribution. While there haven’t been enough shots available globally, the United States, a country awash in supply, has struggled to immunize everyone eligible. Some people haven’t been vaccinated because they can’t take time off work to recover from side effects or are worried they’ll have to pay for the shots. Others don’t see COVID-19 as a threat and don’t see the need for the vaccine.

COVID-19 has killed millions of people worldwide. Yet perhaps it would be more terrifying if it primarily threatened children. The horror of polio was that it could leave children paralyzed for the rest of their lives, Offit says; it was as though children had been injured in war.

Fuller thinks that seeing the harm that polio could do to children helped make Americans eager for polio vaccines. During the COVID-19 pandemic, “because we were all isolated, we didn’t see each other really suffering or dealing with the effects of this virus,” she says.

When virologist Katherine Spindler of the University of Michigan was growing up, she had measles, mumps and rubella. The vaccines for those afflictions “came along too late for me,” Spindler says. She still remembers the name of her older brother’s classmate who died in eighth grade of an infectious disease. The routine immunizations that are now regular parts of pediatrician appointments mean that most of us “don’t know what it’s like to have polio or to die from measles.”

Spindler found getting the COVID-19 vaccine “so meaningful,” thinking about all of the science that came together to develop it. People have e-mailed her with questions about the vaccine. One woman who had an appointment but wasn’t sure she wanted to keep it wrote several times. Spindler spent a few hours responding. Finally, she got an e-mail back with a picture of the woman in her car getting the shot. “Tears came to my eyes,” Spindler says. “One person vaccinated feels like such a victory.”

— Aimee Cunningham

Sanatoriums and stigma

When Cynthia Dockrell’s brother was just shy of 4 years old, he had to leave the family. It was December 1955, and he had been diagnosed with tuberculosis. He spent the next two years of his life at a children’s sanatorium, much of it strapped to a bed, the tactic employed to keep young patients at rest.

Patti Barnaby Koltes was in fifth grade in 1964 when her mother left for a sanatorium. Barnaby Koltes remembers being tucked in her bed with the lights out, her mother kneeling next to her, telling Barnaby Koltes she was going away. Barnaby Koltes asked if her mother was going to die.

Dockrell’s brother and Barnaby Koltes’ mother had tuberculosis at a time when old and new treatment approaches overlapped. A diagnosis could still mean a long stay in a sanatorium. But now, finally, there was a cure: antibacterials.

In 1882 the German physician Robert Koch announced his discovery of the germ that causes tuberculosis, Mycobacterium tuberculosis. But it was decades before researchers could target the pathogen.

A chance finding in 1928 by the Scottish bacteriologist Alexander Fleming, that a moldy spot on a petri dish of bacteria had stopped the bacteria’s growth, helped usher in the antibacterial era. By 1940, scientists at Oxford University had demonstrated that penicillin protected animals from several different bacterial infections. By 1945, pharmacies in the United States carried the drug.

Antibiotics (derived from molds and bacteria) like penicillin and other antibacterials such as the chemical sulfanilamide changed medicine and extended lives. The drugs meant that people no longer died regularly from infections that set in after injuries or surgeries. Antibacterials also made chemotherapy and organ transplants, during which the immune system is suppressed, possible.

Scientists discovered antibacterials that worked against tuberculosis by the mid-1940s, with more and better options arriving in the next two decades. The death rate from tuberculosis had been declining in some parts of the world since roughly the mid-1800s, likely due in part to improved living conditions and nascent public health measures. People expected that antibacterials would hasten the end of tuberculosis. But the drugs were not a death knell, nor could antibacterials fully do away with the stigma that shadowed the disease. Treatments for tuberculosis are lifesaving, yet this ancient disease and its trauma are decidedly still here.

M. tuberculosis has been infecting and killing people for thousands of years. The ancient Greeks called it phthisis, which translates to consumption. Hippocrates gave the disease this name, and wrote of its deadly nature: “For consumption was the worst of the diseases that occurred, and alone was responsible for the great mortality.” The body’s deterioration tracked the disease’s progression — the pallid sufferer coughed up blood and lost weight, seeming to waste away.

People with tuberculosis have faced stigma throughout history, from when it was thought to be hereditary through the discovery of M. tuberculosis, which focused attention on germs that people carried or those in their homes. Today, there is still the assumption that “you caught it because you were dirty or unhygienic,” says social scientist Amrita Daftary of York University in Toronto.

But a person can be exposed to tuberculosis just by taking a breath. The airborne disease spreads by talking, singing, sneezing or coughing. Five to 15 percent of those infected become sick with tuberculosis in the following months to a few years, developing symptoms such as fever, cough, night sweats, weakness and fatigue. People with the disease are contagious.

The majority of those who become infected don’t develop the disease in this time frame. Instead, they control the infection, don’t have symptoms and aren’t contagious — though the infection can eventually progress to tuberculosis, especially if the immune system stops working well.

Why “some people progress to active tuberculosis and then others can control” the infection and not get sick is still a big research question, says Jyothi Rengarajan, an immunologist and microbiologist at Emory University School of Medicine in Atlanta. As M. tuberculosis and the immune system duel it out in the lungs, a structure called a granuloma forms. It’s a mass of immune cells that walls off the bacteria but can also allow the pathogen to persist inside. In some people, the granuloma contains the infection. In others, it breaks down, destroying lung cells and allowing the bacteria to spread. Without treatment, lung damage continues and can be fatal.

Dockrell’s brother was exposed to tuberculosis by their great-uncle, who lived with their grandparents. Barnaby Koltes’ mother was likely exposed while working as a nurse before her four children were born, becoming ill years later.

Dockrell, a writer and former editor who lives in Newton, Mass., doesn’t recall the day her brother left for the sanatorium. But she has a clear memory of visiting him once. She wasn’t allowed inside, so her brother came to the window. “I can still see his face looking down at me,” she says.

The first sanatoriums opened in the mid-1800s, embracing a treatment of fresh air, nutritious meals and, ultimately, bed rest for tuberculosis. The facilities also separated the contagious sick from everyone else. Regimens were strict. A 32-year-old woman chronicled her time at a tuberculosis sanatorium in England in the 1940s, away from her husband and 15-month-old son. She described the sanatorium’s rules in her diary: “Absolute and utter rest of mind and body — no bath, no movement except to toilet once a day, no sitting up except propped by pillows and semi-reclining, no deep breath. Lead the life of a log, in fact.”

At the Trudeau Sanitarium in Saranac Lake, N.Y., “the initial prescription for most patients was bed rest 24 hours a day,” wrote Gordon Snider, who arrived there in 1949 to train in pulmonary disease. Founded in 1884 by the American physician and tuberculosis sufferer Edward Livingston Trudeau, the facility had two hospital units for new and very sick patients and, for recovering patients, cottages with screened porches to provide fresh air.

The first antibacterial available to treat tuberculosis, streptomycin, was used at Trudeau soon after the drug’s discovery in the early 1940s. But scientists quickly realized that M. tuberculosis could become resistant to the effects of one drug. In 1950, a British clinical trial established that the disease was best treated with a combination of drugs, at that time streptomycin and para-aminosalicylic acid, or PAS.

However beneficial, PAS tasted horrible and left people nauseous and bloated. At Trudeau, “patients hated it,” Snider wrote in Annals of Internal Medicine in 1997. “The window screens of patients’ rooms and the walls below them became white with discarded para-aminosalicylic acid.”

Better antibacterials were on the horizon. In 1952 came news of a “wonder drug” called isoniazid that was safe and well-tolerated. Over the next two decades, three additional, effective drugs for the disease — ethambutol, rifampin and pyrazinamide — arrived. These four drugs are still used today, combined in a six-month regimen.

As studies showed that sanatoriums didn’t add to what antibacterials offered, the facilities began closing, with most shuttered or shifted to other uses by the late 1970s. But the United States was not finished with tuberculosis. The 1980s and ’90s saw a resurgence of the disease, as strains developed resistance to several drugs and as the epidemic of HIV, which weakens the immune system, took off. Multidrug-resistant tuberculosis, caused by bacteria that can fend off isoniazid and rifampin, is still curable but needs a longer treatment with different, more toxic drugs.

When Dockrell’s brother and Koltes’ mother had tuberculosis, they didn’t have to worry about multidrug-resistant tuberculosis. They were cured with the drugs available at the time. But there was no treatment offered for the trauma that remained.

For Dockrell’s parents, the pain of separation and the worry over their son’s health was compounded by stigma. Her parents’ friends stayed away. Dockrell was kept from other kids. Even Dockrell’s maternal grandparents vanished from the family’s lives. Six of her grandmother’s siblings had had the disease, and her grandmother, Dockrell says, “just could not deal with it.”

The day her brother finally came home was joyous. “My parents were very happy in a way that they hadn’t been in a long time,” Dockrell says. Her parents were advised to put the illness and absence behind them and move on. “There was so much trauma surrounding this event that was never processed,” Dockrell says. The grief and fear that remained “had lasting psychological effects for all of us.”

Barnaby Koltes sensed that she couldn’t rely on her mother upon her return from the sanatorium. The experience left Barnaby Koltes’ mother bitter and suspicious. She felt abandoned by friends. She thought she had lost her status as a doctor’s wife, Barnaby Koltes says. Her mother had kept the house clean as an operating room, Barnaby Koltes remembers, yet she had had this “dirty disease.”

Barnaby Koltes’ mother developed dementia later in life. At one point, Barnaby Koltes, a newspaper columnist who lives in Naperville, Ill., was helping her parents choose a Florida retirement community that offered assisted living. Barnaby Koltes suggested a place called The Glenridge. Her mother did not want to see it. “She didn’t want to go because of the name,” Barnaby Koltes says. It was the same name as the sanatorium she had stayed at decades earlier.

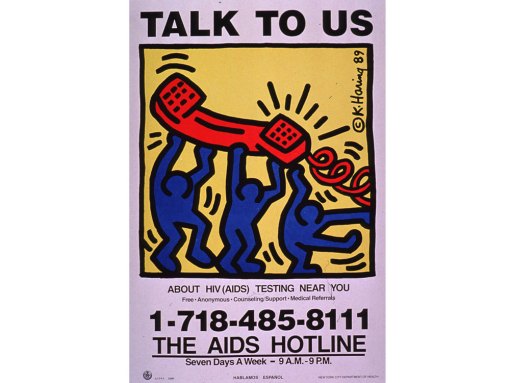

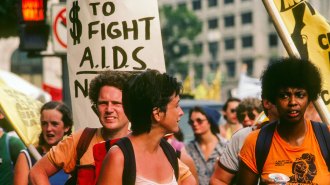

Stigma can be painful, isolating and discriminatory. “Persons who had been treated for tuberculosis were seriously stigmatized,” Gordon Snider wrote. “The fears and myths that grew up around the acquired immunodeficiency syndrome (AIDS) soon after its appearance in the early 1980s reminded me of tuberculosis as I had known it at mid-century.”

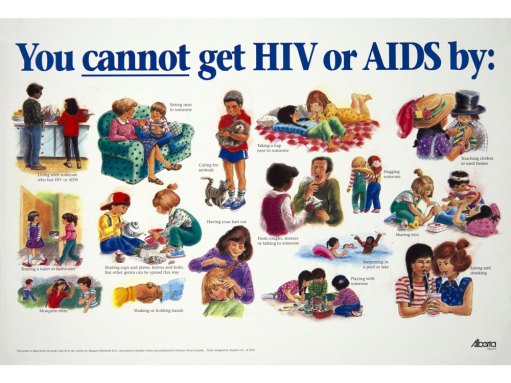

The HIV epidemic is ongoing. People who become HIV positive can still be reluctant to tell their families and friends because of stigma. But decades of research and advocacy have led to powerful antiretroviral drugs that can make HIV a chronic, manageable illness. The effectiveness of the drugs — a person taking antiretroviral therapy who has an undetectable amount of virus in the blood cannot spread the virus through sex — inspired the prominent undetectable = untransmittable campaign, which seeks to reduce HIV-related stigma.

There is no similar stigma-reducing campaign for tuberculosis, says Daftary. She argues that along with messaging about contagiousness and distancing, patients need to hear that tuberculosis is curable. “Yes, it’s contagious, but you can become noninfectious” with treatment, she says. The stigma and fear that still trail tuberculosis can stop people from seeking the care and antibacterials they need.

In 2020, there were an estimated 1.5 million deaths from tuberculosis. (It was the top infectious disease killer from a single pathogen globally in 2019, but COVID-19 took the lead spot in 2020.) Though the worldwide toll of tuberculosis is glaring, the disease remains largely out of view in the United States, where it tends to strike people who are poor, homeless or in prison, or immigrants from countries with high case rates — people who are already facing inequities in society.

In an echo of the time when sanatoriums kept families apart, the COVID-19 pandemic has separated many from ill loved ones. In the spring of 2020, Dockrell’s mother developed COVID-19 in her nursing home. Visitors weren’t allowed inside. So Dockrell stood by the entrance, eyes lifted to a fifth-floor window to see her mother, much as she had looked up as a child to see her brother’s face.

— Aimee Cunningham

Emerging fungal threats

Tyson Bottenus once captained an 80-foot schooner called the Aquidneck. He sailed tourists off the coast of Newport, R.I., discussing the area’s history and sites. In January of 2018, he had finished another season at the schooner’s helm and had recently gotten engaged to his partner of many years, Liza Burkin. To celebrate, the couple, avid cyclists who’ve ridden through New Zealand and Japan, set off for a bike tour of Costa Rica.

At one point, riding to Montezuma, on the Nicoya Peninsula, “we were on this very, very dusty road for a long time,” Bottenus says. “It was a dirty, sandy, hard-packed road.” While going downhill, Bottenus crashed, badly scraping his elbow. The next morning, he went to a doctor, who spent about an hour picking out little rocks and cleaning dirt from the wound before she bandaged it up. The injury kept him from swimming but otherwise didn’t disrupt the trip.

About a month after returning to Rhode Island, Bottenus started having headaches. And he couldn’t control his mouth properly — his speech was off, and he was drooling. Eventually his doctor ordered an MRI. It revealed a lesion in his brain. “My first thought was, I must have some sort of cancer,” he says. “I’m only 31…. I’m way too young for this.”

It wasn’t cancer. Nor was it one of a series of infections proposed as doctors searched for a diagnosis. Bottenus had two brain biopsies, but neither provided enough tissue to identify the problem.

In August of 2018, Bottenus became very ill and was hospitalized. He was having problems with his right leg and couldn’t walk. His mouth muscles weren’t working. And he could no longer tie the drawstring of his pants with a square knot. Sailors commonly use a square knot to fasten two ropes together. It’s a knot Bottenus has taught others and could previously do with his eyes closed. “I’m a captain of boats, supposedly,” he remembers thinking. “I am not the person I think I am.”

Doctors performed a third brain biopsy, taking a slightly larger amount of tissue this time. Finally, Bottenus got a diagnosis: He had a fungus growing in his brain. It was a black mold called Cladophialophora bantiana that can breach the blood-brain barrier. The best medical guess is that Bottenus was exposed in Costa Rica — likely from the dusty air he inhaled, or perhaps from his debris-laden elbow wound.

People are exposed to fungi all the time, and usually we get along just fine. Fungi are in the air, in the soil and in us, part of the community of microbes that live in our bodies. Root-associated fungi form crucial partnerships with more than 90 percent of land plant species, helping the plants absorb water and nutrients from the soil in exchange for food. But some fungi are also infectious pathogens that can cause pneumonia, blindness and meningitis.

An estimated 2 million people globally die from fungal diseases each year. And pathogenic fungi tend to harm those with the least means. Fungal keratitis, an infection of the cornea, strikes more than a million people annually, estimates suggest, and blinds around 600,000. Found in tropical and subtropical areas, the disease — usually treatable with early diagnosis — often impacts young, poor agricultural workers. Chronic pulmonary aspergillosis, as another example, is a lung infection that commonly occurs in people already burdened with lung damage from tuberculosis.

Fungal infections are also a threat to people with HIV, a virus that weakens the immune system. When defenses are down, typically benign fungi can take advantage of the gaps in our immunological armor and cause an infection. In resource-rich areas, fungal infections have decreased with earlier HIV diagnosis and the availability of antiretroviral drugs. But where people can’t get early, consistent HIV care, fungal infections have stubbornly persisted as dangerous complications to HIV and AIDS.

Advances in modern medicine that have extended lives have also led to opportunities for fungi to infect. Organ transplantation and treatments for cancer and autoimmune diseases have created a large population of people who regularly take drugs that inhibit components of the immune system, leaving these patients susceptible to fungal invaders.

Some fungi have emerged more recently as threats to human health. First reported in 2009, as a source of an ear infection, Candida auris unexpectedly caused outbreaks of invasive infections — those that reach the blood or spinal fluid or other sterile areas of the body — on three continents a few years later. Hospital patients are at risk from the yeast, which has proved resistant to certain antifungal drugs.

Scientists have proposed that the emergence of C. auris as a danger to people may be tied to our warming climate, with the yeast gaining temperature tolerance in the environment. That could explain why C. auris can replicate inside human bodies, a niche that’s usually too hot for many fungi.

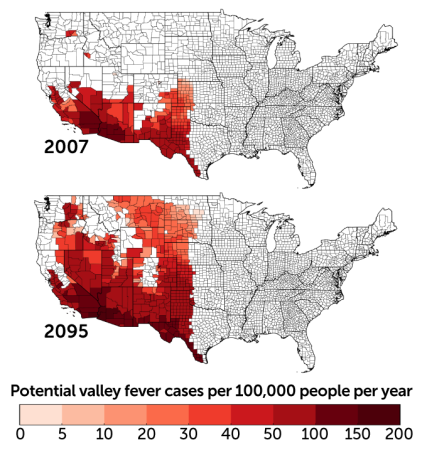

The warming climate may make other fungal pathogens more widespread. Coccidioides, a fungus that shape-shifts from a soil-dwelling, fluffy mold to aerosolized spores to round, parasitic cells at the site of infection, causes a disease called valley fever. Someone who breathes in the spores might not have symptoms at all or could become fatigued and short of breath and develop a fever, cough and night sweats. It’s among the fungal pathogens that also strike people with healthy immune systems. In the United States in 2019, 18,407 cases were reported to the Centers for Disease Control and Prevention.

Coccidioides is primarily found in southwestern states, but global warming could change that. “The entire western United States has been identified as potential habitat,” says medical mycologist Bridget Marie Barker of Northern Arizona University in Flagstaff. The fungi thrive in hot, dry environments where there are brief, heavy rains. Global warming is expected to make western states hotter and alter precipitation patterns. Research reported in GeoHealth in 2019 predicts that by 2100 the areas impacted by Coccidioides could expand north in the western half of the country and annual cases could increase by 50 percent.

Despite the heavy burden of fungal diseases and the potential for that burden to grow, there are no approved vaccines against fungi, and the medicine cabinet of fungal remedies does not go deep. There are only a few different types of antifungal drugs. It’s difficult to develop antifungals in part because fungi are eukaryotes — like us, and unlike bacteria, their cells have distinct membrane-bound compartments, including a nucleus that holds their genetic material. That shared biology means that if a drug can kill fungi, it could harm us too.

The antifungals that do exist “are not spectacular,” says pediatric infectious disease physician and medical mycologist Damian Krysan of the University of Iowa Carver College of Medicine. Even with treatment, invasive fungal infections are difficult to survive. For example, an invasive infection with the mold Aspergillus has a mortality rate ranging from 40 to 90 percent in immunocompromised patients, depending on where the infection is, among other factors. For a cancer patient with a great prognosis who develops an Aspergillus infection, Krysan says, “you can be cured of your cancer and die of your fungal infection.”

Even diagnosing a fungal infection is challenging. For one, there are fewer diagnostic tests specific to fungi than there are for viruses and bacteria. Another problem is that the symptoms of a fungal infection can resemble those caused by other pathogens. And fungi grow slowly, so it takes longer to confirm that a blood or tissue sample is infected with a fungus. A delayed diagnosis can mean delayed treatment, which complicates the chances of recovery.

Into this uncertain medical terrain landed Bottenus, a previously healthy young man who acquired an exceedingly rare fungal infection in his brain.

Bottenus has been treated with antifungal drugs and steroids. It’s been a “vicious cycle,” says Robbie Goldstein, an infectious disease physician at Massachusetts General Hospital who has been part of Bottenus’ team of doctors. The infection causes inflammation that puts dangerous pressure on Bottenus’ brain, and the steroids reduce that inflammation. But the steroids also suppress his immune system, impairing his ability to clear the fungus. Whenever Bottenus’ doctors have tried to taper his steroids, the inflammation in his brain has gotten worse.

As the COVID-19 pandemic began, Bottenus became nervous that being immunocompromised put him at greater risk of getting sick from the virus. He decided to stop taking the steroids. The resulting inflammation triggered a stroke at the end of March 2020. Pandemic restrictions prevented Burkin from going to the hospital with Bottenus or visiting him during the weeks he was there.

Bottenus is still coping with the effects of the brain infection and the stroke. He has lost most of his peripheral vision. His speech is altered. Navigation is a struggle — he has a hard time finding his way around even in his home. Bottenus kept telling himself that he’d wake up one day and be back to where he used to be, “but of course that’s a fantasy,” he says.

Yet other brain functions don’t seem to have been harmed. Bottenus was able to begin graduate school in the fall of 2020, and he has done well in his classes. “He can’t really bike across our neighborhood by himself,” Burkin says, “but he can write like a 20-page research paper.”

The whole experience has changed Bottenus’ personality, says Burkin. Her adventurous partner has become reliant on structure and routine. “I think that’s really normal for people who’ve gone through a life-threatening disease, where you have no control,” she says. Living, Bottenus says, “is as much adventure as I can get.”

In the published cases of C. bantiana infections, the death rate can be as high as 70 percent. Many times the infection isn’t diagnosed until a patient’s autopsy, Goldstein says. For those who live with this infection, he says, the studies “don’t say what that life is like.” Bottenus and Burkin are crossing uncharted medical territory in real time.

For now, Bottenus is a passenger on friends’ boats. When he bikes, he follows Burkin, or they ride a tandem. He makes sure to have his cell phone when he goes out, in case he gets lost. “He used to literally be able to do celestial navigation and lead us on bike tours across continents,” Burkin says. “I don’t know that it is permanent, these changes. I don’t think there’s any way to know.”

But “I can’t blame this fungus,” she says. “We were out on a bike ride and some dirt got into his body.”

Sometimes a doctor meets a patient after they’ve already been seriously harmed by an infection, Goldstein says. But he got to know Bottenus from the beginning, when Bottenus was a sailing captain, searching for a diagnosis. Goldstein says this experience has made him really aware, when he’s talking to a patient, of how devastating an infection can be to the life they had before.

“Before there was an infection,” he says, “there was a person.”

— Aimee Cunningham

The aftermath

At the start of the COVID-19 pandemic, Lakshmi Krishnan and S. Michelle Ogunwole were internists at Johns Hopkins Hospital in Baltimore. Patients were dying. There wasn’t enough personal protective equipment for staff. So much about COVID-19 was still unknown. “It was terrifying,” says Krishnan, now at Georgetown University in Washington, D.C. Some of her colleagues were making wills.

And the patients were largely alone. Ogunwole, also a health disparities researcher at Johns Hopkins University School of Medicine, remembers an older Black woman who had been hospitalized with COVID-19 for months. She was well enough to move out of the intensive care unit but was extremely debilitated because she hadn’t walked in so long, Ogunwole says. A tube in her windpipe made it difficult to talk.

The woman’s family was not there. “There was nobody to speak for her,” Ogunwole says. It made Ogunwole think of her mother, “an incredibly vibrant person … the life of the party.” If it had been her mom in that bed, no one would have known who she was. That she likes to dance for no reason. That she picks up a different sport every year.

Ogunwole felt her patient’s sorrow and loss. Millions are reckoning with the pandemic’s cost to their physical and mental health. The toll is not unlike that of tuberculosis, AIDS, fungal diseases, childhood infectious diseases or the many other outbreaks and epidemics of the last century.

We should know by heart how lives — and whose lives — are changed by infectious disease outbreaks. But time and again, there’s a clamor to leave outbreaks behind us. And the trauma and the inequities stay. The question this time, says historian Nancy Bristow of the University of Puget Sound in Tacoma, Wash., is, “Can we do a better job of continuing to hear the stories of those who have been affected?”

The stories of children who have lost one parent or both, for example. For her book American Pandemic: The Lost Worlds of the 1918 Influenza Pandemic, Bristow interviewed Lillian Kancianich, born in a small town in North Dakota. Kancianich’s mother died of influenza when Kancianich was a baby. The story was that her mother was out sweeping the porch on Armistice Day and then was never seen again, Bristow says.

Kancianich bounced among extended family members’ houses for two years before she was able to call one home. She told Bristow that as a child, when asked what she wanted to be when she grew up, her answer was a stepmother. “The loss of that parent changed her life completely,” Bristow says.

These stories have continued. In roughly the first year of the COVID-19 pandemic, an estimated 1 million children worldwide experienced the death of a mother, a father or both, researchers reported in July in the Lancet. The number is staggering, and yet a fraction of the estimated 17 million children who have lost one or both parents to AIDS in roughly the last three decades, according to the United States Agency for International Development. Ninety percent of those children live in sub-Saharan Africa.

Nearly 105,000 children in the United States are facing life without one or both parents due to COVID-19, the study in the Lancet found. “Evidence from previous epidemics shows that ineffective responses to the death of a parent or caregiver, even when there is a surviving parent or caregiver, can lead to deleterious psychosocial, neurocognitive, socioeconomic, and biomedical outcomes for children,” the authors write.

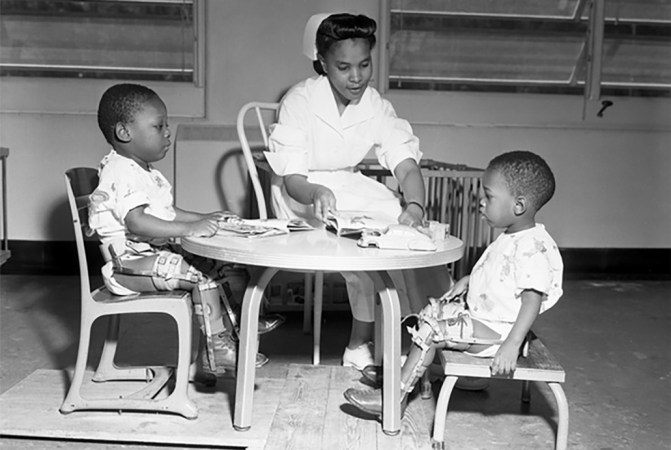

An earlier study on parental death due to COVID-19 in the United States took race and ethnicity into account. While Black children make up only 14 percent of children in the United States, they made up 20 percent of those who had lost a parent, researchers reported in April in JAMA Pediatrics.

Krishnan remembers noting, while treating patients early in the pandemic, “It’s all my Black and brown patients that are getting upgraded to the intensive care unit.” Research has revealed a disproportionate impact on Black, Latino and Native Americans in terms of infection, hospitalization and death.

“COVID has unveiled disparities in health,” but the pandemic didn’t create them, says A. Oveta Fuller, a virologist at the University of Michigan in Ann Arbor. “This is not new.”

At the turn of the 20th century, Black Americans’ death rates were higher than white Americans’ for tuberculosis, pneumonia and, in children, diarrheal disease and diseases of malnutrition, wrote W.E.B. Du Bois in 1906, “an indication of social and economic position.” Racism and segregation restricted access to health care, housing and wealth for Black Americans.

The accounts from 1918 in Black newspapers in Philadelphia and Chicago leave the impression that the rates of influenza in Black communities were lower than in white communities, says medical historian and physician Vanessa Northington Gamble of George Washington University in Washington, D.C. Yet it was clear that the number of Black people who did have flu “overwhelmed the medical care facilities that were available to them,” she says.

White hospitals wouldn’t accept Black patients or sent them to the basement or other segregated areas. Black hospitals didn’t have the capacity for everyone who needed care. So Black communities came together to provide for their own, Northington Gamble says. When every one of the 75 beds at Frederick Douglass Memorial Hospital in Philadelphia filled up, its medical director, with no support from the city’s board of health, managed to open an emergency annex for Black patients. Black women volunteers in Chicago made house visits to tend to the sick.

Black nurses also cared for Black influenza patients in their homes. Bessie B. Hawes, a 1918 graduate of the Tuskegee Institute’s nursing program, described her experience caring for a family of 10, “dying for the want of attention,” in a rural area of Alabama. “As I entered the little country cabin, I found the mother dead in bed, the father and the remainder of the family running temperatures of 102 to 104˚. Some had influenza and others had pneumonia…. I saw at a glance that I had much work to do…. I milked the cow, gave medicine, and did everything I could to help conditions.”

One of the legacies of the 1918 pandemic is “this long-standing tradition of the Black community of standing up and taking care of itself,” says Northington Gamble. The tradition continues, she says, with the work of organizations including the Black Doctors COVID-19 Consortium, created to make it easier for Black communities in the Philadelphia area to receive COVID-19 testing and vaccination.

When Black people did become ill with influenza in the 1918 pandemic, they were more likely to die than white people with influenza, researchers reported in 2019 in the International Journal of Environmental Research and Public Health. That higher likelihood of death “could be attributed to several factors still present today: higher risk for pulmonary disease, malnutrition, poor housing conditions, social and economic disparities, and inadequate access to care,” Krishnan, Ogunwole and their colleague Lisa Cooper of Johns Hopkins University wrote last year in Annals of Internal Medicine.

The societal systems that foster racial discrimination and lead to health inequities must be addressed, says Ogunwole. “There is no amount of resilience that overcomes that.”

The suffering of the 1918 pandemic was overshadowed by the end of World War I, says Bristow. The war was publicly memorialized with monuments and a holiday. “The pandemic goes unspoken,” she says, because it doesn’t fit with the victorious narrative of the war. Only a few fictional works that drew upon the 1918 pandemic were published in the following decades.

But those books — such as Pale Horse, Pale Rider, by Katherine Anne Porter, who survived her influenza illness but whose fiancé died — as well as private letters reveal deep loss, Bristow says. “It’s very clear that the trauma stayed with people, even if there’s not public grieving, and that’s what’s so sad about it,” she says. “There was no public acknowledgement of all of this loss.” The country marched ahead, and people “were expected to hop in line and march along too.”

Krishnan, also a medical humanities scholar and a historian of medicine, says we must preserve a record of the COVID-19 pandemic. The many oral history projects spearheaded by universities and libraries are a hopeful sign that stories of how people felt, lived, loved and died won’t be lost. “We cannot forget,” Krishnan says, “because it will happen again.”

— Aimee Cunningham

Editor’s note: This story was published October 27, 2021.

From the archive

Smallpox increases where laws are lax

A Science News Bulletin report describes an increase in the prevalence of smallpox in the United States, attributing the increase at least in part to “laxity in the enforcement of vaccination laws.”

Bacteria-eaters discovered

The discovery of a parasite on bacteria, dubbed a “bacteriophage,” or bacteria-eater, could lead to cures for dysentery, typhoid fever, hemorrhagic septicemia and bubonic plague, according to an announcement from the Pasteur Institute.

Penicillin for All

A researcher shares a detailed recipe so that any doctor could make at home “a supply of crude penicillin for treatment of … infections in or near the surface of the skin.”

Polio Research Brings Hope

“Sometimes crippling and occasionally killing, poliomyelitis, also called infantile paralysis, is perhaps the most terrifying of any disease to parents and children alike.”

Vaccines: Past to Future

Science News medical writer Faye Marley looks back at the development of vaccines and expresses hope for what’s ahead.

Antibiotics in Animal Feeds: Threat to Human Health?

Medical sciences writer Joan Arehart-Treichel asks whether antibiotics in animal feeds are a threat to human health.

Cancer Virus Redux

Scientists struggle to understand the role of viruses in cancer: “after a decade out of the limelight, they’re back again.”

AIDS: Disease, Research Efforts Advance

A report from the first International AIDS conference, held in Atlanta, describes “a grim picture of a disease that remains one step ahead of the researchers seeking ways to stop it.”

Modern Hygiene’s Dirty Tricks

Science writer Siri Carpenter introduced Science News readers to the hygiene hypothesis, the idea that microbe-free environments can lead to weakened immune systems.

Know Your Enemy

Researchers begin teasing out the molecular underpinnings that make the tuberculosis bacterium so infectious and enable it to evade the body’s immune response.

Experimental drugs and vaccines poised to take on Ebola

Writer Nathan Seppa describes the experimental drugs and vaccines that might halt the Ebola epidemic.

A 5,000-year-old mass grave harbors the oldest plague bacteria ever found

Bacterial DNA recovered from a Scandinavian woman buried in a mass grave around 5,000 years ago could yield clues to the origins of the plague.

For people with HIV, undetectable virus means untransmittable disease

Science News biomedical writer Aimee Cunningham covers the strides made in treating and preventing HIV, as well as the effort to get lifesaving medications in the hands of those who need them.
