Computers can do all sorts of amazing things, from searching the Web at an incredible rate to playing chess at a grandmaster level. Yet some tasks that are easy for people to perform remain remarkably difficult for computers. For example, computer programs have a hard time reading distorted text or deciphering images.

In the last few years, computer scientists have worked out an ingenious security scheme that takes advantage of such a mismatch. The scheme relies on computer programs that can, without further human intervention, automatically generate and grade tests that the computer programs themselves can’t easily pass. Yet most people generally have no difficulty passing the same tests.

It’s an intriguing way of distinguishing between the actions of a computer program and those of a person.

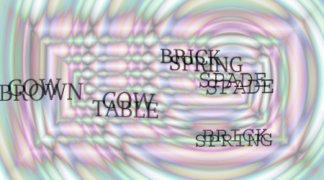

Here’s an example. The following computer-generated image contains seven different words, randomly selected from a dictionary and displayed so that they overlap and fall against a complex, colored background pattern. A person can almost always identify at least three of the words. A computer program would typically have great difficulty doing so.

Such a puzzle is known as a CAPTCHA. The word was coined by computer scientist Manuel Blum of Carnegie Mellon University. It stands for “Completely Automated Turing Test to Tell Computers and Humans Apart.”

Traditionally, a Turing test is one in which a person is allowed to ask questions of two “participants,” one of which is a person and the other is a computer, in an effort to distinguish human from computer responses. In the case of CAPTCHAs, a computer is actually the judge.

Blum, his students Luis von Ahn and Nicholas J. Hopper, and John Langford of IBM originally developed CAPTCHAs in response to a practical problem. Companies such as Yahoo! allow people to sign up for free e-mail accounts. However, people interested in sending out mass mailings (spam) can take advantage of the situation by using computer programs (or bots) to sign up for hundreds of accounts automatically in a very short period of time. They can then use the accounts to distribute spam.

The answer is to deploy some sort of CAPTCHA. For example, a person can readily identify words or letters in a distorted image, retype the message, and confirm that a human is truly in the loop. This would be much more difficult for a typical bot to do.

Indeed, Yahoo! and a variety of other companies and organizations now use this strategy to control registration, confirm transactions, check voting in online polls, and perform other chores in which it’s important to make sure that only people are directly involved. Without such a safeguard, for instance, it’s easy to imagine how cleverly deployed computer programs could repeatedly vote in online polls to greatly distort the results.

CAPTCHA puzzles come in a variety of forms. You can try examples of a type known as the GIMPY test, which is based on distorted text, at http://www.captcha.net/cgi-bin/gimpy. Other variants involve pattern recognition problems, distorted visual images, or even sounds. In each case, the aim is to serve up instances of puzzles that people can solve but current computer programs can’t.

Most CAPTCHAs involve some sort of visual image. “Unfortunately, images and sound alone are not sufficient: There are people who use the Web that are both visually and hearing impaired,” von Ahn, Blum, and Langford note in the February 2004 Communications of the ACM. “The construction of a CAPTCHA based on a text domain such as text understanding or generation is an important open problem for the subject.”

Then there’s the challenge of breaking CAPTCHAs. How easily can they be solved by a computer?

From the time the first CAPTCHAs were proposed, people expended a great deal of effort developing computer program that could break them. In the end, these efforts eventually succeeded, particularly those that relied on advanced image recognition techniques.

One recent paper in the College Mathematics Journal, by Edward Aboufadel, Julia Olsen, and Jessie Windle, suggests that certain types of text-based CAPTCHAs are vulnerable to attacks that use fairly elementary mathematical techniques. To avoid such failures, it’s best to use nonstandard fonts, the authors warn.

Von Ahn and his coworkers regard this game of hide and seek as a win-win situation. “Either the CAPTCHA is not broken and there is a way to differentiate humans from computers,” the researchers write, “or the CAPTCHA is broken and a useful [artificial intelligence] problem is solved.”

Indeed, some of the efforts that have gone into solving visual CAPTCHA puzzles may yet lead to improved optical-character recognition technology, making it easier to convert printed into digital text with far fewer errors and snags.