An audio compact disc (CD) holds up to 74 minutes, 33 seconds of music, just enough for a complete recording of Ludwig van Beethoven’s Ninth Symphony on one disc. Each second of music is stored as a string of about 1.5 million bits, represented as tiny, narrow pits on the disc’s surface. These pits range from 0.9 to 3.3 micrometers in length and correspond to the 1s and 0s of the digital signal encoding the sound. It takes more than 6 billion bits, recorded on a spiral track several kilometers long but only 0.5 micrometer wide, to capture the entire symphony.

Mathematical elements make significant contributions to the crisp digital sound delivered by an audio CD. Sampling theory, for instance, guides how often sound waves should be measured to generate a digital signal that adequately reproduces the original sound.

For a CD, sound waves are measured at a constant rate of 44.1 kilohertz. Once sampling has taken place, each measurement is represented as a sequence of bits. In a 16-bit audio format, the signal voltage, averaged over the sampling interval, is converted to a discrete value–one of 216 (or 65,536) levels.

Mathematically, the process of going from a continuous analog signal (sound waves) to a discrete digital representation (pits on a CD’s surface), then back to a continuous signal (sound waves) is analogous to sampling a function to obtain a set of discrete values, then recovering the original function from the samples.

In principle, infinitely many functions can have the same sampled values. So, recovering a function successfully from a given set of values generally requires assumptions about the nature of the functions involved in any particular signal-processing application.

Mathematicians have been interested in quantifying the conditions under which it is possible to recover particular classes of functions, given various sampling strategies. For example, for certain signals, it may be better to vary the sampling interval instead of sampling uniformly.

Such studies could lead to the development of alternative sampling schemes to achieve clearer digital recordings, sharper pictures, and more precise MRI images than possible with conventional techniques, which usually invoke uniform sampling.

Mathematicians Akram Aldroubi of Vanderbilt University and Karlheinz Gröchenig of the University of Connecticut describe one such effort in that direction in the current issue of SIAM Review.

No sampling procedure can fully capture every detail of an analog signal. However, “our [sampling] theory. . .can produce more accurate digital representations of all kinds of samples, including those that [conventional] methods handle poorly or cannot handle at all,” Aldroubi explained in an article in Exploration, Vanderbilt University’s online research magazine.

The backbone of conventional methods is the so-called Nyquist-Shannon sampling theorem, which was first articulated by Harry Nyquist (1889–1976) in 1928 and mathematically proved by Claude E. Shannon (1916–2001) in 1949. For uniform sampling, the highest signal frequency that can be captured accurately is half the sampling frequency. This limit means that an audio CD, for instance, cannot reproduce sounds having frequencies greater than 22,050 hertz.

Aldroubi and Gröchenig have developed a new sampling theory that can handle situations where having such a cut-off frequency is undesirable. Their algorithms allow the fast and robust reconstruction of signals from digital samples even when sampling is nonuniform, measurements are imperfect, and noise disturbs the signals. By using nonuniform sampling, it’s possible to sample at frequent intervals when the signal changes rapidly and less often when the signal changes slowly.

The new reconstruction algorithms are iterative. The first step provides just a rough approximation of what the original function is like–more the type of function (or mathematical “space” in which it resides) than its actual shape. The approximation is then compared to the data samples to determine how well they match. With each iteration, the function becomes better defined, and the discrepancies between function and data diminish.

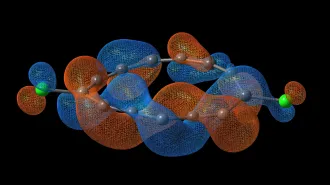

Technically, the reconstruction methods bring together “wavelet theory, frame theory, reproducing kernel Hilbert spaces, approximation theory, amalgam spaces, and sampling,” the mathematicians report.

The ability of the theory developed by Aldroubi and Gröchenig to handle nonuniform sampling suggests a variety of applications. For example, even when a signal is uniformly sampled, data may be lost (say, scratches on a CD or missing data from an MRI image). The result is a nonuniform sequence of samples. The new algorithms allow you to fill in the missing information in such a way that the major features of the original are restored.