To Err Is Human

Influential research on our social shortcomings attracts a scathing critique

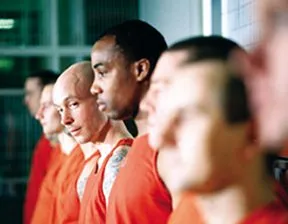

It’s a story of fear, loathing, and crazed college boys trapped in perhaps the most notorious social psychology study of all time. In the 1971 Stanford Prison Experiment, psychologist Philip G. Zimbardo randomly assigned male college students to roles as either inmates or guards in a simulated prison. Within days, the young guards were stripping prisoners naked and denying them food. The mock prisoners were showing signs of withdrawal and depression. In light of the escalating guard brutality and apparent psychological damage to the prisoners, Zimbardo halted the study after 6 days instead of the planned 2 weeks.

Zimbardo and his colleagues concluded that anyone given a guard’s uniform and power over prisoners succumbs to that situation’s siren call to abuse underlings. In fact, this year, in a May 6 Boston Globe editorial, Zimbardo asserted that U.S. soldiers granted unrestricted power at Iraq’s Abu Ghraib prison inevitably ended up mistreating detainees—just as the college boys did in the famous experiment.

Rot, says psychologist S. Alexander Haslam of the University of Exeter in England. No broad conclusions about the perils of belonging to a powerful group can be drawn from the Stanford study, in his view. Abuses by the college-age guards stemmed from explicit instructions and subtle cues given by the experimenters, Haslam asserts.

However one interprets the Stanford Prison Experiment, it falls squarely in the mainstream of social psychology. Over the past 50 years, researchers have described how flaws in study participants’ behaviors and thinking create all sorts of mishaps in social situations.

This accounting of our monumental aptitude for ineptitude and cruelty has appealed both to social scientists and to the public; these experiments are among the most celebrated products of social science.

They’re also profoundly misleading, say Joachim I. Krueger of Brown University in Providence, R.I., and David C. Funder of the University of California, Riverside. Mainstream social psychology emphasizes our errors at the expense of our accomplishments, the two psychologists contend. Also, because the work uses artificial settings, it doesn’t explain how social behaviors and judgments work in natural situations, they believe.

In an upcoming Behavioral and Brain Sciences, Krueger and Funder compare much of current social psychology to vision research more than a century ago. At that time, visual illusions were considered reflections of arbitrary flaws in the visual system.

In 1896, a French psychologist proposed that visual illusions arise from processes that enable us to see well in natural contexts. For instance, during their years of visual experience, people come to achieve accurate depth perception by perceiving a line with outward-pointing tails as farther away than a line with inward-pointing tails. The result is the illusion that a line is longer when adorned with outward-facing tails than with inward-facing ones.

Subsequently, vision scientists unraveling illusions focused on elements of visual skill rather than on apparent shortcomings in the visual system. Krueger and Funder propose what they say is a similar shift—in which psychologists will consider how different behaviors and thought patterns might have practical advantages, even if they sometimes lead to errors.

Many social psychologists continue to search for what they regard as inherent flaws in people’s behaviors and attitudes. In several responses published with Krueger and Funder’s critique, these researchers describe their work as a necessary first step toward identifying ways to improve our social lives by learning to avoid errors in behavior and thought.

Krueger prefers an alternative approach. “A more balanced social psychology would seek to understand how people master difficult behavioral and cognitive challenges and why they sometimes lapse,” he says.

Today, investigators interested in how evolution, culture, and the brain shape mental life are already on this track, Krueger says, as they probe the accuracy and practical impact of various social behaviors and beliefs.

Group peril

Social psychology’s pioneers, with few exceptions, believed that individuals lose their moral compass in groups and turn into “irrational, suggestible, and emotional brutes,” Krueger and Funder argue. Laboratory studies in the 1950s and 1960s laid the groundwork for the Stanford Prison Experiment by probing volunteers’ conformity in the face of social pressure, chilling obedience to cruel authority figures, and unwillingness to aid needy strangers.

Yet the underlying complexity of such findings has often been ignored, Krueger and Funder say. In 1956, for instance, Solomon Asch placed individual volunteers in groups where everyone else had been instructed to claim that drawings of short lines were longer than drawings of long lines. About one-third of the time, volunteers acceded to the crowd’s bizarre judgments.

Asch also studied conditions where volunteers tended to resist conformity’s pull. For example, when two volunteers entered a group, they often supported each other in a minority opinion. However, the story of individuals’ submission to strange group beliefs received far more public attention than the other findings did.

Stanley Milgram upped the ante in 1974 by studying obedience to a malevolent authority figure. In his notorious work, an experimenter relentlessly ordered participants to deliver what they thought were ever-stronger electrical shocks to an unseen person whose moans and screams could be heard. As many as 65 percent of participants administered what they must have thought were highly painful, and perhaps even lethal, voltages (SN: 6/20/98, p. 394).

In further experiments, Milgram found that in certain conditions, obedience to the instruction to shock someone dropped sharply. These included situations in which two authorities gave contradicting orders or the experimenter gave instructions to shock himself. Yet these variations were overlooked, and Milgram’s work achieved fame as a dramatic exposé of our obediently brutal natures.

A third set of studies demonstrated a seemingly uncaring side to human nature. This work grew out of the infamous 1964 murder of a New York City woman. Dozens of apartment dwellers did nothing as they heard the woman’s screams and watched her being stabbed to death.

Bibb Latané of Florida Atlantic University in Boca Raton and John Darley of Princeton University set up simulated emergency situations in the late 1960s and demonstrated that the proportion of people attempting to aid a person in need declined as the number of bystanders increased. The presence of others depresses a person’s sense of obligation to intervene in an emergency, as each bystander waits for someone else to act first, the researchers concluded. The nature of certain social situations encourages people to act in unseemly ways, Darley says.

Krueger and Funder don’t draw that conclusion from these studies and others like them. They counter that there may be underlying factors, such as concerns for personal safety derived from real-life experiences, that make that behavior more sensible than it seems.

Our responses to real-life situations remain poorly understood, they contend. In their view, researchers have yet to probe thoroughly how behaviors emerge from the interplay between individuals’ personalities and the situations in which they find themselves.

Guards gone wild

The Stanford Prison Experiment offers a dramatic example of how scientists who agree with Krueger and Funder’s critique can revise and draw new lessons from a classic social psychology investigation.

After noting the scarcity of research inspired by Zimbardo’s report, Exeter’s Haslam organized his own exploration of group power in December 2001. Because the 1971 Stanford experiment later attracted charges of ethical transgressions, an independent, five-person ethics committee monitored the new study.

Haslam built an institutional environment with three cells for 10 prisoners and quarters for five guards inside a London film studio, where cameras recorded all interactions. In May 2002, the British Broadcasting Corporation, which funded the project, aired four 1-hour documentaries on the “BBC Prison Study.” Academic papers on the study are currently in preparation.

Haslam’s interests differed significantly from those of Zimbardo. He wanted to draw conclusions about groups with hierarchies and, after setting up some ground rules, he removed himself from what went on.

Before the study got under way, guards were asked to come up with their own set of prison rules and punishments for violators. Haslam had stipulated that on the study’s third day guards could promote any prisoners to guard status who showed “guardlike qualities” and that the prisoners would know about that provision.

In contrast, Zimbardo in the earlier experiment told guards that it was necessary to exert total control over prisoners’ lives and to make them feel scared and powerless.

From the start, the guards in Haslam’s experiment found it difficult to trust one another and work together. Moreover, unlike the guards in the earlier experiment, they were generally reluctant to impose their authority on prisoners.

On the third day, one prisoner received a promotion to guard. The remaining prisoners then began to identify strongly as a group and to challenge the legitimacy of the guards’ rules and punishments.

A new prisoner entered the study on day 5 and began to question the guards’ power and certain aspects of the study, such as what he regarded as excessive heating of the prison. From then on, in a rapidly shifting chain of events, prisoners broke out of their cells, and guards joined prisoners in what they called a commune.

However, two guards and two prisoners fomented a counterrevolution to set up strict authoritarian rule. Instead of opposing that group, the members of the commune became demoralized and depressed. As the situation deteriorated, Haslam’s team stopped the experiment after 8 days, 2 days before its scheduled conclusion.

The BBC Prison Study indicates that tyranny doesn’t arise simply from one group having power over another, Haslam says. Group members must share a definition of their social roles to identify with each other and promote group solidarity. In his study, volunteers assigned to be prison guards had trouble wielding power because they failed to develop common assumptions about their roles as guards.

“It is the breakdown of groups and resulting sense of powerlessness that creates the conditions under which tyranny can triumph,” Haslam holds.

Imperfect minds

During the past several decades, social psychology has moved from reveling in people’s bad behavior to documenting faults in social thinking, according to Krueger and Funder. This shift reflects the influence of investigations, launched 30 years ago, by psychologists studying decision-making. That work showed that people don’t use strict standards of rationality to decide what to buy or to make other personal choices.

Investigators have uncovered dozens of types of errors in social judgment. However, methodological problems and logical inconsistencies mar much of the evidence for these alleged mental flaws, Krueger and Funder assert.

For instance, volunteers who scored poorly on tests of logical thinking, grammatical writing, and getting jokes tended to believe that they had outscored most of their peers on those tests, according to a 1999 report by Cornell’s Justin Kruger and David Dunning. In contrast, high scorers on these tests slightly underestimated how well they had done compared with others.

Kruger and Dunning concluded that people who are incompetent in a task can’t recognize their own ineptitude, whereas expertise in a task leads people to assume that because they perform well, most of their peers must do the same.

Krueger, however, says, “This study exemplifies the rush to the conclusion that most people’s social-reasoning abilities are deeply flawed.”

Krueger and Funder hold that the results are undermined by a statistical effect known as regression to the mean. If participants take two tests, each person’s score on the second test tends to revert toward the average score of all test takers. Thus, a person who scored high on the first test tends to score lower on the second. Similarly, low scorers from the first test tend to come in higher on the second. The same pull toward the average operates whenever two measures are imperfectly correlated, as test scores and people’s estimates of their own scores are. So, the Kruger and Dunning finding doesn’t necessarily indicate that low scorers lack insight into the quality of their performance, Krueger adds.
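Regression to the mean is easy to see in a small simulation. The Python sketch below is an illustration only, not a reanalysis of the Kruger and Dunning data: it assumes each simulated person has a fixed ability plus independent random noise on each of two tests, and every number in it (average score, spreads, sample size) is a hypothetical choice.

```python
import random
import statistics


def simulate_two_tests(n_people=10_000, ability_sd=10.0, noise_sd=10.0, seed=0):
    """Return (first_score, second_score) pairs for simulated test takers.

    Assumption for illustration: score = stable ability + independent noise.
    """
    rng = random.Random(seed)
    people = []
    for _ in range(n_people):
        ability = rng.gauss(50.0, ability_sd)  # hypothetical average of 50
        first = ability + rng.gauss(0.0, noise_sd)
        second = ability + rng.gauss(0.0, noise_sd)
        people.append((first, second))
    return people


def quartile_means(people):
    """Mean first- and second-test scores for the bottom and top quartiles,
    where quartiles are defined by the first test only."""
    ranked = sorted(people, key=lambda pair: pair[0])
    quarter = len(ranked) // 4

    def summarize(group):
        return (
            statistics.mean(pair[0] for pair in group),
            statistics.mean(pair[1] for pair in group),
        )

    return {"bottom": summarize(ranked[:quarter]), "top": summarize(ranked[-quarter:])}


if __name__ == "__main__":
    for label, (first, second) in quartile_means(simulate_two_tests()).items():
        print(f"{label} quartile: first test {first:.1f}, second test {second:.1f}")
```

Nobody's ability changes between the two tests in this setup, yet the bottom quartile's average rises and the top quartile's average falls on the second test, simply because extreme first scores are partly luck.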

In a published response, Dunning doesn’t counter the statistical challenge but says that his results have been confirmed by further studies. He contends that, in general, social psychologists “would do well to point out people’s imperfections so that they can improve upon them.”

Krueger and Funder follow another scientific path. Instead of assuming that behavior and thinking are inherently flawed, they see errors as arising out of adaptive ways of interacting with the world.

Krueger studies whether individuals see themselves in an unduly positive light and, if they do, whether such self-inflation contains benefits as well as costs.

Funder observes exchanges between pairs of volunteers to study how each individual assesses the other’s personality. He determines which of the cues emitted by one person are detected by the other person and how they lead to an opinion about the first volunteer’s personality.

While encouraging psychologists to look at positive aspects of social interactions, neither researcher harbors any illusions that what the two call the “reign of errors” in social psychology is about to end. They’d rather not add another mental mistake to the list.

The Hot Hand Gets an Assist

An irrational belief scores in basketball

Professional basketball players and coaches rarely pay much attention to psychology experiments. Yet they scoffed in 1985 after psychologist Thomas Gilovich of Cornell University and two colleagues published a report that claimed to debunk the popular belief that hoopsters who hit several shots in a row have a “hot hand” and are likely to score on their next shot, as well.

Over an entire season, individual members of the Philadelphia 76ers exhibited constant probabilities of making and flubbing their shots, regardless of whether the immediately preceding shots had been hits or misses, Gilovich’s team found. So, strings of successful shots don’t reflect a shooter’s hot hand.

Although belief in the hot hand is irrational, there may still be good reason to heed an illusory hot hand in a basketball game, says psychologist Bruce D. Burns of Michigan State University in East Lansing. Teams that preferentially distribute the ball to players on shooting streaks score more points than teams that don’t, Burns asserted in the May Cognitive Psychology.

Gilovich’s National Basketball Association (NBA) data show that scoring streaks occur more often among the best overall shooters, Burns notes. When players regard a string of scores by a teammate as a cue to set him up for more shots, they are favoring the strongest player.

Using a model of hot-hand behavior and computer simulations of basketball games based on the NBA data, Burns estimated that a professional team that follows the hot-hand rule scores one extra basket every seven or eight games. That’s a small advantage, but enough to make a difference over a season.
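The logic of that argument can be sketched with a toy simulation. The Python code below is not Burns's model and uses no NBA data; it assumes two hypothetical players whose chances of scoring are fixed at 60 percent and 40 percent, so there is no real hot hand. The function name `hit_rate` and the allocation rule (keep passing to whoever just scored, otherwise pick a shooter at random) are illustrative assumptions.

```python
import random


def hit_rate(shots, hit_probs, use_hot_hand_rule, seed=0):
    """Fraction of shots made by a team of players with fixed hit probabilities.

    With use_hot_hand_rule=True, the player who just scored shoots again;
    after a miss (or when the rule is off) the next shooter is chosen at random.
    """
    rng = random.Random(seed)
    shooter = rng.randrange(len(hit_probs))
    made = 0
    for _ in range(shots):
        hit = rng.random() < hit_probs[shooter]
        if hit:
            made += 1
        if use_hot_hand_rule and hit:
            continue  # keep feeding the player who just scored
        shooter = rng.randrange(len(hit_probs))
    return made / shots


if __name__ == "__main__":
    probs = [0.6, 0.4]  # hypothetical shooting percentages
    print("random passing:", hit_rate(200_000, probs, use_hot_hand_rule=False))
    print("hot-hand rule: ", hit_rate(200_000, probs, use_hot_hand_rule=True))
```

Under these assumptions the hot-hand rule steers about 60 percent of shots to the better shooter, which lifts the team's success rate from about 50 percent to about 52 percent even though each shot is independent of the last.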

Hot-hand beliefs probably boost team scoring to a greater extent in pick-up basketball games, where players know much less about their teammates’ shooting abilities than NBA pros do, Burns says.