Global neuro lab

Giant brain-training dataset attracts scientists

a-r-t-i-s-t/Getty Images, adapted by M. Atarod

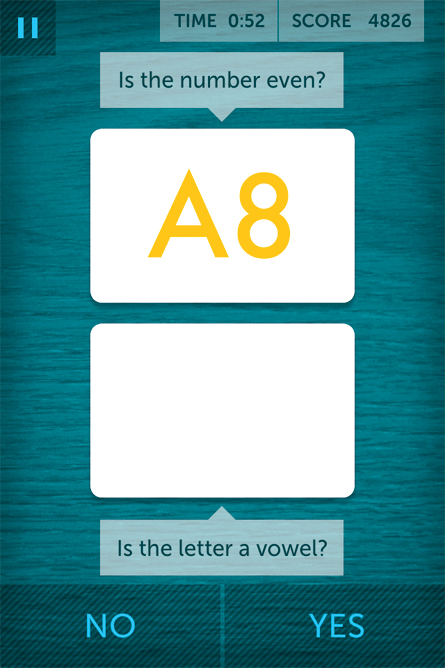

If you own a television, a computer or a smartphone, you may have seen ads for Lumosity, the brain-training regimen that promises to sharpen your wits and improve your life. Take the bait, and you’ll first create a profile that includes your age, how much sleep you get, the time of day you’re most productive and other minutiae about your life and habits. After this digital debriefing, you can settle in and start playing games designed to train simple cognitive skills like arithmetic, concentration and short-term recall.

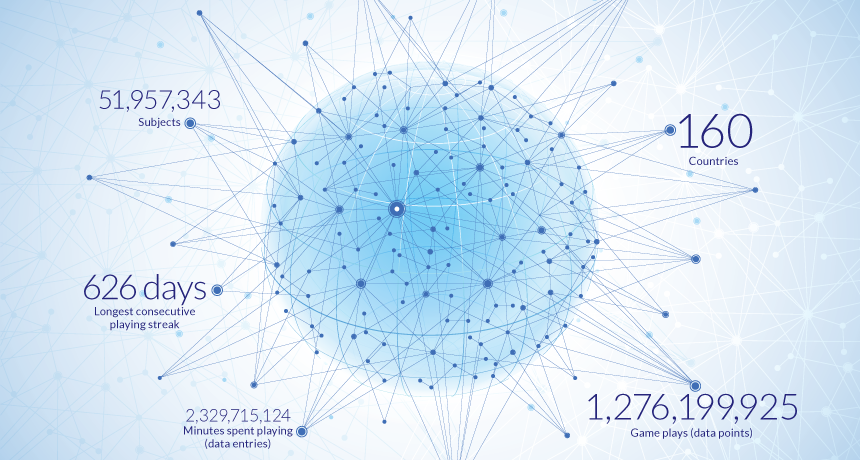

The 50 million people signed up for Lumosity presumably have done so because they want to improve their brains, and these games promise an easy, fun way to do that. The program also offers metrics, allowing users to chart their progress over weeks, months and years. Written in these personal digital ledgers are clues that might help people optimize their performance. With careful recordkeeping, for example, you might discover that you hit peak brainpower after precisely one-and-a-half cups of medium roast coffee at 10:34 a.m. on Tuesdays.

But you’re not the only one who has access to this information. With each click, your performance data will fly by Internet into the eager hands of scientists at Lumos Labs, the San Francisco company that created Lumosity.

Giant datasets like this one, created as a by-product of people paying money to learn about and improve themselves, will revolutionize research in human health and behavior, some scientists believe. Lumos Labs researchers hope that their brain-training data in particular could reveal deep truths about how the human mind works.

They believe that they have a nimble, customizable and cheap way to discover things about the brain that would otherwise take huge amounts of money and many years to unearth with standard lab-based studies. Other researchers have also taken note, and some have gotten permission to use Lumosity data in their own research. Some of these researchers are hunting for subtle signatures of Alzheimer’s in the data. Others are investigating more fundamental mysteries with cross-cultural studies of how the brain builds emotions and how memory works.

Data collected outside the confines of a pristine, sterile lab can be grubby, lacking the safeguards and quality control that traditional studies impose. And giant datasets can be unwieldy and opaque, burying true results under a mountain of irrelevant information. But even with these flaws, the promise is as big as the datasets themselves, says Anett Gyurak of Stanford University, who has just started studying Lumosity data. “It’s an incredible opportunity scientifically,” she says.

Thinking big

The first hint that Lumosity might provide an easy means to collect massive amounts of useful brain data came in 2006, when Lumos Labs cofounder Michael Scanlon and colleagues designed some prototype games for testing. A small test group of about 30 people played some games online and provided feedback and scores. After it was over, Scanlon realized that he had collected the testing data in a fraction of the time it would have taken to bring those people into a lab. “And this wouldn’t get any harder if there were 2,000 people instead,” Scanlon says.

Or 50 million people. With almost no extra work, the database can grow and grow.

Lumosity user data are primarily used internally by Lumos Labs scientists to refine the company’s product. But outside researchers can also get their hands on the data by submitting a proposal to the Human Cognition Project, Lumos Labs’ academic outreach arm. So far, most of these research projects have focused on whether the games can help people at risk for brain problems, such as certain cancer survivors, young people with psychotic symptoms and people who have suffered a stroke. But some projects focus not on the product’s potential benefits, but on what the data have to say about the brain.

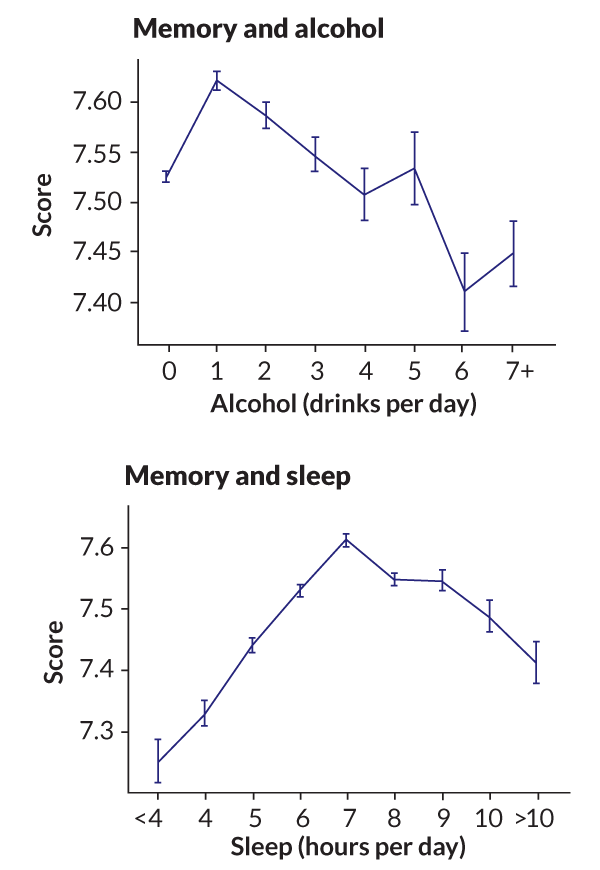

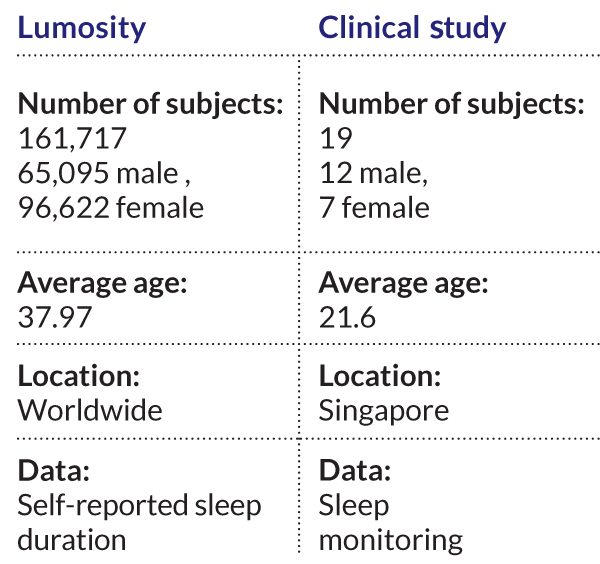

P. Murali Doraiswamy, a neuroscientist at Duke University, was one of the first scientists to see the potential in Lumosity’s dataset. He teamed up with Lumos Labs scientists to study the effects of sleep, alcohol and age on performance in three categories: working memory, spatial memory and quick arithmetic. For each task, Doraiswamy and colleagues analyzed the performance of over 120,000 people who had also reported how much sleep they get and how much alcohol they drink.

These results don’t mean that good sleep and moderate alcohol make you smarter. Lots of other associations — like the fact that casual drinkers might have richer social lives than both teetotalers and heavy drinkers — might be causing the effect. But the scientists hope these findings will inspire others to dive into the data to sort some of those things out. In future studies, Doraiswamy plans to measure people’s Lumosity performance before and after surgery to see whether he can spot cognitive decline after anesthesia.

Michael Weiner, an Alzheimer’s researcher at the University of California, San Francisco, has just begun combing through Lumosity data to look for subtle signs of Alzheimer’s. He and his colleagues plan on following people’s game performance over time, looking for signs of cognitive decline that might serve as harbingers of approaching Alzheimer’s. If the team is successful and game performance does serve as a marker of decline, then the games could be used diagnostically. “You could imagine your doctor might say ‘Four times a year, you should go to this website and play these games,’ and we’ll be using this to determine how your brain is functioning,” Weiner says.

Large, cheap datasets may ultimately change the way Alzheimer’s research is done, he says. Right now, the problem isn’t a lack of ideas. The problem is that there’s no money to test them. “It’s tens of millions of dollars for one clinical trial,” Weiner says. Lumosity’s data aren’t collected as carefully as they would be in a clinical trial. “It’s not the same pristine quality,” he says. “But the beauty is there’s so much of it. And it’s free.”

It’s free, and it comes from everywhere. Users span the globe, residing in 160 countries at the last tally. In contrast, the typical neuroscience study conducted in a lab enrolls a handful of local college students. Such an international community might reveal regional brain quirks among people who live in different states or countries.

Preliminary results from Bradley Voytek of the University of California, San Diego suggest that people who live in countries with high rates of traffic fatalities are more distractible, as measured by a particular Lumosity game that requires people to tune out distracting birds. “We have no idea what the underlying cause is,” he says. “We don’t know if it’s education or nutrition or whatever. But we find that more people per capita are likely to die in fatal car accidents if people in their state or country are more distractible. And it’s measured by this really simple game.”

These results may come from a game, but they are far from frivolous. Road laws could be changed in states or countries at risk in a way that strengthens safety measures. And it’s possible that distractibility can be trained away: First-person shooter games improve players’ ability to focus on visual information, Voytek says.

“In theory, we’re trying to find global general principles. How do people behave?” Voytek says. “But we’re basing these global inferences on a very biased group of people.”

For example, research has shown that people tend to have similar limits to working memory, the ability to hold a certain number of things in mind simultaneously. With the Lumosity dataset, Voytek plans to look for geographical differences in those limits.

Of course, datasets this large come with challenges. While the dataset spans the globe and boasts impressive numbers, it comes with its own selection biases. “The people who come to our site are people interested in cognitive training,” says Lumos Labs research scientist Daniel Sternberg. People not interested in brain training and people with less money to burn aren’t well represented in the dataset.

Doraiswamy counters that all studies succumb to this problem. Lab studies also tend to enroll relatively well-off people who are interested in neuroscience, he says. “There’s always been a selection bias in research,” he says.

For the Lumosity tests, the experimental setting is far from controlled. A person could be playing the game on a noisy train or after an all-nighter. The Lumosity subscriber’s 8-year-old sister could be taking a turn. The data are inherently messy. But because the dataset is so big, much of this noise averages out, Doraiswamy says.
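The idea that noise averages out in a big dataset can be illustrated with a small simulation. The sketch below is not Lumos Labs’ analysis; it assumes a made-up game whose “true” average score is 100 and whose individual scores are scattered by random noise with a standard deviation of 15. The function names and every number except the three sample sizes, which echo figures mentioned in this story, are hypothetical.

```python
import random
import statistics


def standard_error(n_players, noise_sd=15.0):
    """Expected spread of the average score: noise_sd / sqrt(n_players)."""
    return noise_sd / n_players ** 0.5


def mean_score_error(n_players, true_score=100.0, noise_sd=15.0, seed=0):
    """Simulate n_players noisy scores and return how far their
    average lands from the (hypothetical) true score."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    scores = [rng.gauss(true_score, noise_sd) for _ in range(n_players)]
    return abs(statistics.fmean(scores) - true_score)


if __name__ == "__main__":
    # Sample sizes taken from the story: a 30-person pilot,
    # a 2,000-person test and a 120,000-person analysis.
    for n in (30, 2_000, 120_000):
        print(n, round(standard_error(n), 3), round(mean_score_error(n), 3))
```

Under these assumptions, the expected error of the average shrinks with the square root of the number of players, so going from 30 people to 120,000 cuts it by a factor of about 63. Averaging only tames random scatter, though. It does nothing for a systematic tilt such as the selection bias described above.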

The Lumosity dataset is just starting to find its voice, but in some ways, that voice lacks depth. The only thing Lumosity knows is what people enter on a screen. There’s no brain scanning, no genetic information, no face-to-face assessment.

Incorporating brain scans and genetic testing into datasets with millions of participants remains a far-off dream. But a few efforts have managed to assemble more extensive collections with thousands of people that are already proving useful.

A company called Brain Resource has collected cognitive questionnaires and tests, EEG results, multiple types of brain scans and genomic data on about 5,000 people. The National Institutes of Health is also funding a similar dataset that will be available to researchers and currently has a huge range of data on 1,500 subjects. “It’s one of the richest, most detailed datasets that’s available,” says Bob Bilder, a UCLA researcher who is leading the NIH-funded effort.

Brain Resource is collaborating with scientists to figure out which people with depression will respond best to which antidepressants. “That is huge,” says Alvaro Fernandez, CEO of the neuroscience analytics company SharpBrains in San Francisco. “Right now, we’re wasting billions of dollars on medications that people don’t respond to, and the process is trial and error.” The hope is that some reliable combination of the 165 traits they test, such as certain kinds of brain activity and memory function, could reveal who needs which drugs. In July, Brain Resource submitted a proposal to the U.S. Food and Drug Administration for a proprietary 30-minute cognitive test of memory, attention and emotion that can predict which of three common antidepressants would be most effective. The company is working on a similar tool to predict responses to drugs that treat attention-deficit/hyperactivity disorder.

These approaches still rely on people coming into the lab, but the march of ever-advancing technology might change even that. The Australian neuroengineering company Emotiv has designed a cheap headset that can unobtrusively and accurately record brain waves as people go about their business. The company says its goal is to democratize brain research by putting brain tools in the hands of the people. On September 16, Emotiv wrapped up a Kickstarter campaign that raised over a million dollars to design a better EEG machine. “The technology is not only escaping the lab,” Fernandez says, “it’s already way out there in the world. People are devouring these things.”

The ideal situation is one in which multiple lines of evidence are all combined, offering a holistic view of a person’s health, Doraiswamy and others say. Merging genetic data, cognitive performance, medication and health histories, daily activity logs and symptoms for millions of people would lead to an amazingly rich health resource.

“If all 30 million people with Alzheimer’s in the world were on a database, I could instantly give you statistics,” Doraiswamy says. “Are rates of Alzheimer’s increasing or decreasing? What is the rate of depression? What are the medications that people are reporting as most helpful? Least helpful? How many people are available this month to sign up for a research study?”

We are not there yet, but Doraiswamy’s vision could be made real. We already live in a world where much of what we do is tracked, charted and analyzed. Our digital lives reveal where we shop, who we talk to, what songs we like and how we invest. As new biometric apps emerge that can measure the heart’s electrical rhythms, analyze sleep patterns and parse a person’s genetic code, their data could be merged with databases like the one at Lumos Labs to create unimaginably powerful tools for discovery.