Random numbers are a precious commodity, whether expressed as strings of decimal digits or simply 1s and 0s. Computer scientist George Marsaglia of Florida State University, however, likes giving them away.

Marsaglia has spent most of his career producing and testing random numbers. He published his first paper on the topic more than 40 years ago. Since then, Marsaglia and his collaborators have identified a variety of flaws in computer-based random number generators, invented more robust versions of existing generators, and developed a suite of rigorous tests to check for randomness.

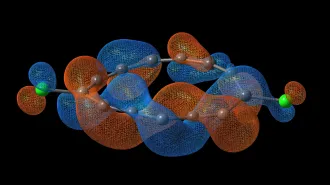

Scientists, engineers, and others use random numbers for tackling a wide range of problems, from modeling molecular behavior and sampling opinion to solving certain equations and testing the efficiency of algorithms.

Random numbers are also used in computer graphics for rendering realistic-looking images, such as mottled surfaces and other textures. They play a crucial role in a wide variety of games, including electronic versions of slot machines, lotteries, and other forms of gambling. Indeed, the world of gambling inspired use of the term “Monte Carlo method” to describe any algorithm that employs random numbers.

A century ago, researchers who needed random numbers in their scientific work actually tossed coins, rolled dice, dealt cards, or picked numbered balls out of urns. They also combed census records, directories, and other lists to glean the digits they required.

In the early 1900s, L.H.C. Tippett, a member of the Biometric Laboratory at University College in London, went to the trouble of compiling a table of 10,000 random numbers, obtained by selecting 40,000 single digits from census records giving the measured areas of parishes in England. He did it as a service to others who needed a convenient source of random numbers.

Nearly 30 years later, experts at the RAND Corporation used a special machine—in effect, an electronic roulette wheel—to generate random bits, which were then converted into a table of a million random decimal digits and published as a book. To remove slight biases uncovered in the course of rigorous, intensive testing and attributed to faulty wiring, the digits were further randomized by adding all pairs and retaining only the final digit.

Shortly after the introduction of computers in the late 1940s, researchers began to search for efficient ways of obtaining random numbers to use in computer programs. They developed random-number generators that scramble the bits of a given number in such a way that the digits of the scrambled result appear to have no connection with any previously generated numbers.

In actuality, however, the numbers in the resulting sequences typically don’t meet all the criteria to establish randomness. Patterns often remain because the computer follows a set procedure to generate the numbers, and restarting the process produces the same sequence. Eventually, the sequences themselves begin repeating. It’s all quite deterministic.

During the 1990s, in a project funded by the National Science Foundation, Marsaglia packed his hard-won expertise onto a CD-ROM to create a catalog of random bits for the information age.

Marsaglia’s primary purpose was to provide a source of what he termed “unassailable random numbers” for serious Monte Carlo studies and simulations, which often require billions of such numbers. In smaller doses, these apparently patternless strings of bits are available to provide reliable and verifiable random choices in designing experiments and clinical trials, for running sample surveys and choosing jury panels, and in other applications of a statistical nature.

The Marsaglia Random Number CD-ROM contained some 5 billion random bits, divided into 60 10-megabyte files, which could be read 1, 8, 16, or more bits at a time, depending on the application. The random bits were made by combining three sources of electronic white noise with the output from the best of the latest crop of deterministic random number generators.

The result of combining a truly random string with any other bit string—no matter how patterned—is still random. So Marsaglia also mixed digital tracks from rap and classical music and even a few digitized pictures into some of the 10-megabyte files on the CD-ROM.

Both the unadulterated and the mixed files passed Marsaglia’s “Diehard” battery of randomness tests, which was also on the disk (along with text files explaining the theory underlying the tests and the random number generators used to create the random bits).

“They seem to pass all tests I have put to them—and I have some very stringent tests,” Marsaglia says.

Loosely speaking, any number that belongs to a sequence of random digits is a member of that string by chance. Ideally, it has no connection with any other number in the sequence, and each number has a specified probability of falling in a given range. If the probability distribution is uniform, you would expect each of the 10 digits from 0 to 9 to occur about one-tenth of the time.

For binary digits, 1s and 0s should come up just about equally often in the long run. It’s also useful to check whether the four pairs of digits 00, 01, 10, and 11 each come up roughly 25 percent of the time, and whether the eight triples 000, 001, . . . 111 each appear about 12.5 percent of the time. There are equivalent checks for larger groups of bits and for various groupings.

Such traditional tests, however, aren’t sufficient to guarantee a reasonable degree of randomness. Over the years, Marsaglia has developed additional tests to weed out hidden patterns.

For example, in one of his Diehard tests he considers a string of random bits as a succession of overlapping 20-letter “words,” made up of the “letters” 1 and 0. The first word starts with the first bit of the string, the second word with the second bit, and so on.

Given that there are 220 possible 20-letter words, the test involves examining the first 2 million overlapping words derived from the random string to count how many of the possible words appear in the sequence. For a truly random sequence, the number of missing words should be very close to 142,000, according to probability theory.

Marsaglia calls this a “monkey test,” based on the proverbial monkey sitting at a keyboard and randomly hitting keys. “It’s one of my most stringent tests,” he says. “No commercial electronic generator of random bits that I know of can pass this test.” Interestingly, generators that rely on electronic noise to produce random digits also fail.

The playful names of other tests hint at alternative strategies for rooting out patterns in sequences purported to be random: the birthday-spacing test, the count-the-1s test, the parking-lot test, the minimum-distance test, the overlapping-sums test, and the craps test.

Checking for randomness is difficult partly because it’s the nature of randomness that anything can happen. Ironically, if a generator produces sequences of random numbers that always pass a given test, then it’s probably flawed!

As mathematician Robert R. Coveyou of Oak Ridge National Laboratory once put it, “The generation of random numbers is too important to be left to chance.”

Originally posted: May 4, 1996

Updated: Oct. 16, 2004