Cloudy Crystal Balls

Computer models may never be able to predict climate accurately


Climate models may never produce predictions that agree with one another, even with dramatic improvements in their ability to imitate the physics and chemistry of the atmosphere and oceans. That’s the conclusion of a report by James McWilliams, an applied mathematician and earth scientist at the University of California, Los Angeles. The mathematics of complex models guarantees that they will differ from one another, he argues. Therefore, says McWilliams, climate modelers need to change their approach to making predictions.

All climate models predict that the Earth will continue to warm, but when pressed for more detailed information, they rarely agree. Even the best predictions differ from one another by 10 to 20 percent or more. For some phenomena, the disagreement is even more dramatic. Climate models disagree, for instance, on whether dry spells will, on average, lengthen or shorten.

A more basic test of a model's reliability than its agreement with other models is its ability to reproduce past climate patterns. Here, too, the models fall short. They can reproduce some climate trends fairly closely, but each model has its own inaccuracies. One model might reproduce temperature very well, for instance, but do a poor job with precipitation patterns.

Although climate models have improved enormously in recent years and have grown more sophisticated, the discrepancies among their predictions remain as wide as ever. Some of those differences reflect disagreement among researchers over the science that goes into the models, but even models that purport to depict climate in essentially the same way do not generate precisely the same outcomes from the same starting point. “This is to be understood as an inherent limitation of models of this class on a question of this type, rather than a measure of the immaturity or inaccuracy of the models,” McWilliams says.

The issue is similar to the famous “butterfly effect,” but at a different level. In 1972, the mathematician and meteorologist Edward Lorenz commented that a butterfly flapping its wings in Brazil could set off a tornado in Texas. The image encapsulates the notion that in chaotic systems like the weather, tiny differences in initial conditions can lead to dramatically different outcomes.

That phenomenon is well known in weather forecasting, and it explains why even the best weather forecasts are useless beyond a week or two. In forecasting climate, though, the butterfly effect plays a smaller role, because the unpredictable fluctuations tend to cancel one another out when averaged over a year or a decade.
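The contrast between weather and climate can be seen in a toy system. The sketch below uses the three-variable convection model Edward Lorenz published in 1963, with its classic parameter values (σ = 10, ρ = 28, β = 8/3) and a fixed-step Runge-Kutta integrator; the starting state, step size and run lengths are assumptions chosen for illustration. Two runs start with states that differ by one part in a billion. Moment to moment they soon bear no resemblance to each other (the "weather"), yet their long-run averages of the variable z (a stand-in for "climate") come out close. It is a three-variable toy, not a climate model.

```python
"""Weather vs. climate in the Lorenz (1963) toy system: individual
trajectories diverge, but their long-run averages stay close."""


def lorenz(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    # Right-hand side of the Lorenz equations for state = (x, y, z).
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    # One classical fourth-order Runge-Kutta step of size dt.
    k1 = lorenz(state)
    k2 = lorenz(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = lorenz(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = lorenz(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def compare_runs(perturbation=1e-9, dt=0.01, spinup=3000, steps=50000):
    """Integrate two runs whose starting x differs by `perturbation`.

    Returns the largest distance between the two runs during the
    averaging window, plus each run's time-averaged z over that window."""
    a = (1.0, 1.0, 1.0)
    b = (1.0 + perturbation, 1.0, 1.0)
    # Spin-up: let both runs settle onto the attractor before averaging.
    for _ in range(spinup):
        a, b = rk4_step(a, dt), rk4_step(b, dt)
    sum_za = sum_zb = 0.0
    max_sep = 0.0
    for _ in range(steps):
        a, b = rk4_step(a, dt), rk4_step(b, dt)
        sum_za += a[2]
        sum_zb += b[2]
        sep = sum((p - q) ** 2 for p, q in zip(a, b)) ** 0.5
        max_sep = max(max_sep, sep)
    return max_sep, sum_za / steps, sum_zb / steps


if __name__ == "__main__":
    max_sep, za, zb = compare_runs()
    print(f"largest separation between runs: {max_sep:.1f}")
    print(f"time-averaged z: run A {za:.2f}, run B {zb:.2f}")
```

The largest separation ends up comparable to the size of the attractor itself, while the two averages differ far less. Averaging over a longer window generally brings them closer still.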

McWilliams argues, however, that climate models are subject to a similar chaotic effect on a different level. Slight variations in the way that physical effects are approximated and calculated, rather than variations in the initial conditions, can lead to very different future scenarios. This phenomenon is called “structural instability.” If climate models are indeed inherently structurally unstable, then two careful simulations of the atmosphere and the oceans that differ only slightly in how they approximate the physics will nearly always generate predictions that differ substantially. In that case, it’s unlikely that climate prediction models will come to agree with one another over time.
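A loose analogy for structural instability can be built from the logistic map, x_next = r * x * (1 - x), a one-line textbook model rather than anything resembling a climate model. In the sketch below, the two values of r (3.80 and 3.83, chosen for illustration) differ by less than 1 percent. At r = 3.83, which lies inside the map's well-known period-3 window, the long-run behavior settles into a repeating cycle of three states; at r = 3.80 it wanders chaotically over a wide band of values. Here the change is to a single parameter, whereas McWilliams's argument concerns differences in how physical processes are approximated, which this toy only gestures at.

```python
"""Toy illustration of structural instability: a tiny change to how the
model is built changes its long-run behavior qualitatively."""


def long_run_states(r, x0=0.2, transient=10_000, samples=2_000, digits=6):
    # Iterate the logistic map x -> r * x * (1 - x), discard the transient,
    # then collect the distinct states visited (rounded to `digits` places).
    x = x0
    for _ in range(transient):
        x = r * x * (1.0 - x)
    visited = set()
    for _ in range(samples):
        x = r * x * (1.0 - x)
        visited.add(round(x, digits))
    return visited


if __name__ == "__main__":
    for r in (3.80, 3.83):
        states = long_run_states(r)
        print(f"r = {r:.2f}: {len(states)} distinct long-run states, "
              f"range {min(states):.3f} to {max(states):.3f}")
```

Any statistic computed from the long run, such as the average value of x or its spread, therefore shifts abruptly between the two versions of the "model," even though the versions look almost identical on paper.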

McWilliams cannot prove that climate models are structurally unstable, but he argues in the May 22 Proceedings of the National Academy of Sciences that the evidence points in that direction. “Even though we don’t have a set of all possible reasonable models that we or our children might make,” McWilliams says, “we can begin to see that that set will not converge to an exact answer, and the climate forecasts are not likely to come to significantly greater mutual agreement as we go forward into an era of climate change.”

Even so, climate models produce critically valuable information. Models have brought about major improvements in scientists’ understanding of the dynamics of climate. Furthermore, McWilliams says that discrepancies among models do not undermine the most crucial conclusion of climate modeling—the notion that increased levels of greenhouse gases emitted by people are causing the Earth to warm and will continue to do so. He notes that every credible climate model ever made has pointed to that same conclusion. “All sorts of smart climate scientists have tried to produce a model that doesn’t show future warming,” he says, “and no one has been able to in a credible way.”

McWilliams argues, however, that climate modelers need to change their approach to generating quantitative predictions. “The practical implication is that people shouldn’t expect or aspire to model perfection,” he says. Instead, modelers should explore the range of possible behaviors that climate models can have by systematically varying the way the models are built to see the full range of predictions they might make.

In this vision, researchers would not simply generate a single number to predict, say, the average global temperature that would result from a doubling of carbon dioxide levels in the atmosphere. Rather, an ensemble of thousands of model variants would assign probabilities to a wide range of possible temperatures.
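The kind of output this vision calls for can be sketched with a deliberately crude stand-in. In a zero-dimensional energy-balance approximation, equilibrium warming is ΔT = F / λ, where F is the extra radiative forcing from doubled carbon dioxide (commonly put at about 3.7 W/m²) and λ is a feedback parameter that lumps together how the rest of the model is built. In the sketch below, each ensemble member is such a toy model with its own λ, drawn from a normal distribution whose mean and spread are hypothetical values picked for illustration, not estimates from any study. The functions `ensemble_warming` and `probabilities` are likewise hypothetical names for this example.

```python
"""Hypothetical sketch: an ensemble of toy models yields probabilities
for ranges of warming instead of a single number."""
import random

F_2X = 3.7  # approximate radiative forcing from doubled CO2, in W/m^2


def ensemble_warming(n_members=10_000, lam_mean=1.3, lam_sd=0.4, seed=1):
    # Each member is a zero-dimensional energy-balance toy with its own
    # feedback parameter lam (W/m^2 per K); lam_mean and lam_sd are
    # hypothetical. Members with lam <= 0.2 are discarded because the toy
    # would give runaway or absurdly large warming for them.
    rng = random.Random(seed)
    warming = []
    while len(warming) < n_members:
        lam = rng.gauss(lam_mean, lam_sd)
        if lam > 0.2:
            warming.append(F_2X / lam)
    return warming


def probabilities(values, bin_edges):
    # Fraction of ensemble members falling in each [lo, hi) bin.
    counts = [0] * (len(bin_edges) - 1)
    for v in values:
        for i in range(len(counts)):
            if bin_edges[i] <= v < bin_edges[i + 1]:
                counts[i] += 1
                break
    return [c / len(values) for c in counts]


if __name__ == "__main__":
    edges = [0.0, 2.0, 3.0, 4.0, 5.0, 8.0, float("inf")]
    warming = ensemble_warming()
    for lo, hi, p in zip(edges, edges[1:], probabilities(warming, edges)):
        print(f"{lo:g} to {hi:g} °C: {p:.1%}")
```

Because warming varies as 1/λ in this toy, a symmetric spread in λ becomes a lopsided spread in warming, with a long tail toward large values. That tail is visible only when the whole ensemble, rather than a single best guess, is examined.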

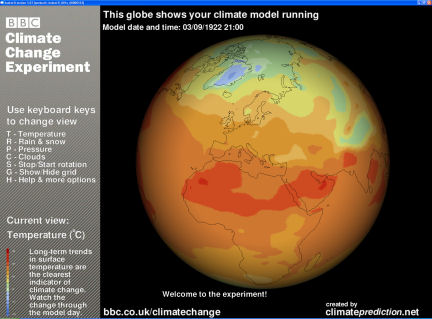

Some researchers have already begun acting on this vision. A group at the University of Oxford in England runs a project called Climateprediction.net, which uses the computing power of volunteers around the world to run about 150,000 variations on a climate model that the researchers have developed. Their first round of results showed that variations on a single model can produce a much broader range of possible future warming than earlier modeling had suggested. Under some plausible versions of the Oxford group’s model, global temperature could rise by as much as 11°C if carbon dioxide levels were to double, far more than the 2°C to 5°C rise predicted by the Intergovernmental Panel on Climate Change. Such an extreme outcome is quite unlikely, however.

Still, McWilliams’ argument is controversial in the modeling community. Reto Knutti, a climate modeler at the University of Bern in Switzerland, says that he expects models to produce increasingly similar answers over time. He argues that the spread looks wider than it otherwise would because several models developed in the last five to ten years are not yet as sophisticated as older models that have been refined over decades.

But McWilliams says the modeling community needs to grapple with this issue now to make sure that models are capable of answering the kinds of questions being asked of them. He compares his argument to Kurt Gödel’s proof that, in any consistent formal system rich enough to express arithmetic, some statements can be neither proved nor disproved. Gödel’s theorem, says McWilliams, “is understood as a strong cautionary result about making sure that you’re asking the right questions before you exhaust yourself trying to answer them.”
