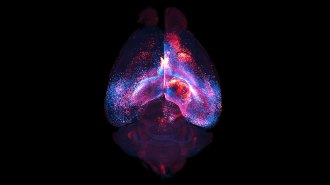

Imaging scans show where symbols turn to letters in the brain

A functional MRI study maps the path symbols take as they gain meaning and sound

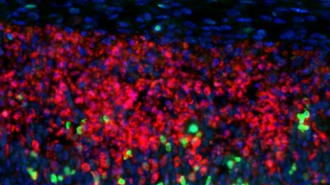

READING MINDS The brain activity of people learning to read reflects the journey letters take as they gain meaning and sound in the mind.

Seb_ra/iStock/Getty Images Plus