Radiometric dating puts pieces of the past in context. Here’s how

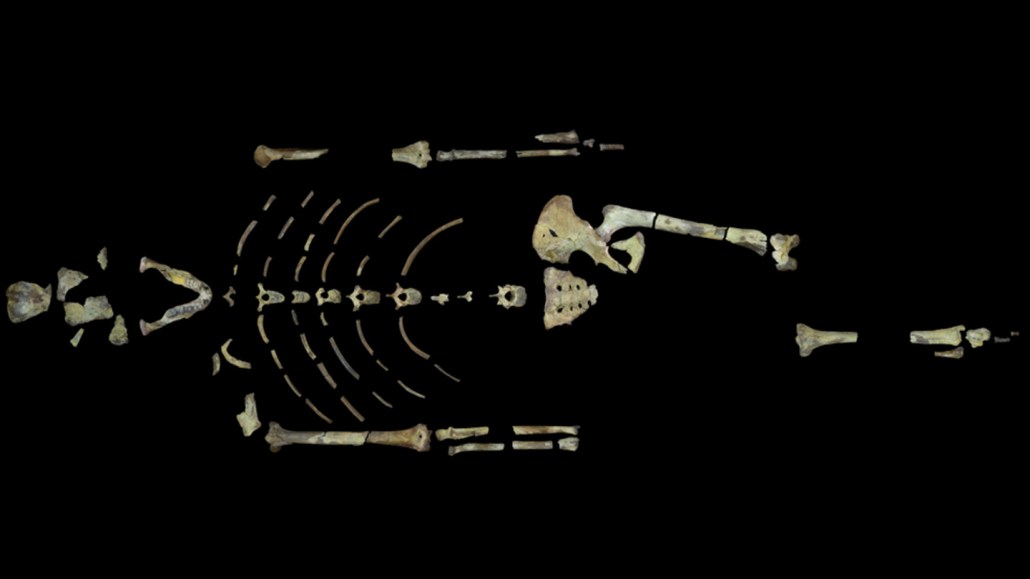

The famous skeleton Lucy is too old for radiocarbon dating. But using argon-argon dating on tiny crystals in layers of volcanic ash sandwiching the sediments where Lucy was found, researchers have put the fossils at 3.18 million years old.

John Kappelman/University of Texas at Austin (CC BY)

When a researcher picks up an object — whether it’s a scrap of leather from a dig site, a fossil from a museum drawer or a newly fallen meteorite — their first question might be, “What is this thing?” A natural follow-up: “How old is it?” The first question is fundamental, no doubt. But the second is powerful, too. It helps place the object in its proper archaeological, geologic or cosmological context. “Without knowing the ages of things, there is no narrative,” says Rick Potts, a paleoanthropologist at the Smithsonian’s National Museum of Natural History in Washington, D.C.

Up until a century or so ago, researchers studying rocks and the fossils they contain could answer the age question only vaguely, if at all. Using guidelines established by geologists in the 1600s, they could gauge a rock’s age only in relative terms: For example, Sample A was considered older than Sample B if it came from a lower and presumed older layer of sediment or rock. But Earth is a dynamic place. Missing layers, as well as disturbances from earthquakes, landslides or other upheavals, meant even relative ages for rocks could be difficult to determine. The same was true for the bones, tools and other artifacts within the earth: Previous excavations, or even the day-to-day activities of a site’s ancient residents, could churn the soil and thus disrupt the layers.

To celebrate our 100th anniversary, we’re highlighting some of the biggest advances in science over the last century. For more from the series, visit Century of Science.

The discovery of radioactivity in the mid-1890s paved the way for scientists to ascertain the absolute ages of some objects, says Doug Macdougall, a geochemist formerly at the Scripps Institution of Oceanography and the author of Nature’s Clocks. Within less than a decade, he notes, several physicists had proposed methods for doing so. The methods are based on the finding that each type, or isotope, of a radioactive atom has its own particular half-life — the time that it takes for one-half of the atoms in a sample to decay. Because radioactive decay occurs in the nucleus of the atom, half-life doesn’t change with environmental conditions, from the hellish heat and crushing pressures deep inside Earth to the frigid realm of the far solar system. That makes radioactive isotopes wonderful clocks.

Today, radiometric dating spans the ages, from recent times to the birth of our solar system. Carbon-14 dating is most suited to something that lived during the last 50,000 years or something made from such organisms — the wooden shafts of arrows, the leather in a moccasin or the plant fibers used to weave fabrics or baskets. Longer-lived isotopes of uranium and thorium can help peer deep into Earth’s past — back to when our planet’s first rocks were forming, or even further, to when our solar system was coalescing from gas and dust.

There are several different methods for estimating ages using half-lives, Macdougall explains. For isotopes with relatively rapid decay rates, researchers determine the proportion of a radioactive isotope relative to other atoms of the same element and compare it with how much of that isotope a fresh sample would be expected to have. With that information, along with the known half-life, it’s possible to estimate the age of the original sample.

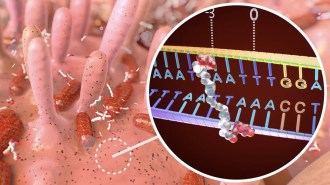

This approach works well for carbon-14, possibly one of the most familiar isotopes used in radiometric dating. While a plant or animal is alive, it takes in carbon from the environment. But when the organism dies, that intake stops. Because carbon-14 is created high in Earth’s atmosphere at a fairly constant rate, scientists can readily estimate the amount of that isotope that should be present in a living organism.

Carbon-14 has a half-life of about 5,730 years — which means that 5,730 years after an organism dies, half of the isotope present in the original sample will have decayed. After another 5,730 years, half of the carbon-14 that remained has decayed (leaving one-fourth of the amount from the original sample). Eventually, after 50,000 years or so (or almost nine half-lives), so little carbon-14 remains that the sample can’t be reliably dated.
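The half-life arithmetic above can be sketched in a few lines of Python. This is only an illustration, not a laboratory procedure: it assumes the starting amount of carbon-14 is known exactly and ignores the calibration corrections that real radiocarbon labs apply. The function names are hypothetical, chosen for this example.

```python
import math

C14_HALF_LIFE_YEARS = 5730.0  # half-life quoted in the article


def fraction_remaining(age_years, half_life_years=C14_HALF_LIFE_YEARS):
    """Fraction of the original isotope still present after age_years."""
    # Each elapsed half-life cuts the remaining amount in half.
    return 0.5 ** (age_years / half_life_years)


def age_from_fraction(fraction, half_life_years=C14_HALF_LIFE_YEARS):
    """Age implied by the fraction of the original isotope that remains."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be greater than 0 and at most 1")
    # Number of halvings needed to reach this fraction, times the half-life.
    return half_life_years * math.log2(1.0 / fraction)


if __name__ == "__main__":
    # One-fourth remaining corresponds to two half-lives.
    print(age_from_fraction(0.25))
    # Fraction left after 50,000 years, near the practical limit of the method.
    print(fraction_remaining(50_000))
```

Under these simplifying assumptions, a sample with one-fourth of its original carbon-14 comes out at two half-lives, or 11,460 years, and after 50,000 years only about a quarter of one percent of the isotope is left, which is why older samples are so hard to date this way.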

Besides carbon-14, this technique can be used for short-lived isotopes of sulfur, silicon, phosphorus and beryllium, Macdougall says.

Another method is more suitable for isotopes with long half-lives (and therefore slow rates of decay), Macdougall says. In this approach, scientists measure the amount of a particular isotope in a sample and then compare that with the amounts of various “daughter products” that form as the isotope decays. By taking the ratios of those amounts — or even the ratios of amounts of daughter products alone — and then “running the clock backward,” researchers can estimate when radioactive decay first began (that is, when the object formed).
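“Running the clock backward” can be illustrated with a small Python sketch. It rests on two simplifying assumptions that are not from the article: the sample contained none of the daughter product when it formed, and neither parent nor daughter atoms have been gained or lost since. The function name and the half-life used in the example are hypothetical placeholders, not values for any real isotope.

```python
import math


def age_from_daughter_parent_ratio(daughter_per_parent, half_life_years):
    """Estimate age from the ratio of daughter atoms to remaining parent atoms.

    Assumes no daughter atoms were present at formation and that the
    sample has stayed a closed system ever since.
    """
    if daughter_per_parent < 0:
        raise ValueError("ratio cannot be negative")
    if half_life_years <= 0:
        raise ValueError("half-life must be positive")
    # Decay constant: the fraction of parent atoms decaying per year.
    decay_constant = math.log(2) / half_life_years
    # Parent remaining = parent_initial * exp(-decay_constant * t), and every
    # decayed parent became a daughter, so D/P = exp(decay_constant * t) - 1.
    return math.log1p(daughter_per_parent) / decay_constant


if __name__ == "__main__":
    HYPOTHETICAL_HALF_LIFE_YEARS = 1.0e9  # placeholder, not a real isotope
    # Equal numbers of daughter and parent atoms: one half-life has passed.
    print(age_from_daughter_parent_ratio(1.0, HYPOTHETICAL_HALF_LIFE_YEARS))
    # Three daughters per parent: two half-lives have passed.
    print(age_from_daughter_parent_ratio(3.0, HYPOTHETICAL_HALF_LIFE_YEARS))
```

If either assumption fails, for instance when heat drives the daughter product out of a crystal, the computed age will be off, which is the “reset” problem.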

Scientists still have to be careful. A radiometric clock can be “reset” if either the original isotope or its daughter products are lost to the environment. Robust crystals called zircons, for example, are long-lasting and present in many rocks. But extreme temperatures can drive lead, a daughter product of radioactive uranium and thorium, out of the crystal.

Despite the potential challenges, scientists have used radiometric dating to answer all sorts of questions. Researchers used lead-lead dating, which compares two lead isotopes that are each the end product of a different uranium isotope’s decay, to analyze an inclusion inside an ancient meteorite; in 2010, they reported that the tiny bleb was about 4.568 billion years old, making it one of the earliest building blocks of our solar system. The team used an aluminum-magnesium dating technique to confirm that great age. Others have used similar techniques to estimate the age of Earth’s oldest known rocks (about 4.4 billion years) and when plate tectonics might have begun (more than 4 billion years ago, according to one study).

And even though some of these techniques are estimating ages billions of years in the past, “they can do so with error bars of only 100,000 years or so,” says Marc Caffee, a physicist at Purdue University in West Lafayette, Ind. “I marvel at the precision these chronometers have,” he adds.

Dating techniques that rely on isotopes with half-lives measured in millions of years can be used to estimate long-term rates of erosion — to help gauge how quickly a canyon was carved, for example — or to infer the onset of glacial activity during recent ice ages.

At least half a dozen radiometric dating techniques can be applied to the last few million years when humans and our kin evolved, says Potts. For example, by using argon-argon dating to pin down the age of tiny crystals in ancient layers of volcanic ash — crystals that had formed during the eruptions themselves — researchers have estimated that the Australopithecus dubbed Lucy lived about 3.18 million years ago. Today’s archaeologists and paleontologists also benefit from another half dozen or so absolute dating techniques beyond radiometric approaches, expanding the types of materials that can be dated, Potts says.

Advances in techniques have let researchers analyze ever smaller samples. That, in turn, is less destructive of rare, or even one-of-a-kind, artifacts or fossils. Whereas researchers once had to destroy large samples of material to perform an analysis, “now we can date a single kernel of maize,” says Ryan Williams, an anthropological archaeologist at the Field Museum in Chicago.

Other advances, which have made radiometric dating techniques cheaper and more precise, send researchers back to the lab to reanalyze artifacts, says Suzanne Pilaar Birch, an archaeologist at the University of Georgia in Athens. And more samples and more precision yield more refined chronologies. By radiocarbon dating nearly 100 samples from a mountaintop site in southern Peru, for instance, Williams and his colleagues determined that the site was occupied for more than four centuries.

The results of all this dating, Pilaar Birch notes, are “changing our understanding of the past.”
