Artificial intelligence challenges what it means to be creative

This portrait, "Edmond de Belamy," sold in 2018 for $432,500. It was created by an algorithm.

TIMOTHY A. CLARY/AFP via Getty Images

When British artist Harold Cohen met his first computer in 1968, he wondered if the machine might help solve a mystery that had long puzzled him: How can we look at a drawing, a few little scribbles, and see a face? Five years later, he devised a robotic artist called AARON to explore this idea. He equipped it with basic rules for painting and for how body parts are represented in portraiture — and then set it loose making art.

Not far behind was the composer David Cope, who coined the phrase “musical intelligence” to describe his experiments with artificial intelligence–powered composition. Cope once told me that as early as the 1960s, it seemed to him “perfectly logical to do creative things with algorithms” rather than to painstakingly craft by hand every word of a story, note of a musical composition or brush stroke of a painting. He initially tinkered with algorithms on paper, then in 1981 moved to computers to help solve a case of composer’s block.

Cohen and Cope were among a handful of eccentrics pushing computers to go against their nature as cold, calculating things. The still-nascent field of AI had its focus set squarely on solid concepts like reasoning and planning, or on tasks like playing chess and checkers or solving mathematical problems. Most AI researchers balked at the notion of creative machines.

Slowly, however, as Cohen and Cope cranked out a stream of academic papers and books about their work, a field emerged around them: computational creativity. It included the study and development of autonomous creative systems, interactive tools that support human creativity and mathematical approaches to modeling human creativity. In the late 1990s, computational creativity became a formalized area of study with a growing cohort of researchers and eventually its own journal and annual event.

Soon enough — thanks to new techniques rooted in machine learning and artificial neural networks, in which connected computing nodes attempt to mirror the workings of the brain — creative AIs could absorb and internalize real-world data and identify patterns and rules that they could apply to their creations.

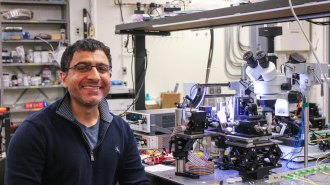

Computer scientist Simon Colton, then at Imperial College London and now at Queen Mary University of London and Monash University in Melbourne, Australia, spent much of the 2000s building the Painting Fool. The computer program analyzed the text of news articles and other written works to determine the sentiment and extract keywords. It then combined that analysis with an automated search of the photography website Flickr to help it generate painterly collages in the mood of the original article. Later the Painting Fool learned to paint portraits in real time of people it met through an attached camera, again applying its “mood” to the style of the portrait (or in some cases refusing to paint anything because it was in a bad mood).

Similarly, in the early 2010s, computational creativity turned to gaming. AI researcher and game designer Michael Cook dedicated his Ph.D. thesis and early research associate work at Goldsmiths, University of London, to creating ANGELINA, a system that made simple games based on news articles from The Guardian by combining text analysis of current affairs with hard-coded design and programming techniques.

During this era, Colton says, AIs began to look like creative artists in their own right — incorporating elements of creativity such as intentionality, skill, appreciation and imagination. But what followed was a focus on mimicry, along with controversy over what it means to be creative.

New techniques that excelled at classifying data to high degrees of precision through repeated analysis helped AI master existing creative styles. AI could now create works like those of classical composers, famous painters, novelists and more.

One AI-authored painting modeled on thousands of portraits painted between the 14th and 20th centuries sold for $432,500 at auction. In another case, study participants struggled to differentiate the musical phrases of Johann Sebastian Bach from those created by a computer program called Kulitta that had been trained on Bach’s compositions. Even IBM got in on the fun, tasking its Watson AI system with analyzing 9,000 recipes to devise its own cuisine ideas.

But many in the field, as well as onlookers, wondered if these AIs really showed creativity. Though sophisticated in their mimicry, these creative AIs seemed incapable of true innovation because they lacked the capacity to incorporate new influences from their environment. Colton and a colleague described them as requiring “much human intervention, supervision, and highly technical knowledge” in producing creative results. Overall, as composer and computer music researcher Palle Dahlstedt puts it, these AIs converged toward the mean, creating something typical of what is already out there, whereas creativity is supposed to diverge away from the typical.

In order to make the step to true creativity, Dahlstedt suggested, AI “would have to model the causes of the music, the conditions for its coming into being — not the results.”

True creativity is a quest for originality. It is a recombination of disparate ideas in new ways. It is unexpected solutions. It might be music or painting or dance, but also the flash of inspiration that helps lead to advances on the order of light bulbs and airplanes and the periodic table. In the view of many in the computational creativity field, it is not yet attainable by machines.

In just the past few years, creative AIs have expanded into style invention — into authorship that is individualized rather than imitative and that projects meaning and intentionality, even if none exists. For Colton, this element of intentionality — a focus on the process, more so than the final output — is key to achieving creativity. But he wonders whether meaning and authenticity are also essential, as the same poem could lead to vastly different interpretations if the reader knows it was written by a man versus a woman versus a machine.

If an AI lacks the self-awareness to reflect on its actions and experiences, and to communicate its creative intent, then is it truly creative? Or is the creativity still with the author who fed it data and directed it to act?

Ultimately, moving from an attempt at thinking machines to an attempt at creative machines may transform our understanding of ourselves. Seventy years ago, Alan Turing, sometimes described as the father of artificial intelligence, devised a test he called “the imitation game” to measure a machine’s intelligence against our own. “Turing’s greatest insight,” writes philosopher of technology Joel Parthemore of the University of Skövde in Sweden, “lay in seeing digital computers as a mirror by which the human mind could consider itself in ways that previously were not possible.”