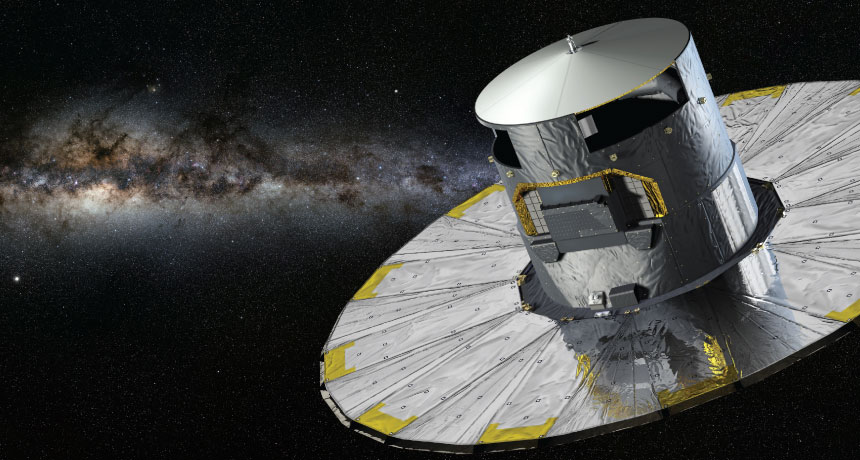

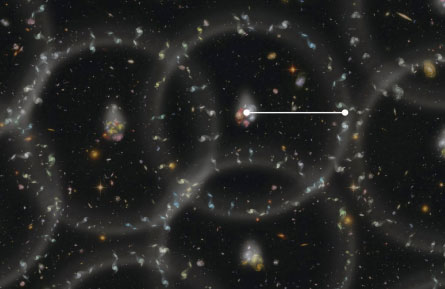

STAR TRACK The Gaia spacecraft (illustrated above) is collecting data on position and motion for about 1 billion stars in the galaxy. The data may help resolve controversy over the pace of the universe’s expansion.

Milky Way: Serge Brunier/ESO; Gaia: D. Ducros/ESA

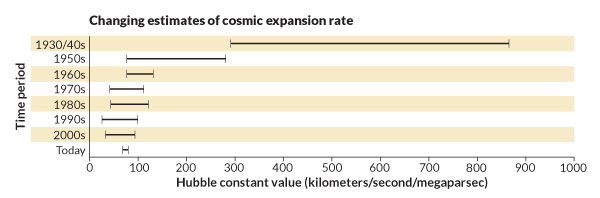

For as long as humans have wondered about it, the universe has concealed its vital statistics — its age, its weight, its size, its composition. By the opening of the 21st century, though, experts began trumpeting a new era of precision cosmology. No longer do cosmologists argue about whether the universe is 10 billion or 20 billion years old — it was born 13.8 billion years ago. Pie charts now depict a precise recipe for the different relative amounts of matter and energy in the cosmos. And astronomers recently reached agreement over just how fast the universe is growing, settling a controversy born back in 1929 when Edwin Hubble discovered that expansion.

Except now the smooth path to a precisely described cosmos has hit a bit of a snag. A new measurement of the speed of the universe’s expansion from the European Space Agency’s Planck satellite doesn’t match the best data from previous methods (SN: 4/20/13, p. 5). Just when all the pieces of the cosmic puzzle had appeared to fall into place, one piece suddenly doesn’t fit so perfectly anymore.

“Something doesn’t look quite right,” says astrophysicist David Spergel of Princeton University. “We can no longer so confidently go around making statements like all our datasets seem consistent.”

In other words, different ways of measuring the universe’s expansion rate — a number called the Hubble constant — no longer converge on one value. That calls into question the whole set of numbers describing the properties of the cosmos, known as the standard cosmological model. Accepting the new Hubble constant value means revising the recipe of ingredients that make up the universe, such as the dark matter hiding in space and the dark energy that accelerates the cosmic expansion.

Over the years, the Hubble constant’s value has been as elusive as it is important. Hubble himself badly overestimated the expansion speed, which depends on distance — the farther away two objects are, the faster space’s expansion pushes them apart. Hubble calculated that objects separated by a million parsecs (roughly 3 million light-years) would fly apart at 500 kilometers per second. At that rate, the universe would be, paradoxically, younger than the Earth.
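The arithmetic behind that paradox can be sketched in a few lines of Python. The sketch assumes, as a rough simplification, that the universe has always expanded at today's rate, so its age is about 1 divided by the Hubble constant (the so-called Hubble time). The function names and the comparison value of 70 are illustrative choices, not taken from any particular analysis.

```python
# Rough sketch: expansion speed and "Hubble time" for a given Hubble constant.
# Assumes a constant expansion rate, which real cosmological models do not.

KM_PER_MPC = 3.0857e19       # kilometers in one megaparsec
SECONDS_PER_YEAR = 3.156e7   # approximate seconds in one year


def recession_speed_km_s(h0, distance_mpc):
    """Speed at which two objects separate, given H0 in km/s/Mpc."""
    return h0 * distance_mpc


def hubble_time_gyr(h0):
    """Approximate age of the universe (billions of years) as 1 / H0."""
    seconds = KM_PER_MPC / h0
    return seconds / SECONDS_PER_YEAR / 1e9


# 500 is Hubble's original estimate; 70 is a ballpark value for comparison only.
for h0 in (500, 70):
    print(f"H0 = {h0}: speed at 1 Mpc = {recession_speed_km_s(h0, 1):.0f} km/s, "
          f"Hubble time ~ {hubble_time_gyr(h0):.1f} billion years")
```

With Hubble's figure of 500, the estimate comes out near 2 billion years, less than half of Earth's roughly 4.5-billion-year age.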

Refined measurements gradually reduced the estimate to a more realistic realm. By the 1970s, experts argued over whether the Hubble constant is closer to 100 or to 50. By the late 1990s, Hubble Space Telescope observations of supernovas and other data placed the expansion rate value in the 70s, eventually settling in at around 73 km/s/megaparsec.

Confidence in that value was enhanced by measurements of the radiation glow left over from the Big Bang, primarily by a satellite probe known as WMAP. Its value for the Hubble constant was about 70, close enough to 73 that the margins of error for the two values overlapped (SN: 3/15/08, p. 163).

But last year, the Planck satellite reported even more precise measurements of that glow — known as the cosmic microwave background radiation — implying a Hubble constant around 67. That was about 10 percent lower than the Hubble telescope value, a difference that most physicists found too big to ignore.

“We seem to be having some disagreement,” says Wendy Freedman of the Carnegie Observatories in Pasadena, Calif., and leader of the team that measured the expansion rate using the Hubble telescope.

An inconvenient discrepancy

In 2013, Planck satellite data suggested a slightly lower rate than other data sources for the expansion of the universe, prompting revisions in estimates of the composition of the cosmos. ScienceNews/YouTube

Freedman, Spergel and other experts expect that further refinements of the measurements will eventually resolve the conflict with no major repercussions. Nevertheless, the discrepancy was a constant topic of discussion in December at the Texas Symposium on Relativistic Astrophysics, held in Dallas. Krzysztof Górski of the Planck team acknowledged the disagreement during his talk at the symposium, but he noted that much of the Planck data has not yet been analyzed. “I think we should just stay calm and carry on,” he said.

It may just be that unknown problems in calibrating instruments afflict one or the other of the two methods. On the other hand, the chance remains that something might be wrong with science’s basic model of the universe.

“The latter possibility would be the most exciting,” says Spergel, “because it would point potentially to something about dark energy or some new physics that we could study.”

Already several speculative proposals have appeared offering novel ways to reduce or eliminate the disparity. Perhaps gravity itself has mass, leading the supernova method to overestimate the Hubble constant, one group proposes. Or maybe dark energy and dark matter, supposedly independent ingredients in the cosmic recipe, interact in a way that favors a Hubble constant higher than the Planck results suggest. And possibly an uneven distribution of matter in the universe means that the Hubble constant based on supernovas and other objects in the nearby (and therefore recent) universe won’t match the value derived from the cosmic microwave background, which dates to nearly the beginning of time.

Most experts are betting that more data will relieve the tension without the need for major cosmological surgery. And plenty more data are on the way. Planck’s report, for instance, was based on about 15 months of observations, corresponding to two sweeps of the sky. Eventually the Planck team will analyze 50 months’ worth of data, which should further reduce the margin of error when the analysis is released later this year.

Meanwhile, Freedman and colleagues with the Carnegie Hubble project have continued to refine the Hubble constant estimate. That endeavor combines data from the Hubble telescope, the Spitzer Space Telescope and ground-based telescopes to establish the cosmic distance scale.

Since the universe’s expansion rate depends on how far apart two objects are, measuring it depends on accurate knowledge of cosmic distances. Nearby, distances are calculated directly from parallax. Simple geometry can tell how far away a star is by viewing it from opposite sides of the Earth’s solar orbit. Farther out, distance measurements rely on “standard candles” — objects of known brightness whose distance can be inferred by how bright they appear.
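Both distance techniques come down to short formulas. As a minimal sketch: a star's distance in parsecs is 1 divided by its parallax angle in arcseconds (that is how the parsec is defined), and a standard candle's distance follows from the inverse-square law, under which light from a source four times as far away appears 16 times fainter. The function names here are hypothetical, and the sketch ignores real-world complications such as dust dimming the light.

```python
import math


def parallax_distance_pc(parallax_arcsec):
    """Distance in parsecs from a parallax angle in arcseconds.

    The parallax angle is half the star's apparent shift when viewed
    from opposite sides of Earth's orbit.
    """
    if parallax_arcsec <= 0:
        raise ValueError("parallax must be positive")
    return 1.0 / parallax_arcsec


def standard_candle_distance(known_distance, flux_ratio):
    """Distance to a far candle, given an identical candle at known_distance.

    flux_ratio is (apparent brightness of far candle) divided by
    (apparent brightness of near candle). Brightness falls off as
    1 / distance**2, so distance scales as 1 / sqrt(flux_ratio).
    """
    if flux_ratio <= 0:
        raise ValueError("flux ratio must be positive")
    return known_distance / math.sqrt(flux_ratio)


# A star with a parallax of 0.1 arcsecond lies 10 parsecs away.
near = parallax_distance_pc(0.1)
# An identical star that looks 100 times fainter is 10 times farther.
far = standard_candle_distance(near, 1 / 100)
print(f"near star: {near:.1f} pc, far star: {far:.1f} pc")
```

The same chaining of a geometric distance into a brightness-based one is what lets each rung of the distance scale calibrate the next.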

Traditionally, astronomers have exploited the standard candle potential of a particular class of stars known as Cepheid variables. Cepheids periodically brighten and dim on a regular schedule, with the timing depending on their intrinsic average brightness. Parallax establishes the brightness-distance relation for nearby Cepheids; that relation can then be used to estimate distances for those much farther away.

For measuring the Hubble constant, though, even more distant standard candles are needed, and that’s a job for supernovas. Supernovas of one category, known as type 1a, are not all exactly equally bright, but their intrinsic brightness can be calculated based on how fast their light dims over time. Currently Freedman and colleagues are combining data on type 1a supernovas in galaxies that also contain Cepheids, allowing a calibration of the distance scale. Along with how fast objects are receding (inferred from the colors of light they emit), those distances determine the expansion rate. Combined supernova and Cepheid data “at present offer the best opportunity to measure the Hubble constant and minimize the systematic errors,” Freedman says.
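In its simplest form, the final step is fitting a straight line: recession speed equals the Hubble constant times distance. The sketch below illustrates this with a least-squares fit through the origin. The three galaxies are invented numbers for illustration only, not measurements from the Carnegie Hubble project, and the speed-from-redshift shortcut (speed of light times redshift) holds only for relatively nearby galaxies.

```python
C_KM_S = 299_792.458  # speed of light in km/s


def velocity_from_redshift(z):
    """Approximate recession speed in km/s; valid only for small redshift z."""
    return C_KM_S * z


def fit_hubble_constant(distances_mpc, velocities_km_s):
    """Least-squares slope of velocity vs. distance, forced through the origin.

    Returns H0 in km/s/Mpc.
    """
    if not distances_mpc or len(distances_mpc) != len(velocities_km_s):
        raise ValueError("need equal-length, non-empty lists")
    numerator = sum(d * v for d, v in zip(distances_mpc, velocities_km_s))
    denominator = sum(d * d for d in distances_mpc)
    return numerator / denominator


# Hypothetical galaxies: distances (Mpc) as if from supernova brightness,
# redshifts as if from the shift in the colors of their light.
distances = [50.0, 100.0, 200.0]
redshifts = [0.012, 0.0245, 0.0485]
velocities = [velocity_from_redshift(z) for z in redshifts]

h0 = fit_hubble_constant(distances, velocities)
print(f"Estimated Hubble constant: {h0:.1f} km/s/Mpc")
```

Because the slope depends directly on the distances, any systematic error in the Cepheid or supernova calibration carries straight through to the Hubble constant.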

She points out that this method offers a direct measure of the expansion rate, while the cosmic microwave background readings give merely indirect estimates. Planck measured temperature differences in space, relics of variations in the matter density in the baby universe. Combined with other data and assumptions, those measurements can be used to deduce other properties of the cosmos. Planck’s value for the Hubble constant is the best fit for a whole model of the universe, including a model for the nature of the dark energy.

“So maybe the disagreement is a clue that the dark energy is not quite as simple as you think,” says Harvard University cosmologist Robert Kirshner. “But it’s too soon to conclude that.”

If the Hubble constant is truly on the high side, as supernova data indicate, then the expansion of the cosmos is now a little faster than it has been, on average. That could imply that the dark energy’s strength changes over time. “It’s an interesting possibility,” says Kirshner. Dark energy that changes in strength has implications for the fate of the cosmos. Rather than expanding forever, the universe could someday get torn to shreds in a “Big Rip.”

If the Planck value for the expansion rate is correct, though, then the standard model of the universe’s ingredients needs to be adjusted to make all the numbers fit. Those ingredients include a little ordinary matter, a lot more dark matter and considerably more dark energy. Taken together, these components produce a consistent model of the universe in which the geometry of space is just about perfectly flat (meaning that ordinary Euclidean plane geometry describes it accurately).

Before Planck, the universe’s mass-energy recipe consisted of 4.5 percent ordinary matter, nearly 23 percent dark matter and almost 73 percent dark energy. Planck’s estimates shift the dark energy down to about 68 percent, with dark matter nearly 27 percent and ordinary matter at close to 5 percent.

Speculative solutions

Since the Planck results came out in March 2013, physicists have searched for ways that tinkering with the standard model could explain the Hubble constant inconsistency. One proposal calls for modifying Einstein’s general relativity, the theory of gravity that provides the foundation for all cosmological science. In this approach gravity itself would be massive: Gravitons, the supposedly massless particles that transmit gravitational force, would possess a small mass, adding a new field to space. That field could influence the acceleration of the universe’s expansion attributed to dark energy, Douglas Spolyar of the Institute of Astrophysics in Paris proposed in a paper published December 11 in Physical Review Letters with Martin Sahlén and Joe Silk of the University of Oxford.

If graviton mass diminishes the effect of dark energy, they point out, then dark energy would be stronger in regions with less gravity, such as nearby voids in space. More vigorous, expansion-producing dark energy in local voids could explain why data from nearby supernovas would yield a higher value of the Hubble constant than measures of the more distant universe probed by Planck.

In another departure from standard cosmology, André Costa of the University of São Paulo and colleagues propose a conspiracy between dark matter and dark energy, the two most mysterious ingredients in the cosmic recipe. In the standard view, the dark sides of the universe are independent components. One adds to gravitational attraction (playing an important role in forming galaxies); the other exerts gravitational repulsion, causing the cosmic expansion rate to accelerate. But if they renounce independence and interact in some way — like two medications that cure separately but kill in combination — Hubble constant measurements could be contaminated.

If, for instance, energy can flow from dark matter to dark energy, then the Planck data would underestimate the true Hubble constant, which would be closer to the value measured by supernova studies, Costa and colleagues pointed out in a paper posted last November at arXiv.org.

It’s possible, other researchers suggest, that resolution will come without such drastic challenges to current orthodoxy. It may just be that the two measurements differ because they probe different parts of the universe. And not only do the two methods measure the expansion at different eras of time, they also probe physics on different scales. Supernovas are big by human standards, but on the cosmic scale they’re like points in space. Measurements of the cosmic microwave background probe vastly bigger patches of sky, as a team of French cosmologists pointed out in a paper last year in Physical Review Letters.

Waiting for more data

Ultimately all the speculations will be filtered by more data — from Planck, supernova studies and other methods. In December, for instance, the European Space Agency’s Gaia probe was launched; it will produce more precise Cepheid parallaxes to feed into measurements of the Hubble constant using the supernova method. Already the BOSS project, part of the Sloan Digital Sky Survey, has contributed new fodder for the Hubble debate using clues called baryon acoustic oscillations.

Interaction of matter and light in the very young universe produced concentric pressure waves, or acoustic oscillations, much like the ripple in a pond produced when a rock falls in the water. The ridges of these sound-wave ripples would have deposited matter (small seeds that would grow into galaxies) at specific separation distances. Thus the signature of those ripples ought to be reflected in the distribution of galaxies in space today. BOSS observations show that the preferred distance between two galaxies is about 150 megaparsecs (roughly 490 million light-years). Combined with a redshift measurement, which reveals how fast a galaxy is moving away from Earth, BOSS data provide a new check on the cosmic distance scale. Adding in data from cosmic microwave background probes allows another computation of the Hubble constant.

In January, at the American Astronomical Society meeting near Washington, D.C., BOSS scientists presented their latest results, which yield a Hubble constant of 68 to 69. That result remains lower than the value from supernova measurements, said BOSS team member Daniel Eisenstein of the Harvard-Smithsonian Center for Astrophysics.

“It’s not in sharp disagreement, but it is interesting, so there are a lot of groups trying to track this problem and trying to understand what the resolution might be,” he said. “Today we still have this mild tension. We’ll see where we’re at in six to 12 months.”

In any case, as Freedman points out, the current Hubble constant debate is about a drastically narrower range than in the days when competing camps championed values as high as 100 or lower than 50.

“Fortunately the range of values for the Hubble constant that are now being measured, and the uncertainties in those measurements, have come down quite considerably,” she said at the Texas symposium. “And there’s also a huge amount of progress planned for the future that will set this controversy to rest. There remains the exciting opportunity that there is new physics. Or not.”

Tom Siegfried is the former editor in chief of Science News.