FAST FIGURES Scientists have reported a quicker way to multiply extremely large numbers — calculations vastly more complex than this one.

graja/Shutterstock

Multiplying 2 x 2 is easy. But multiplying two numbers with more than a billion digits each — that takes some serious computation.

The multiplication technique taught in grade school may be simple, but for really big numbers, it’s too slow to be useful. Now, two mathematicians say that they’ve found the fastest way yet to multiply extremely large figures.

The duo claim to have achieved an ultimate speed limit for multiplication, first suggested nearly 50 years ago. That feat, described online March 18 at the document archive HAL, has not yet passed the gauntlet of peer review. But if the technique holds up to scrutiny, it could prove to be the fastest possible way of multiplying whole numbers, or integers.

If you ask an average person what mathematicians do, “they say, ‘Oh, they sit in their office multiplying big numbers together,’” jokes study coauthor David Harvey of the University of New South Wales in Sydney. “For me, it’s actually true.”

When making calculations with exorbitantly large numbers, the most important measure of speed is how quickly the number of operations needed — and hence the time required to do the calculation — grows as you multiply longer and longer strings of digits.

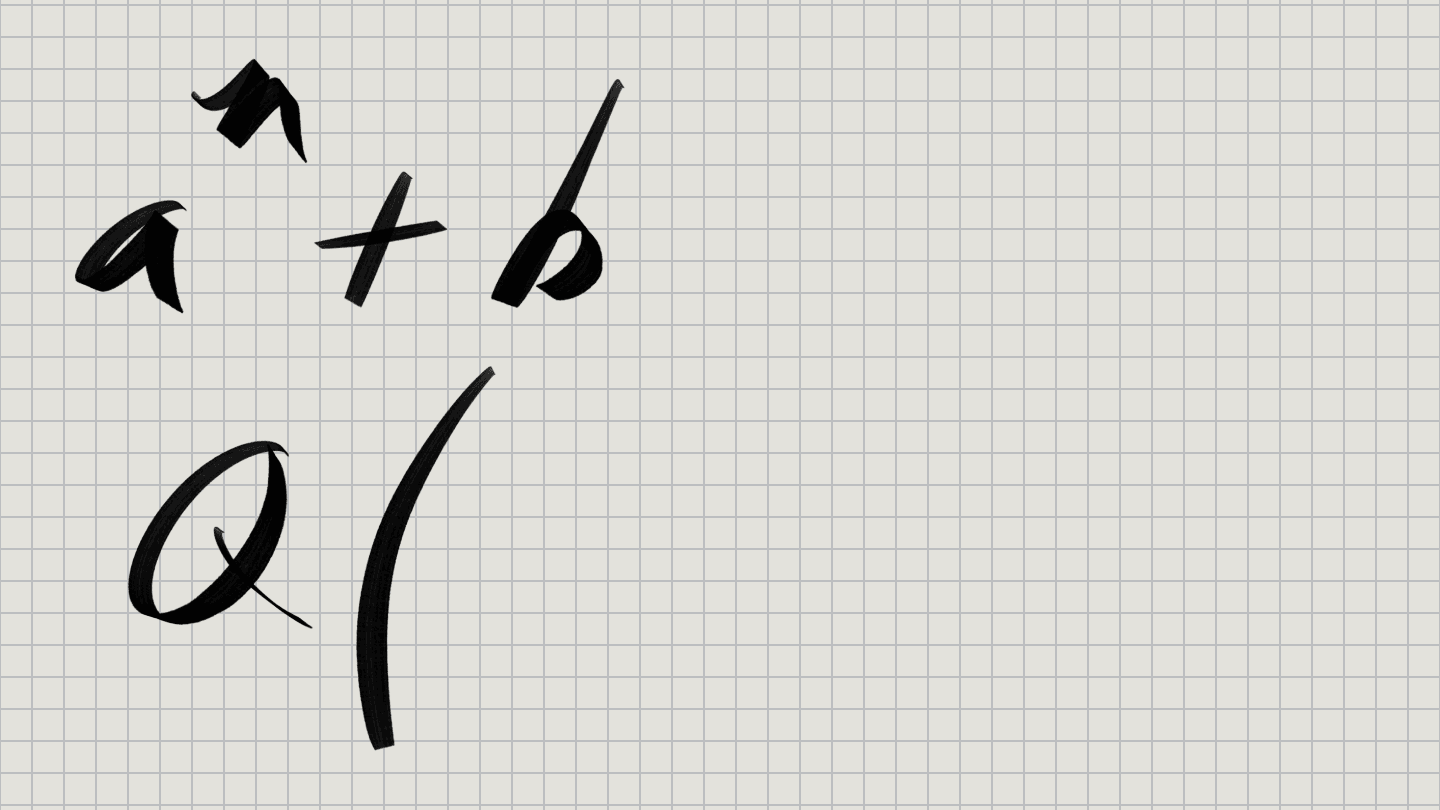

That growth is expressed in terms of n, defined as the number of digits in the numbers being multiplied. For the new technique, the number of operations required is proportional to n times the logarithm of n, expressed as O(n log n) in mathematical lingo. That means that, if you double the number of digits, the number of operations required will increase a bit faster, more than doubling the time the calculation takes.

But, unlike simpler methods of multiplication, the time needed doesn’t quadruple, or otherwise rapidly blow up, as the number of digits creeps up, report Harvey and Joris van der Hoeven of the French national research agency CNRS and École Polytechnique in Palaiseau. That slower growth rate makes products of bigger numbers more manageable to calculate.

The previously predicted max speed for multiplication was O(n log n), meaning the new result meets that expected limit. Although it’s possible an even speedier technique might one day be found, most mathematicians think this is as fast as multiplication can get.

“I was very much astonished that it had been done,” says theoretical computer scientist Martin Fürer of Penn State. He discovered another multiplication speedup in 2007, but gave up on making further improvements. “It seemed quite hopeless to me.”

The new technique comes with a caveat: It won’t be faster than competing methods unless you’re multiplying outrageously huge numbers. But it’s unclear exactly how big those numbers have to be for the technique to win out — or if it’s even possible to multiply such big numbers in the real world.

In the new study, the researchers considered only numbers with more than roughly 10214857091104455251940635045059417341952 digits when written in binary, in which numbers are encoded with a sequence of 0s and 1s. But the scientists didn’t actually perform any of these massive multiplications, because that’s vastly more digits than the number of atoms in the universe. That means there’s no way to do calculations like that on a computer, because there aren’t enough atoms to even represent such huge numbers, much less multiply them together. Instead, the mathematicians came up with a technique that they could prove theoretically would be speedier than other methods, at least for these large quantities.

There’s still a possibility that the method could be shown to work for smaller, but still large, numbers. That could possibly lead to practical uses, Fürer says. Multiplication of these colossal numbers is useful for certain detailed calculations, such as finding new prime numbers with millions of digits (SN Online: 1/5/18) or calculating pi to extreme precision (SN Online: 12/10/02).

Even if the method is not widely useful, making headway on a problem as fundamental as multiplication is still a mighty achievement. “Multiplying numbers is something people have been working on for a while,” says mathematical physicist John Baez of the University of California, Riverside. “It’s a big deal, just because of that.”