What Donkey Kong can tell us about how to study the brain

Unconventional test turns up weaknesses in brain research tools

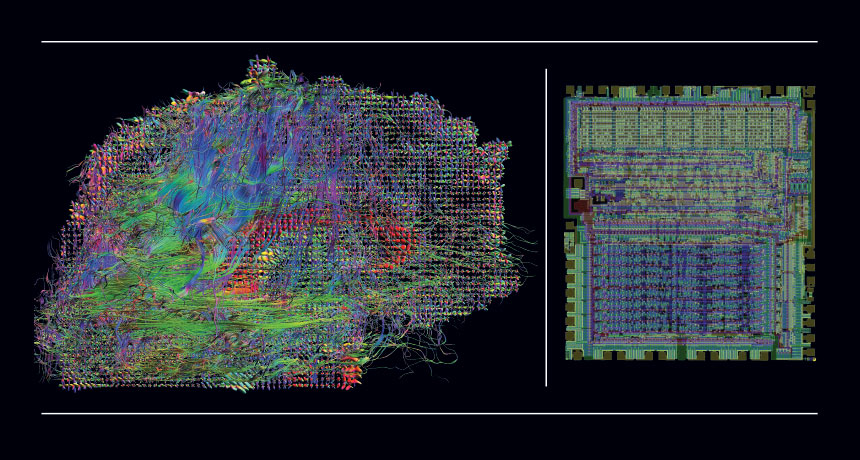

GAMING THE BRAIN Neuroscience tools didn’t reveal much about the inner workings of a simple microprocessor (right), so how can they grasp the much more complex brain?

From left: Courtesy of Lab. of Neuro Imaging and Martinos Ctr. for Biomedical Imaging, Consortium of the Human Connectome Project; Greg James of the Visual 6502 project.

Brain scientists Eric Jonas and Konrad Kording had grown skeptical.