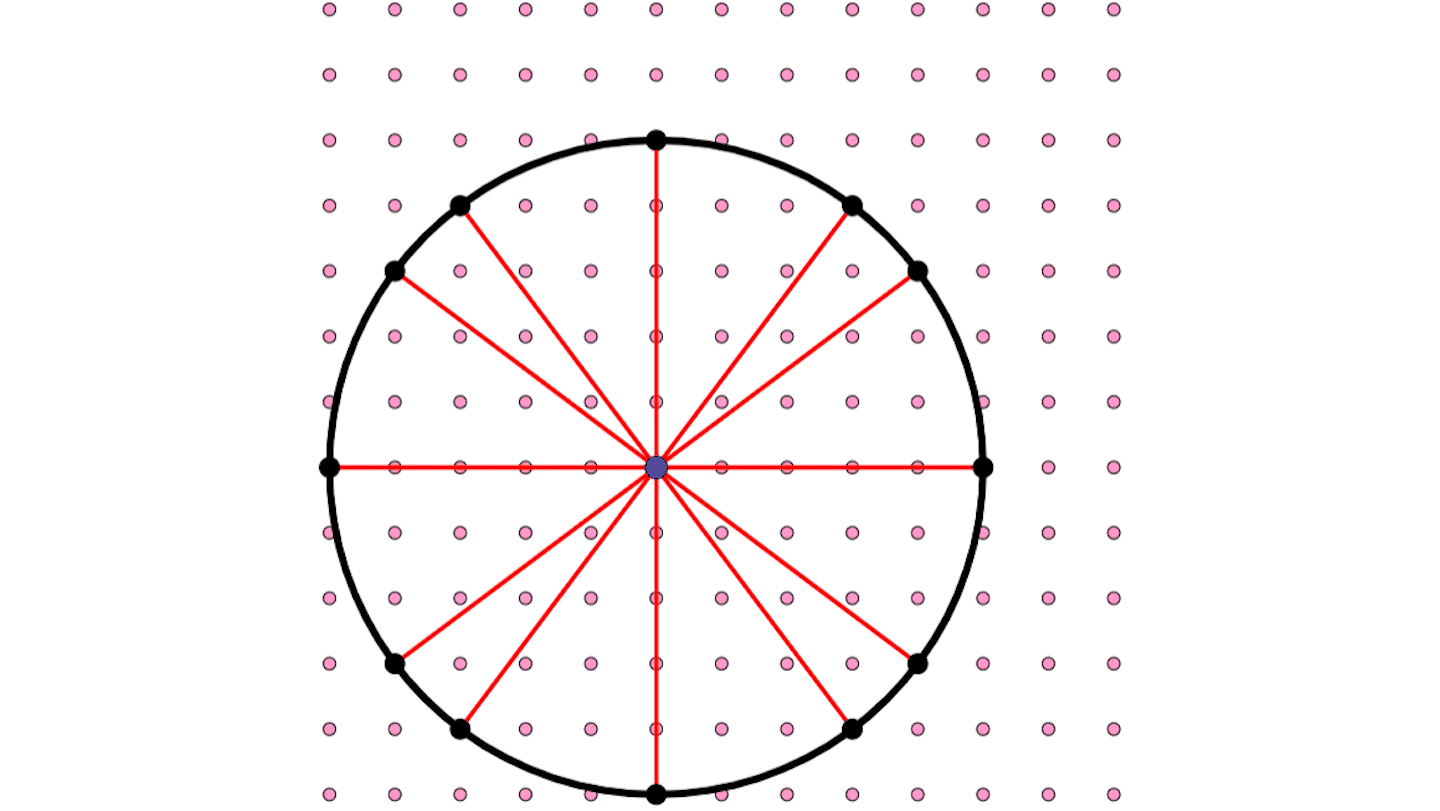

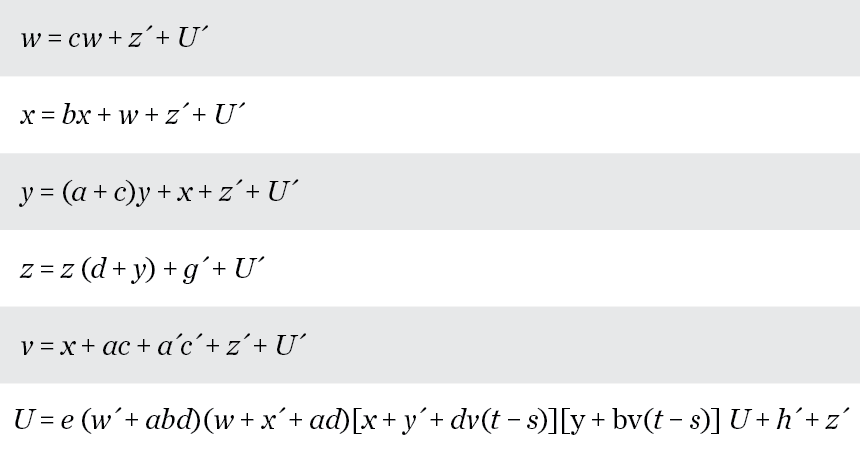

Claude Shannon, born 100 years ago, devised the mathematical representation of information that made the digital era possible. Shown above are the equations he developed to mathematically describe how the circuitry in an electronic combination lock functions.

C.E. Shannon. "A symbolic analysis of relay and switching circuits." Transactions of the American Institute of Electrical Engineers, Vol. 57, 1938, p. 713. Adapted by E. Otwell

Before anybody even had a computer, Claude Shannon figured out how to make computers worth having.

As an electrical engineering graduate student at MIT, Shannon played around with a “differential analyzer,” a crude forerunner to computers. But for his master’s thesis, he was more concerned with relays and switches in electrical circuits, the sorts of things found in telephone exchange networks. In 1937 he produced, in the words of mathematician Solomon Golomb, “one of the greatest master’s theses ever,” establishing the connection between symbolic logic and the math for describing such circuitry. Shannon’s math worked not just for telephone exchanges or other electrical devices, but for any circuits, including the electronic circuitry that in subsequent decades would make digital computers so powerful.

It’s now conveniently a good time to celebrate Shannon’s achievements, on the occasion of the centennial of his birth (April 30) in Petoskey, Michigan, in 1916. Based on the pervasive importance of computing in society today, it wouldn’t be crazy to call the time since then “Shannon’s Century.”

“It is no exaggeration,” wrote Golomb, “to refer to Claude Shannon as the ‘father of the information age,’ and his intellectual achievement as one of the greatest of the twentieth century.”

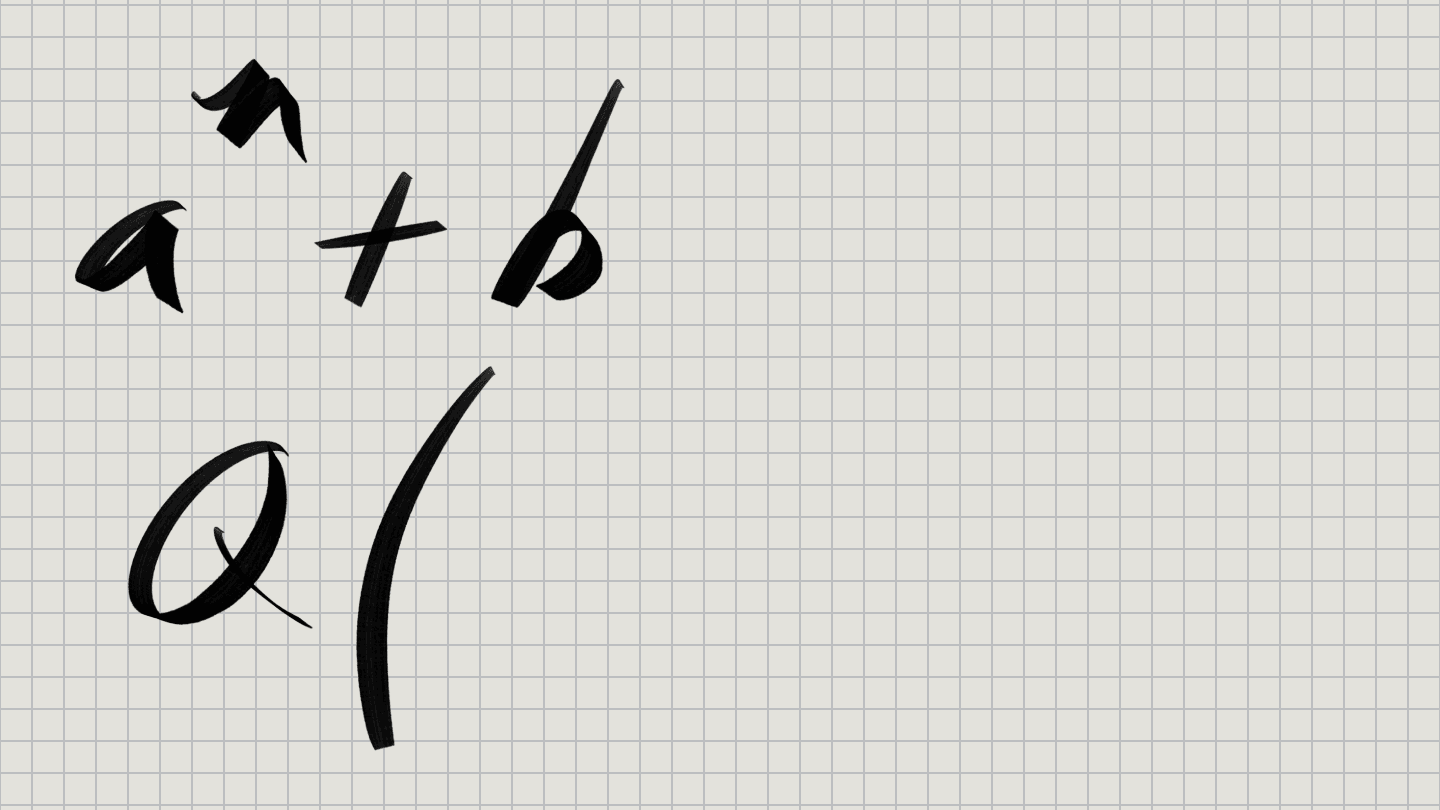

Shannon is most well-known for creating an entirely new scientific field — information theory — in a pair of papers published in 1948. His foundation for that work, though, was built a decade earlier, in his thesis. There he devised equations that represented the behavior of electrical circuitry. How a circuit behaves depends on the interactions of relays and switches that can connect (or not) one terminal to another. Shannon sought a “calculus” for mathematically representing a circuit’s connections, allowing scientists to be able to design circuits effectively for various tasks. (He provided examples of the circuit math for an electronic combination lock and some other devices.)

“Any circuit is represented by a set of equations, the terms of the equations corresponding to the various relays and switches in the circuit,” Shannon wrote. His calculus for manipulating those equations, he showed, “is exactly analogous to the calculus of propositions used in the symbolic study of logic.”

As an undergraduate math (and electrical engineering) major at the University of Michigan, Shannon had learned of 19th century mathematician George Boole’s work on representing logical statements by algebraic symbols. Boole devised a way to calculate logical conclusions about propositions using binary numbers; 1 represented a true proposition and 0 a false proposition. Shannon perceived an analogy between Boole’s logical propositions and the flow of current in electrical circuits. If the circuit plays the role of the proposition, then a false proposition (0) corresponds to a closed circuit; a true proposition (1) corresponds to an open circuit. More elaborate math showed how different circuit designs would correspond to addition or multiplication and other features, the basis of the “logic gates” designed into modern computer chips.

For his Ph.D. dissertation, Shannon analyzed the mathematics of genetics in populations, but that work wasn’t published. In 1941 he began working at Bell Labs; during World War II, he wrote an important (at the time secret) paper on cryptography, which required deeper consideration of how to quantify information. After the war he developed those ideas more fully, focusing on using his 1s and 0s, or bits, to show how much information could be sent through a communications channel and how to communicate it most efficiently and accurately.

In 1948, his two papers on those issues appeared in the Bell System Technical Journal. They soon were published, with an introductory chapter by Warren Weaver, in a book titled The Mathematical Theory of Communication. Today that book is regarded as the founding document of information theory.

For Shannon, communication was not about the message, or its meaning, but about how much information could be communicated in a message (through a given channel). At its most basic, communication is simply the reproduction of a message at some point remote from its point of origin. Such a message might have a “meaning,” but such meaning “is irrelevant to the engineering problem” of transferring the message from one point to another, Shannon asserted. “The significant aspect is that that actual message is one selected from a set of possible messages.” Information, Shannon decided, is a measure of how much a communication reduces the ignorance about which of those possible messages has been transmitted.

In a very simple communication system, if the only possible messages are “yes” and “no,” then each message (1 for yes, 0 for no) reduces your ignorance by half. By Shannon’s math, that corresponds to one bit of information. (He didn’t coin the term “bit” — short for binary digit — but his work established its meaning.) Now consider a more complicated situation — an unabridged English dictionary, which should contain roughly half a million words. One bit would correspond to a yes-or-no that the word is in the first half of the dictionary. That reduces ignorance, but not very much. Each additional bit would reduce the number of possible words by half. Specifying a single word from the dictionary (eliminating all the ignorance) would take about 19 bits. (This fact is useful for playing the game of 20 Questions — just keep asking about the secret word’s location in the dictionary.)

Shannon investigated much more complicated situations and devised theorems for calculating information quantity and how to communicate it efficiently in the presence of noise. His math remains central to almost all of modern digital technology. As electrical engineer Andrew Viterbi wrote in a Shannon eulogy, Shannon’s 1948 papers “established all the key parameters and limits for optimal compression and transmission of digital information.”

Beyond its practical uses, Shannon’s work later proved to have profound scientific significance. His math quantifying information in bits borrowed the equations expressing the second law of thermodynamics, in which the concept of entropy describes the probability of a system’s state. Probability applied to the ways in which a system’s parts could be arranged, it seemed, mirrored the probabilities involved in reducing ignorance about a possible message. Shannon, well aware of this connection, called his measure entropy as well. Eventually questions arose about whether Shannon’s entropy and thermodynamic entropy shared more than a name.

Shannon apparently wasn’t sure. He told one writer in 1979 that he thought the connection between his entropy and thermodynamics would “hold up in the long run” but hadn’t been sufficiently explored. But nowadays a deep conceptual link shows up not only between Shannon’s information theory and thermodynamics, but in fields as diverse as quantum mechanics, molecular biology and the physics of black holes.

Shannon’s understanding of information plays a central role, for instance, in explaining how the notorious Maxwell’s demon can’t violate thermodynamics’ second law. Much of that work is based on Landauer’s principle, the requirement that energy is expended when information is erased. In developing that principle, Rolf Landauer (an IBM physicist) was himself influenced both by Shannon’s work and the work of Sadi Carnot in discerning the second law in the early 19th century.

Something Shannon and Carnot had in common, Landauer once emphasized to me, was that both discovered mathematical restrictions on physical systems that were independent of the details of the system. In other words, Carnot’s limit on the efficiency of steam engines applied to any sort of engine, no matter what it was made of or how it was designed. Shannon’s principles specifying the limits on information compression and transmission apply no matter what technology is used to do the compressing or sending. (Although in Shannon’s case, Landauer added, certain conditions must be met.)

“They both find limits for what you can do which are independent of future inventions,” Landauer told me. That is, they have grasped something profound about reality that is not limited to a specific place or time or thing.

So it seems that Shannon saw deeply not only into the mathematics of circuits, but also into the workings of nature. Information theorist Thomas Cover once wrote that Shannon belongs “in the top handful of creative minds of the century.” Some of Shannon’s original theorems, Cover noted, were not actually proved rigorously. But over time, details in the sketchy proofs have been filled in and Shannon’s intuitive insights stand confirmed. “Shannon’s intuition,” Cover concluded, “must have been anchored in a deep and natural theoretical understanding.” And it seems likely that Shannon’s intuition will provide even more insights into nature in the century ahead.

Follow me on Twitter: @tom_siegfried