Data Recycling and Other No-No’s

An editorial in this month’s Journal of Clinical Investigation takes authors to task for recycling text — and occasionally data — in different journals.

JCI executive editor Ushma S. Neill notes that she was driven to take up the topic when she learned from a reader about the “nearly verbatim” publication of a JCI paper in a second journal. The core authors were the same on both papers. In other words, these scientists had essentially self-plagiarized their earlier work.

JCI contacted the authors and asked why they had violated copyright by submitting the contents of the first paper for publication elsewhere. Their answer: They’d been invited to give a talk (presumably based on that earlier paper), but a condition for being awarded the opportunity to speak at this conference was also agreeing to include their talk in a proceedings volume to be issued by another publisher.

JCI contacted the second publisher and got the unnamed publisher to retract the paper. JCI also contacted the authors’ institution and left it to the researchers’ bosses to take any punitive action.

I wish, however, that Neill had offered a bit more clarification on specifics of the incident and of her concerns. It might catalyze a more meaningful discussion amongst her subscribers — and ours.

After all, serious ethical lapses deserve rich censure. So why, for instance, did Neill shield the authors and second journal from public exposure?

To find out, I phoned Neill and learned that her staff had discussed identifying the guilty parties. In the end they didn’t, she said, because “we thought that keeping that information confidential was more appropriate” — even if it did deny JCI’s editors their “own self-satisfaction.”

That may have been the polite way to handle things, but I’d argue that shining a public light on such actions would offer a slap-on-the-hand painful enough to deter similar behavior in the future — either by the original scientists or anyone who read about their situation.

I also asked Neill if there was any way the authors could have recounted their JCI data for the second paper that she would have found acceptable. Review papers republish ideas and often some data. Was the “nearly verbatim” language in the second paper the crux of the problem? Would she have been incited to a similar rant (her words) if the authors had instead paraphrased, synopsized, or abstracted the ideas from the first paper?

“We wouldn’t have had any major beef,” Neill says, “even if [the authors] had used one or two of the same figures in some two-page summary of the JCI paper” and “labeled it as such.” But the authors’ second paper “was actually presented as if it was a new research article with graphs and Western blots, etc.” The authors cited their original JCI publication, but just once, and only buried in the section describing their research methods.

Passing off published research as something new — or even allowing naïve readers to mistake it as such — is unquestionably egregious. In fact, Neill told me, the new paper contained “exactly the same data, with some [details] removed. So there was less data in the other publication. Though puzzlingly enough, there were additional authors listed.”

In other words, there exists just cause for Neill to rant. JCI wants researchers to cite its paper as the source of data and ideas first published in its pages. If the same material is available elsewhere, others might choose to cite the latter source — especially since it would appear to be an update, not effectively just some reprint.

Neill suggests that perhaps the conference organizers that demanded a proceedings paper from the authors should bear some of the blame for putting researchers in this bind. It’s something they certainly should be mindful of — especially now.

But what about that second journal: If it doesn’t accept recycling another paper as ethical behavior (and presumably it doesn’t if it retracted the reused JCI paper), what measures does it employ to see that this doesn’t happen again? At a minimum, it should require all authors, even those submitting conference proceedings, to sign some pro forma document guaranteeing their material is substantially new and will not violate copyright. If no such document exists, the JCI incident should trigger that journal — and others — to generate one, and pronto.

What about JCI: Does it have such an author-signed pledge that the material being submitted to it is truly novel? “There’s an authorship agreement form that we require all of our authors to sign,” Neill says. When it was developed five years ago, such ethical behavior was “implied,” she says. “But I need to go back and make sure that that is explicit.”

Too bad that it has to be explicit. Unfortunately, as she and others have found the hard way, ethical behavior must be policed.

Neill pointed to some especially hard-to-fathom ethical lapses in an earlier editorial titled “When did everyone become so naughty?” It recounted researchers re-using control data from an earlier study for publication in a later report. Or publishing data on animal experiments that had never received approval from the authors’ institutional animal-care-and-usage committee.

And in a 2006 rant, Neill complained of being “continually aghast at the amount of time I spend policing misconduct and at the different ways some authors have found to manipulate data, undermine colleagues, and push the limits of respectability.”

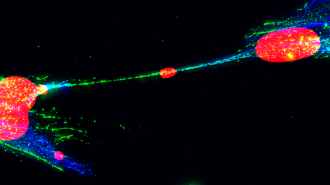

One growing problem, she and her colleagues find, is the use of image-enhancing software to doctor images that are being presented as data. Where confirmed, such revelations by her eagle-eyed staff have forced the retraction of papers and caused scientists to receive public censure. The most recent example of the latter: a University of Pennsylvania post-doctoral fellow was outed on the front page of the June 6 Chronicle of Higher Education for, among other things, copying part of an image and then flipping or reversing it “to make it look like a new finding.”