Deepwater Horizon damage footprint larger than thought

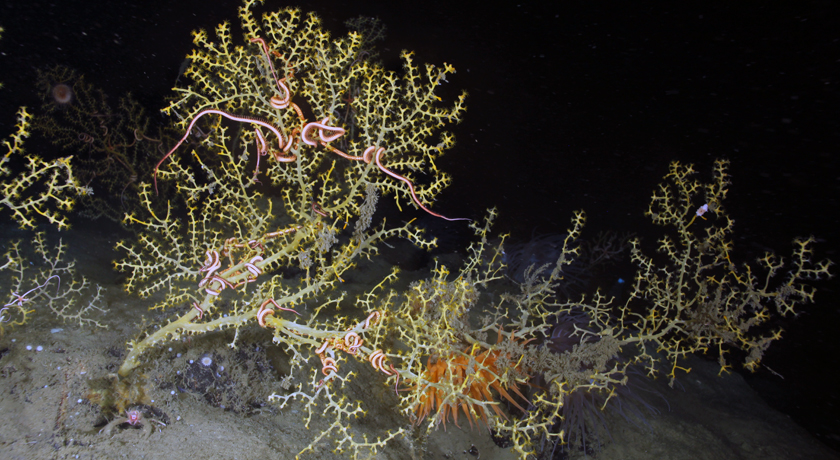

Healthy coral usually has a gold color. Patchy brown growths on colonies, such as this one located 6 kilometers from the Deepwater Horizon site, offer evidence of damage from the oil spill.

Fisher Lab/Penn State Univ.