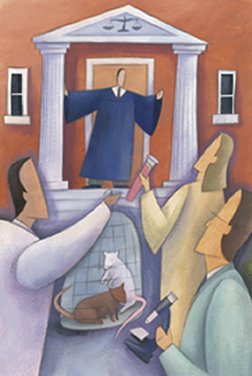

In television courtroom dramas, prosecutors and defendants’ attorneys parade expert witnesses who dazzle juries with insightful forensic analyses, new theories of mental incapacity, data suggesting dangerous flaws in technology, and assessments as to whether an individual’s sickness traces to toxic chemicals. In real life, however, many such scientific experts—and the data that their opinions draw upon—never make it to a jury.

Particularly in cases known as torts, in which victims claim injury from a product or circumstance, judges increasingly have been screening expert witnesses to decide whether the scientific evidence they might recite before a jury is reliable and relevant to the litigation. Affected cases have been primarily in civil courts, where the injured parties, or plaintiffs, claim that some actions by an individual, a company, or a government have caused the plaintiffs harm.

Although judges have always been permitted to preview and exclude expert evidence, relatively few exercised this right prior to a trio of U.S. Supreme Court decisions between 1993 and 1999, notes economist Lloyd Dixon of the RAND Institute for Civil Justice in Santa Monica, Calif. Beginning with the first of those decisions, known as Daubert v. Merrell Dow Pharmaceuticals or simply Daubert, rulings by the high court formally instructed federal judges to assume a gatekeeping role for the admission of science into trials.

The result has been a radical transformation of the rules of evidence in torts, says Margaret A. Berger of the Brooklyn (N.Y.) Law School. In more than a dozen analyses in a July 20 supplement to the American Journal of Public Health (AJPH), she, other legal scholars, academics, and attorneys outline the impacts of these judicial changes.

The reports describe both an increase since Daubert in the likelihood that scientific evidence will be challenged and great variability from court to court in what potential testimony gets excluded. One leading contention among these analysts: The increased likelihood that a judge will bar plaintiffs’ evidence from court reduces the chance that their case will ever reach trial.

Yet some legal scholars argue that these changes largely reflect a healthy winnowing of spurious and unsound evidence that before Daubert would have confused a jury. Judges now look for a better fit between scientific evidence and the issue being litigated, says Joe S. Cecil of the Federal Judicial Center’s Program on Scientific and Technical Evidence in Washington, D.C.

“I believe that, overall, Daubert was a step in the right direction,” he says, and that judges’ rulings “now more accurately reflect the scientific process than before Daubert.” Cecil acknowledges, however, that many judges are having problems carrying out their new responsibilities.

Sheila Jasanoff of Harvard University’s John F. Kennedy School of Government disagrees with Cecil’s generally upbeat assessment. In the years since Daubert, she says, “we’ve got an artificially elevated standard for evidence [admissibility] based on ideas about how science operates that are patently untrue.”

One point on which few Daubert analysts disagree is that judges and science experts usually come from different cultures and so have different vocabularies and goals. People studying the issue argue that the search for justice would benefit from the legal community learning more about science.

It started with nausea

In the 1980s, two women who had taken the drug Bendectin to combat morning sickness gave birth to children with severe defects. The drug is a combination of the antihistamine doxylamine and vitamin B6. William Daubert, the husband of one of the two women, and other members of the two families sued. The trial judge examined proposed evidence from nine experts and ruled that only the defendant’s—the drug maker’s—expert could testify.

This physician-epidemiologist had reviewed dozens of epidemiologic studies—which had used statistics to probe for connections between Bendectin use and health effects in large groups of women—and concluded that the data didn’t support a link between the drug and birth defects.

The plaintiffs’ experts had intended to refer to animal data, comparisons of the chemical structure of the drug with that of agents that cause fetal harm, and an unpublished reanalysis of epidemiologic studies. All these data were to show that the drug might cause birth defects. However, the judge decided that because there existed a wealth of published human data—epidemiologic studies that had included some 130,000 women—admitting any evidence other than the published epidemiology studies was unjustified.

The plaintiffs appealed to the Supreme Court, but in 1993, it affirmed the lower court’s decision. Moreover, it directed trial judges to become more proactive in culling unreliable or less-than-compelling scientific testimony from cases they oversee.

Two related Supreme Court opinions followed: General Electric Co. v. Joiner in 1997 and Kumho Tire Co. v. Carmichael in 1999. In the first case, the court ruled that judges could exclude the testimony even of well-credentialed experts espousing good science if it might confuse a jury by being insufficiently relevant to what caused the injury at issue in a case. The second opinion ruled that a judge could bar an expert from testifying if he or she used unusual criteria for interpreting data or events.

The influence of these decisions has been profound. Dixon reviewed all federal district court challenges to expert evidence in civil cases throughout a 2-decade period. He found that the challenges climbed from some 20 per year in the early 1980s to nearly 150 a year by the late 1990s, even as the number of cases rose by only 30 percent.

Not surprisingly, the rate at which judges excluded scientific evidence also went up during the 1990s, Dixon reports.

For instance, he cites a comparison between responses by judges surveyed by the Federal Judicial Center 2 years before the Daubert ruling and 5 years after it. Judges were less likely to admit expert evidence after Daubert, the survey found. In 1991, 75 percent said that they had accepted all expert evidence offered for their most recent trial, whereas in 1998, only 59 percent of judges had accepted all the proposed evidence. Moreover, when lawyers involved in the 1998 cases were asked how their jobs had changed since Daubert, the most common response, chosen by one-third of them, was “I made more motions … to exclude opposing experts.”

There’s wide variability in what would-be evidence judges choose to exclude. Some judges, Berger notes, “find all animal studies irrelevant”; others allow animal results in conjunction with findings on people. Many judges, but not all, throw out test-tube studies and analyses of chemical structures to gauge the activity of related compounds.

Philosopher Carl Cranor of the University of California, Riverside has considered how courts handle medical case studies, which are descriptions of individual patients’ experiences. He notes that the World Health Organization and the Institute of Medicine in Washington, D.C., accept such case studies “as evidence in making causal inferences,” especially about reactions to drugs, poisons, and chemical carcinogens.

Cranor examined all 64 of the federal torts since Daubert that dealt with toxic agents and mentioned case studies. Judges rejected case study–based testimony in 36 cases, he reports in the AJPH supplement.

Judges aren’t scientists

In Daubert, the Supreme Court instructed trial judges to ensure that any scientific evidence that they admit had been “derived by the scientific method.” The high court even offered guidelines for assessing such evidence, although it also cautioned that they were not to be viewed as “a definitive checklist or test.” The guidelines included whether testimony would be based on theories that had been tested, data that had been peer reviewed, or techniques with known error rates.

Berger says that few judges understand the scientific method. For example, she cites a 2001 study by Sophia Gatowski of the National Council of Juvenile & Family Court Judges in Reno, Nev. Titled “Asking the gatekeepers: A national survey of judges on judging expert evidence in a post-Daubert world,” it found that judges who had applied Daubert standards to evidence “had little understanding of the key concept of hypothesis testing or of the significance of error rates,” Berger says.

Adds Jasanoff, many judges seem to assume that the facts needed to clearly establish or reject causality in a tort case invariably exist somewhere. In fact, she says, because most scientific data were developed to test a hypothesis quite different from the one being litigated, no data may possess the good “fit” that judges desire.

Moreover, she maintains, judges often don’t recognize factors that might have limited the production of compelling evidence—for instance, that a defendant company might have hidden its workers’ medical or chemical-exposure records from outside researchers.

“Courts plainly feel that the hierarchy of evidence on causation starts with epidemiology,” says Michael D. Green of the Wake Forest University School of Law in Winston-Salem, N.C. He coauthored an assessment of how torts are being litigated nationally, due for release next year by the Philadelphia-based American Law Institute. His team has found that judges are reluctant to admit evidence from animal studies and other laboratory work, he says.

This is in contrast to researchers and regulators, who frequently make judgments about human hazards from data collected from animals and test tube experiments, Cranor observes. For his AJPH paper and a book due out next year, he reviewed carcinogenicity assessments by what he calls the “gold standard” adjudicator: the International Agency for Research on Cancer (IARC), headquartered in Lyon, France.

IARC has classified at least a few chemicals as “known human carcinogens,” even though no epidemiological result confirmed that designation, Cranor found. Nearly 50 percent of “probable human carcinogens” received that description from IARC on the basis of data from animal studies, often supported by test-tube or biochemical data.

Burden of proof

It’s in a defendant’s interest, Berger says, to challenge, under Daubert, virtually every piece of expert testimony proposed. And that’s what’s happening, she adds.

Today, plaintiffs must present the most-compelling witnesses available during a pretrial review or risk having their case dismissed by a judge, Berger and others argue. These exercises can end up resembling a trial itself, Berger adds—both in time and cost. Having to essentially try a case twice may limit tort litigation primarily to wealthy or class action claimants, Berger worries.

When Dixon examined some 400 federal district court cases that were tried between 1980 and 1999, he also found “a real shift, post-Daubert, in the reasoning judges used to exclude evidence.” Many of these trial judges began using terms offered by the Supreme Court as suggested criteria for such rulings, for instance, finding that evidence lacked “reliability” or “relevance.”

Those words are key, Berger contends, because although a trial judge’s ruling may be appealed, the Supreme Court has noted that appellate judges must defer to trial judges in their interpretation of evidence—unless a lower court’s decisions were blatantly erroneous. In other words, she explains, exclusions of expert evidence are generally irreversible, especially if the judge appears to have tried to uphold the Supreme Court’s criteria.

Yet trial judges often apply such criteria far differently than a scientist would, several papers in the AJPH supplement argue. For instance, the Daubert ruling “encourages judges to look at pieces of research individually,” observes David Michaels of the George Washington University School of Public Health and Health Sciences in Washington, D.C.

Researchers, by contrast, generally evaluate all apparently relevant data, then “weigh the evidence” supporting or challenging some alleged risk, notes science-policy analyst Sheldon Krimsky of Tufts University in Medford, Mass. Scientists would consider how the studies were designed, their size, whom or what they studied, and how closely their features match the plaintiff’s situation to determine how important findings are in resolving a question, he notes.

Admittedly, Krimsky observes in his AJPH paper, scientists don’t have set rules for assigning weights to various types of data. They apply their own criteria and the generally accepted standards of research.

Many judges appear uncomfortable about giving similar leeway to juries, Berger and Cecil note.

What such judges don’t seem to appreciate, says philosopher Susan Haack of the University of Miami (Fla.) School of Law, is that this weighing of disparate but often interlocking bits of scientific evidence is little different from assembling forensic and other conventional evidence to establish a defendant’s means, motive, and opportunity to have committed some crime.

Bridging cultures

Scientists question, test, evaluate, and retest various hypotheses, looking for an explanation that best fits the observations, says Douglas L. Weed of the National Cancer Institute in Bethesda, Md. They’re not expecting “truth,” he notes, because they know that “uncertainty flows through science like a river.”

“Consumers of science, of which the law is one, have another use for science—to help answer whether one thing caused another,” says Weed.

David E. Bernstein of the George Mason Law School in Arlington, Va., explains that torts ask juries to decide whether a defendant’s action “is more likely than not to actually have caused the [plaintiff’s] injury.” Yet seldom can science answer conclusively that one thing caused another, or even that there’s a 51 percent chance that it did.

The National Academies in Washington, D.C., recently convened a panel to investigate Daubert’s impact. Its report is due out this fall. Weed, who served as the committee’s science chair, says the committee has found not only that science and the law represent two distinct cultures but also that the implications of judges’ excluding evidence under the authorization of Daubert are too important for scientists to ignore.

Moreover, he says, the panel saw a growing need “for getting science into the legal curriculum,” so judges might better understand what they can reasonably expect of scientific experts.

Berger, the legal chair of the panel, has been offering such programs for the past several years. Each of her 2-day-long “Science for Judges” sessions introduces 25 state and 25 federal judges to some topical development, such as what’s going on in biotechnology research or legal limits on how scientists can gather data. “Certainly, there would not be this program but for Daubert,” Berger says.

Michaels’ group at George Washington University administers the 3-year-old Project on Scientific Knowledge and Public Policy. Its objective is not only to enhance understanding by both scientists and the lay public of how science is used—or misused—in government decision making and the law but also to inform decision makers about the nature of scientific inquiry and opinion. Last year, the project began funding research into the impacts of Daubert on public policy and courts’ use of science.

What seems clear, says Michaels, who edited the AJPH supplement, is that because of the Daubert decision, the work, theories, and interpretation of data by even careful and credible scientists are often barred from trials. Restricting a jury’s access to such information can diminish the likelihood that justice will be served, he argues.

This exclusion of science, he adds, might also affect the conduct and stature of research, as “judges essentially tell scientists that certain of their avenues of inquiry are not valued.”

That’s why Michaels notes that, at least for scientists, “Daubert is the most influential Supreme Court ruling you’ve never heard of.”