To simulate chance occurrences, a computer can’t literally toss a coin or roll a die. Instead, it relies on special numerical recipes for generating strings of shuffled digits that pass for random numbers. Such sequences of pseudorandom numbers play crucial roles not only in computer games but also in simulations of physical processes.

Researchers have long known that the use of particular methods for generating random numbers can produce misleading results in simulations. In one famous case in 1992, physicists discovered that even “high-quality” random-number generators, which pass a battery of randomness tests, can yield incorrect results under certain circumstances.

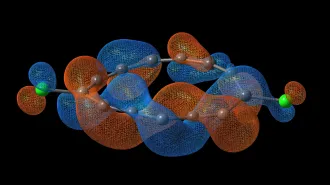

At that time, Alan M. Ferrenberg, a computational physicist at the University of Georgia, and his coworkers were interested in simulating the so-called Ising model, which features an abrupt, temperature-dependent transition from an ordered to a disordered state in a system in which neighboring particles have either the same or opposite spins.

To accomplish this goal, they selected a new spin-flipping algorithm developed by Ulli Wolff of the University of Kiel in Germany and a “subtract-with-borrow” random-number generator invented by George Marsaglia and Arif Zaman of Florida State University.

In preparation for simulating the three-dimensional Ising model, Ferrenberg tested this package on the two-dimensional version, which has a known answer, and he got the wrong result. When he substituted another random-number generator for the “subtract-with-borrow” scheme that he had used initially, Ferrenberg found that he came much closer to the correct answer–even though the substitute itself had well-known defects.

It was a very discouraging outcome. “Since there is no reason to believe that the model which we have investigated has any special idiosyncrasies, these results offer another stern warning about the need to very carefully test the implementation of new algorithms,” Ferrenberg and his coworkers concluded at that time. “In particular, this means that a specific algorithm must be tested together with the random-number generator being used regardless of the tests which the generator has passed.”

The uncertainty about how subtle, hidden patterns among digits spewed out by various random-number generators may influence simulation results presents researchers using so-called Monte Carlo methods with a serious dilemma, especially when the answer is not known.

Now, Stephan Mertens and Heiko Bauke of Otto-von-Guericke Universität in Magdeburg, Germany, have provided some mathematical insight into why the “subtract-with-borrow” random-number generator failed in the Ising model simulations and, more generally, why many random-number generators give wrong results in so-called cluster Monte Carlo simulations and related computational experiments.

Part of the problem stems from the fact that almost all random-number generators calculate a new random number from preceding values. The only true randomness in such schemes is in the choice of the starting value, or seed, which is only a few hundred bits at most.

The small amount of randomness in the seed is expanded by the generator to the 1010 or more random numbers used in a typical Monte Carlo simulation. Subtle biases in the generated sequence of random numbers, which themselves serve as inputs for the number-generating recipe, can give erroneous results when these random numbers are used with certain computational algorithms.

Such problems can arise even in the most basic Monte Carlo computations.

In a random-walk simulation, for example, a coin toss determines in which direction a walker moves along a lattice: north or south, east or west, and so on. Curiously, some popular random-number generators fail even in simulating a coin toss. Over time, they should produce roughly the same number of zeros and ones (heads and tails). Instead, random-number generators that are often used to produce such sequences tend to cluster zeros together, introducing a bias.

Technically, Bauke and Mertens report, “The problems arise because of the special role of the zero in the arithmetic of finite fields.”

The answer is to avoid or ignore zeros. For example, it would be better to use a random-number generator that produces a sequence of zeros, ones, and twos and then ignores the zeros to give a sequence of just ones and twos.

It pays to be extremely careful in working with random-number generators and to take nothing for granted. At the same time, with a theoretical understanding of what sorts of problems can arise with certain generators, it actually becomes safer to use those particular generators.

Algorithm guru Donald Knuth of Stanford University once advised: “. . . random numbers should never be produced by a random method. Some theory should be used.”

Mertens and Bauke now add: “. . . it is better to know and to control the deficiencies of a random number generator than to rely on fancy methods which are basically justified by empirical observations.”