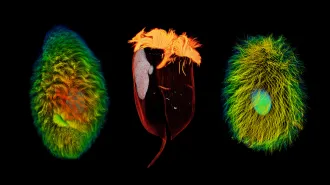

IN THE HOTHOUSE Lab experiments and crystals found in Colorado’s Green River Formation, shown, suggest that hot temperatures around 50 million years ago resulted from lower carbon dioxide levels in the atmosphere than previously thought.

USGS

The hottest time since dinosaurs roamed the planet was caused by nearly half as much carbon dioxide in the air as previously thought, crystals from Earth’s past suggest.

During the Eocene around 50 million years ago, climbing CO2 levels heated the planet by more than 5 degrees Celsius. By examining crystals grown in this “hothouse” climate, researchers discovered that Eocene CO2 levels were as low as 680 parts per million. That’s nearly half the 1,125 ppm predicted by previous, less accurate crystal experiments, the researchers report online October 23 in Geology.

“Earth’s climate may be more sensitive to increased CO2 than is currently thought,” says coauthor Tim Lowenstein, a geologist at Binghamton University in New York. As of 2015, CO2 levels have reached 400 ppm and are on track to top 800 ppm by the end of the century. Current projections say that doubling CO2 will result in a 3-degree rise in global average temperature. The new work suggests that this prediction significantly underestimates the impact of greenhouse warming, Lowenstein says.
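The arithmetic behind these figures can be sketched in a few lines. This is only an illustration, not the researchers' method: it assumes the common simplification that each doubling of CO2 adds a fixed amount of warming, and the function name `warming_from_co2` is hypothetical.

```python
import math

def warming_from_co2(c_start_ppm, c_end_ppm, sensitivity_c_per_doubling=3.0):
    """Estimated warming in degrees Celsius.

    Assumption: warming scales with the number of CO2 doublings
    (a standard simplification, not taken from the study itself).
    """
    doublings = math.log2(c_end_ppm / c_start_ppm)
    return sensitivity_c_per_doubling * doublings

# Going from 400 ppm to 800 ppm is exactly one doubling.
print(warming_from_co2(400, 800))  # 3.0

# Gap between the older (1,125 ppm) and revised (680 ppm)
# Eocene estimates, expressed in doublings of CO2.
print(round(math.log2(1125 / 680), 2))  # 0.73
```

Under that assumption, the revised estimate means the Eocene warmth came with roughly 0.7 fewer doublings of CO2 than previously thought, which is why the result points toward a more sensitive climate.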

But the past doesn’t necessarily reflect the present, says paleoclimatologist Ethan Hyland of the University of Washington in Seattle. A lot has changed over the last 50 million years (new types and abundances of vegetation, for example) that could change the impact of CO2 on the climate. “Sensitivity might not be a fixed parameter; it may be something that changes” over time, Hyland says.

During the lead-up to the Eocene warming peak, there was a sudden increase in atmospheric CO2, possibly the result of volcanic eruptions or a meteor impact (SN: 5/30/15, p. 15). This abrupt warming coinciding with a rise in atmospheric carbon seemed a good analog for modern climate change. Precise CO2 measurements from so long ago are tricky, however, because the ice core record extends back only about 1.5 million years.

In recent years, newer proxies for the presence of ancient CO2 have suggested that carbon concentrations during the Eocene were instead as low as 280 ppm. These proxies, however, rely largely on changes in biological systems, such as soil compositions and leaf anatomy, rather than on physical processes. Life has evolved over millions of years, so these proxies carry more uncertainty and are therefore given less weight than the mineral nahcolite, Lowenstein says.

With so much carbon confusion surrounding the Eocene, Elliot Jagniecki, now a geologist for ConocoPhillips, together with Lowenstein and colleagues, reexamined Eugster’s earlier experiments. The minimum carbon and temperature conditions for nahcolite formation overlap with the maximum conditions for the formation of the minerals trona and natron. Eugster had created conditions where the three minerals coexisted, then measured CO2 levels. Jagniecki’s experiments, however, started from known CO2 concentrations and looked at which minerals formed. This small improvement in experimental technique led to a big change in thinking: At Eocene-like temperatures, nahcolite forms when carbon levels are as low as 680 ppm, the researchers found.

Lowenstein says that he is now hunting for Eocene-era nahcolite elsewhere in the world to confirm the team’s finding and provide more insights into how modern climate change will unfold.