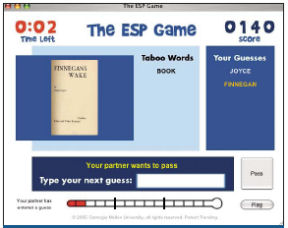

I’m online, wrapped up in the ESP Game, and I’m finding it hard to stop. As each round ends, I’m eager to try again to rack up points. The game randomly pairs players who have logged on to the game’s Web site (www.espgame.org). Both players see the same image, selected from a large database, but they can’t communicate directly. Each player types in words that describe the image. When the words match, both players earn points and move to the next image. Each round lasts 150 seconds and displays up to 15 images. I keep hoping that my invisible, anonymous partner’s thoughts are in sync with mine, all the better to rise on the list of top players.

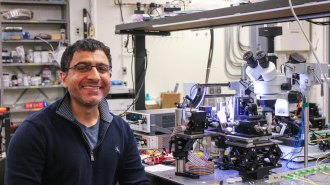

I’m having fun, but there’s more to this game than meets the eye. To its inventor, computer scientist Luis von Ahn of Carnegie Mellon University in Pittsburgh, and to his colleagues, the game provides an innovative way to label images with descriptive terms that make them easier to find online.

Most of the billions of images on the Web have incomplete captions or no labels at all, von Ahn says. Accurate labels would improve the relevance of image search results and make the information in images accessible to blind users. However, computers aren’t good at looking at images and determining what’s in them, and it’s boring for a person to label images.

“The ESP Game turns the tedious task of entering words that describe an image into something that’s fun,” von Ahn says.

Moreover, the game is addictive, he admits. Since it debuted in late 2003, more than 100,000 people have registered to play. Some players spend more than 40 hours a week accumulating points at the site.

Last fall, Google licensed the game and created its own version, called Google Image Labeler (images.google.com/imagelabeler/). “Image-search quality remains a top priority for Google,” says a company spokesperson.

The ESP Game is just the beginning of turning playtime to profit. Von Ahn is working on several new games to solve other problems, such as locating objects in images, filtering content, translating languages, accurately summarizing text passages, and developing common sense.

“Computers are really good at solving certain kinds of problems,” says Ben Bederson of the Human-Computer Interaction Lab at the University of Maryland at College Park. “This offers the opportunity to solve problems that computers just can’t do.”

“People around the world spend billions of hours playing computer games,” von Ahn says. “We can channel all this time and energy into useful work to solve large-scale computational problems and collect the data necessary to make computers more intelligent.”

Picture puzzles

Von Ahn first thought of harnessing human brainpower for computational purposes when, as a graduate student, he was working on a security scheme to help the Internet company Yahoo! solve a problem. Yahoo! permits people to sign up for free e-mail accounts, memberships in groups, and other services. However, people can take advantage of the system by using computer programs called bots to sign up hundreds of accounts automatically and then use the accounts to distribute the uninvited mass mailings known as spam.

Working with Carnegie Mellon’s Manuel Blum and others, von Ahn looked for a task that people could do easily but that computers would find difficult.

Suppose, for example, that a computer-generated image contains seven different words, randomly selected from a dictionary and displayed so that they overlap and appear against a complex, colored background. A person can almost always identify at least three of the words. A computer program would typically recognize none of them.

Blum coined the word captcha to describe such tasks. The word stands for “completely automated public Turing test to tell computers and humans apart.” Traditionally, a Turing test is one in which a person asks questions of two hidden respondents and, on the basis of the answers, guesses which of them is a person and which is a computer. In the case of a captcha, a computer generates the test and judges responses to it, but, if given the test, another computer can’t pass it.

Many online companies now use captchas to control registration, confirm transactions, check voting in online polls, manage the sale of concert tickets, and perform other tasks.

While thinking about things that people can do but computers can’t, von Ahn realized that he could take advantage of human capabilities to solve problems such as image labeling. “I toyed around with a lot of possibilities until the ESP Game came about,” he says.

It took months to go from idea to working prototype to final version, as von Ahn and his colleagues incorporated various features to make the game more useful and more fun. For example, some images have lists of one or more taboo words, which players can’t use. This encourages players to go beyond the most obvious descriptive terms.
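The matching rule, including taboo words, can be sketched in a few lines of Python. This is only an illustration of the idea as the article describes it, not von Ahn’s implementation: the function name `first_agreed_label`, the lowercase normalization, and the assumption that both players type at the same pace are all hypothetical simplifications.

```python
def first_agreed_label(guesses_a, guesses_b, taboo_words=()):
    """Return the first non-taboo word that both players have typed, or None.

    Assumption: each player's guesses arrive as an ordered list, and the two
    players type at the same pace (guess i of A, then guess i of B).
    """
    taboo = {w.strip().lower() for w in taboo_words}
    seen_a, seen_b = set(), set()
    for i in range(max(len(guesses_a), len(guesses_b))):
        for guesses, mine, theirs in (
            (guesses_a, seen_a, seen_b),
            (guesses_b, seen_b, seen_a),
        ):
            if i >= len(guesses):
                continue
            word = guesses[i].strip().lower()
            if not word or word in taboo:
                continue  # taboo words never count toward a match
            if word in theirs:
                return word  # both players have now typed this word
            mine.add(word)
    return None


label = first_agreed_label(
    ["dog", "puppy", "grass"],
    ["animal", "grass", "puppy"],
    taboo_words=["dog"],
)
print(label)
```

Because “dog” is taboo for this image, the players have to agree on a less obvious word, which is the point of the taboo list.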

“Depending on the image, we can easily end up with 30 words, on average,” von Ahn says. He also collects data on the frequency with which players type in different words—information that may be helpful for improving image searches. He expects those data to be valuable also to sociologists and other researchers.

Von Ahn suggests that the game could be varied, for example, by permitting players to choose what sorts of images they want to see. If someone is interested in cars, images of cars will appear more often. “If you see images of things that you’re interested in, you’ll probably be able to give better labels,” von Ahn says. Instead of simply describing a vehicle as a car, a player could go further to identify it as a specific model. It’s a way of harnessing expertise that players might have.

Bederson offers one suggestion for making the game more appealing. “I would much, much rather play with people I know,” Bederson says. “The gaming world has shown that games get much more engaging, and people spend much more time playing them and getting into them more deeply, when they have relationships with the people they’re playing.”

As currently configured, the ESP Game is a cooperative venture. “You can turn it into a competitive game as well,” von Ahn says, which may appeal to different types of people.

Where’s Waldo?

The ESP Game can’t determine where in an image an object is located. Such location information would be helpful for training and testing computer algorithms for recognizing objects.

To approach this problem, von Ahn came up with a game that he called Paintball, in which players shoot at objects in an image. “That was a flop,” he says. “It wasn’t fun.”

Peekaboom (www.peekaboom.org), which debuted in the summer of 2005, succeeds where Paintball failed. Two randomly paired players are assigned the roles of Peek and Boom. Peek starts with a blank screen, while Boom sees an image and a related word that had been assigned by players in the ESP Game. To provide a clue to Peek, Boom clicks somewhere on the image. Then, a small piece of the image appears at that location on Peek’s screen. Peek then types in a guess for the word. Boom can see Peek’s guesses, say whether Peek is hot or cold, and provide other hints. When Peek correctly identifies the word, the players switch roles and go on to the next image-word pair. The players continue for 4 minutes.

Rapid identifications lead to high scores, so Boom has an incentive to reveal only the areas of an image necessary for Peek to guess the given word. For example, if the word is “dog” and an image shows both a dog and a cat, Boom would reveal only the parts showing the dog. Over the course of many rounds, researchers end up with a sense of which pixels belong to which object in any given image.
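One way to picture how many rounds add up to location data is to count, for each pixel, how often it was revealed for a given word. The sketch below is an assumption-laden illustration, not the Peekaboom researchers’ method: the circular reveal area, the `radius` parameter, the vote threshold, and both function names are hypothetical.

```python
def reveal_counts(width, height, clicks, radius=2):
    """Count how many revealed circles covered each pixel.

    `clicks` is a list of (x, y) positions where Boom clicked, pooled over
    many rounds for the same image-word pair (assumed data layout).
    """
    counts = [[0] * width for _ in range(height)]
    for cx, cy in clicks:
        for y in range(max(0, cy - radius), min(height, cy + radius + 1)):
            for x in range(max(0, cx - radius), min(width, cx + radius + 1)):
                if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2:
                    counts[y][x] += 1
    return counts


def likely_object_pixels(counts, min_votes):
    """Return the (x, y) pixels revealed at least `min_votes` times."""
    return {
        (x, y)
        for y, row in enumerate(counts)
        for x, votes in enumerate(row)
        if votes >= min_votes
    }


# Two rounds clicked the center of a 5x5 image; one stray click hit a corner.
counts = reveal_counts(5, 5, [(2, 2), (2, 2), (0, 0)], radius=1)
print(sorted(likely_object_pixels(counts, min_votes=2)))
```

Requiring agreement across rounds filters out stray clicks, so only the region that several players independently revealed is kept.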

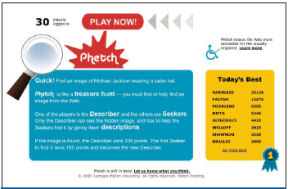

Von Ahn’s latest game to go live, Phetch (www.peekaboom.org/phetch), is an Internet scavenger hunt in which players look for images that fit certain descriptions. One player, called the narrator, types out a description of a picture randomly retrieved from a database containing 1 million Web images. Then, two to four other players, the seekers, use a built-in browser to find the image.

In each 5-minute round, the narrator receives points for each successful search and loses points if he or she decides to bypass an image that seems too difficult to describe. The first seeker to find the image receives points and becomes the narrator for the next image.

“It sounds like work,” von Ahn says, “but people seem to enjoy it.” In a week of testing, 130 Phetch players generated 1,400 captions. Players spent an average of 32 minutes with the game, but some played for up to 10 hours in a single session.

From the results of multiple games, researchers can select the best single caption for an image, determined by factors that include how quickly the image was retrieved. The intention is to provide captioned images to people who are visually impaired.

“You’re never going to get a paragraph that you would get out of Moby Dick,” von Ahn says. “The language that you get is similar to the language that you get out of instant messaging. But, at the end of the day, when you look at the caption, you get a really good idea of what’s in the image.”

Common sense

Von Ahn’s newest venture, now under development, is a game called Verbosity. It aims to build a database of common-sense facts—statements about the world that are known to and accepted by most people.

Researchers have long sought to collect common knowledge. In the Open Mind: Common Sense Project (openmind.media.mit.edu) at the Massachusetts Institute of Technology (MIT), for example, Internet users enter statements that they consider facts into a database of bits of information. Other activities include explaining why a statement is true, giving a cause-and-effect relationship, and paraphrasing a sentence.

The database currently holds more than 600,000 entries, linking many different objects, concepts, and actions. These entries may be used to train reasoning algorithms, which try to make inferences about the world. Scientists view such algorithms as a step toward making computer programs more intelligent.

In von Ahn’s game, one player gets a word and sends hints about it to the other player. The hints take the form of sentence templates with blanks. Suppose the word is “car” and the sentence template is, “It’s a type of _______.” The first player could then send the hint, “It’s a type of vehicle.” Another template might be, “You use this for _______.” The hints would constitute facts about cars.
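The template mechanism can be sketched as follows. The two template sentences come from the example above; everything else, including the relation keys `is_a` and `used_for`, the function names, and the tuple layout of a stored fact, is a hypothetical illustration rather than Verbosity’s actual design.

```python
# Sentence templates with one blank each. The keys are hypothetical labels.
TEMPLATES = {
    "is_a": "It's a type of {}.",
    "used_for": "You use this for {}.",
}


def make_hint(template_key, filler):
    """Fill the blank in a template to produce the hint the guesser sees."""
    return TEMPLATES[template_key].format(filler)


def record_fact(secret_word, template_key, filler):
    """Store the hint as a (word, relation, value) fact about the secret word."""
    return (secret_word, template_key, filler.strip().lower())


print(make_hint("is_a", "vehicle"))
print(record_fact("car", "is_a", "vehicle"))
```

Restricting players to fixed templates means every hint already has a known structure, so it can be stored as a fact without any parsing of free-form text.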

This game isn’t yet ready for prime time because it’s tough to come up with an appropriate set of sentence templates. “To be useful to us, they have to be unambiguous, and they’ve got to be fun,” von Ahn says.

Verbosity is a great idea, says Henry Lieberman, a member of the Commonsense Computing group at the MIT Media Lab. Von Ahn, Lieberman says, is “a very clever game designer.”

Junia Anacleto, who runs an Open Mind project in Brazil, recently created a game that uses knowledge in the MIT common-sense database to generate clues for a guessing game called “O Que É?” (“What Is It?”). Teachers can customize the game to focus on specific topics.

Inspired by von Ahn’s work, Dustin A. Smith, one of Lieberman’s students, designed a computer game called Common Consensus, based in part on the structure of a venerable television game show called Family Feud. This Web-based game collects and validates common-sense knowledge about everyday goals. For example, a question might ask, “What are some things that you would use to watch a movie?” The players would reply with a list of objects, such as a DVD player or an iPod. The more players who mention the same object, the more points they get.
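The scoring idea, in which agreement earns points, can be sketched like this. The article gives only the general rule, so the exact formula here is an assumption: each answer is worth the number of players who gave it, counting the player who typed it. The function names and the sample players are hypothetical.

```python
from collections import Counter


def normalize(answer):
    """Treat answers that differ only in case or spacing as the same."""
    return answer.strip().lower()


def score_round(answers_by_player):
    """Return (tally, scores) for one question.

    Assumed rule: an answer is worth as many points as the number of
    players who mentioned it, including the player being scored.
    """
    distinct = {
        player: {normalize(a) for a in answers}
        for player, answers in answers_by_player.items()
    }
    tally = Counter()
    for answers in distinct.values():
        tally.update(answers)
    scores = {
        player: sum(tally[a] for a in answers)
        for player, answers in distinct.items()
    }
    return tally, scores


tally, scores = score_round({
    "ann": ["DVD player", "iPod"],
    "bob": ["dvd player", "television"],
    "cy": ["DVD player"],
})
print(tally.most_common(1))
print(scores)
```

The tally doubles as validation: answers that many players give independently are more likely to be genuine common-sense knowledge.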

Art of fun

Some aspects of creating what von Ahn describes as “games with a purpose” are more art than science. There’s no simple formula for making something fun, for example.

“That’s something that still requires a lot of creativity,” von Ahn says. “The only way that we know that something is fun is to try it.” Moreover, people may not agree on what’s fun, and what’s fun today may not be fun tomorrow.

All von Ahn’s games have a time limit. “It makes players go faster, which is what I want,” von Ahn says. “It gives me more data.”

Von Ahn has also noticed that keeping the time short increases participation. Given a choice between 5- and 10-minute versions of a game, people play the 5-minute version more often, and more of them end up playing for longer than 10 minutes in total.

Cheating can bias or taint the results in which researchers are interested. “I worry about it a lot,” von Ahn says. “Before launching a game, I think very carefully about any way that I can imagine of cheating, and I come up with mechanisms to stop it.”

Bederson says that von Ahn “has done an admirable job at addressing these issues. I don’t think there’s any evidence that people have been able to subvert these systems.”

With all these factors to consider, developing a successful game can take as long as 18 months.

Von Ahn is developing a Web site that features only games in which players provide useful data for researchers. The most popular, well-known games would attract visitors, who might then be tempted to try other games.

Von Ahn sees applications of this sort of game beyond computer science and artificial intelligence. “It could be a new business model,” he suggests. “Rather than charging people to play your games, you let them play for free, and your business is the data that the games collect.”

Bederson is already looking toward games of the future. “The big challenge is how to scale this approach up to more-complex problems,” he says. Examples of such problems include summarizing or explaining literature, providing services in ways that meet an individual’s particular needs, or handling situations in which the truth isn’t known—all tasks that require human judgment.

Fun isn’t the only way to tap human brainpower. “People want to help the world, and they typically don’t know how,” Bederson says. “They’re often willing to do really hard things if they have legitimate reasons to think that they are doing good in the world.”

Imagine what people might be willing to tackle through a combination of entertainment and personal fulfillment.