Interview: Murray Gell-Mann

Special online feature

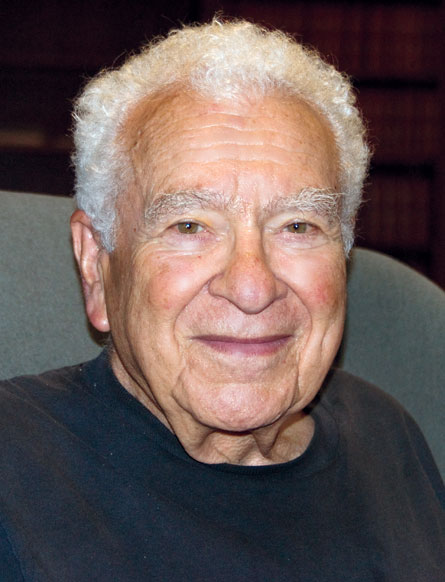

Shortly before his 80th birthday, on September 15, the physics Nobel laureate Murray Gell-Mann spoke with Science News Editor in Chief Tom Siegfried about his views on the current situation in particle physics and the interests he continues to pursue in other realms of science. Gell-Mann is best known for introducing the concept of quarks, the building blocks of protons, neutrons and other particles that interact under the influence of the strong nuclear force (SN: 9/12/09, p. 24). After many years as a professor of physics at Caltech, Gell-Mann moved in the mid-1980s to New Mexico as one of the founding members of the Santa Fe Institute, where he continues his research today.

You say in your book [The Quark and the Jaguar, 1994] that there is no evidence for any substructure of quarks. Is that still the case?

There’s no evidence for any substructure. There might be. It’s possible, but there’s no evidence for it.

Would you be surprised if somebody came up with a scheme for substructure that worked?

If it worked, it would work. It would be based on some evidence, but as far as I know right now there isn’t any evidence. There are parallels between the leptons and the quarks: very, very strong parallels. Three families in both cases. So it seems likely that if the quarks were someday to be proved composite, so would the electron, the neutrino. And there’s certainly no evidence for that. So far nothing has pointed in that direction of another layer of constituents underlying the quarks. Nothing points to that. But you can’t rule it out completely, of course. We know that the present theory, the standard model, is a low-energy approximation of some kind to a future theory, and who knows what will happen with a future theory? But at the moment nothing seems to point to composite quarks or composite leptons.

Do you still believe superstring theory is likely to be a profitable approach to making progress in particle physics?

I think it’s promising. I still think it’s promising, for the same reason. I didn’t work on string theory itself, although I did play a role in the prehistory of string theory. I was a sort of patron of string theory — as a conservationist I set up a nature reserve for endangered superstring theorists at Caltech, and from 1972 to 1984 a lot of the work in string theory was done there. John Schwarz and Pierre Ramond, both of them contributed to the original idea of superstrings, and many other brilliant physicists like Joël Scherk and Michael Green, they all worked with John Schwarz and produced all sorts of very important ideas. One of those changed radically during those years. Originally it was thought that string theory and superstring theory might lead to the correct theory of hadrons, strongly interacting particles. Particles that are connected in some way with the nuclear force. But there was a serious problem there, because superstring theory predicted a particle with zero mass, zero rest mass if you want to call it that, and spin 2, a spin of 2 quantum units. Well, no such hadron was known and it was pretty clear that there was no good way to fit it in. But then the suggestion was made in our group, and maybe elsewhere as well, that we’d been looking at the theory wrong — it was actually a theory of all the particles and all the forces of nature. And that meant changing the coupling strengths from a strong coupling to the very, very, very weak coupling of gravity. So that meant a factor of 10³⁸ in the scale of the theory. The natural scale of the theory had to be altered by a factor of 10³⁸. That’s a very considerable change. But doing that, we could interpret the particle of spin 2 and mass zero — it was the graviton, it was the quantum of gravitation, required if you’re going to have a gravity theory, for example Einstein’s gravity theory, which is the best one so far, and quantum mechanics. So the whole theory was reoriented then, toward being connected at least, with the long-sought unified theory of all the particles and all the forces.

Then it was found that if we did perturbation theory in the gravitational direction, we found that the radiative corrections in the theory were finite. Whereas all the other attempts to quantize Einstein’s general relativistic theory of gravitation led to huge infinities in the radiative correction terms. Very undesirable. But at least in the orders investigated, superstring theory as a basis for gravitation led to finite results in perturbation theory. Very striking. That still influences me to this day to believe that possibly superstring theory has something to do with the long-sought unified theory of all the forces and all the particles. Even though for decades people have been trying to do that, to construct that ultimate theory and so far there’ve been obstacles.

I am puzzled by what seems to me the paucity of effort to find the underlying principle of a superstring theory-based unified theory. Einstein didn’t just cobble together his general relativistic theory of gravitation. Instead he found the principle, which was general relativity, general invariance under change of coordinate system. Very deep result. And all that was necessary then to write down the equation was to contact Einstein’s classmate Marcel Grossmann, who knew about Riemannian geometry, and ask him what was the equation, and he gave Einstein the formula. Once you find the principle, the theory is not that far behind. And that principle is in some sense a symmetry principle always.

Well, why isn’t there more effort on the part of theorists in this field to uncover that principle? Also, back in the days when the superstring theory was thought to be connected with hadrons rather than all the particles and all the forces, back in that day the underlying theory for hadrons was thought to be capable of being formulated as a bootstrap theory, where all the hadrons were made up of one another in a self-consistent bootstrap scheme. And that’s where superstring theory originated, in that bootstrap situation. Well, why not investigate that further? Why not look further into the notion of the bootstrap and see if there is some sort of modern symmetry principle that would underlie the superstring-based theory of all the forces and all the particles? Some modern equivalent of the bootstrap idea, perhaps related to something that they call modular invariance. Whenever I talk with wonderful brilliant people who work on this stuff, I ask why don’t you look more at the bootstrap and why don’t you look more at the underlying principle. . . .

What else is going on today that interests you in particle physics?

Well, there are some very deep mysteries. One mystery has to do with the cosmological constant. You’ll remember the history of the cosmological constant is very checkered. Einstein believed that the universe was static. But in his theory of gravitation, where gravitational energy gravitates and so on — the phenomenon that gives rise to black holes — in that theory, of course, things tend to collapse. Things gravitate, attract one another, and that attractive energy gravitates and so on, and things have a tendency then to fall together and form black holes. Something has to resist all this attraction. So Einstein saw that in his equation for gravitation, his general relativistic theory of gravitation, you could put in another term, which amounted to a repulsive interaction that’s constant in space and time. And that repulsion energy, if you like, is called the cosmological constant. In quantum mechanics, if you have a quantum theory of gravitation, this would be the energy density of the vacuum. The vacuum in quantum field theory is not empty as you know, it’s an important battlefield, and it has a certain average energy density in the vacuum. This is a very important constant in the theory, and what it does physically is this: Let’s go back to Einstein’s ideas about the static universe. He introduced the cosmological term with a certain strength, a cosmological constant with a certain strength to cancel out the attractive energy of everything attracting everything by gravitation and allowed the universe to be static. But then he began hearing about how in Pasadena, California, Edwin Hubble was discovering that the clusters of galaxies were receding from one another. So the universe wasn’t static at all, it was expanding. 
Little objects around us aren’t expanding, the solar system isn’t expanding, our galaxy isn’t expanding, our cluster of galaxies isn’t expanding, but the clusters of galaxies are receding from one another and in that sense the universe is expanding. And then you don’t need this cosmological term, necessarily, because you can have a lot of attraction, but you say that things started out receding, the clusters of galaxies receding from one another, the universe is expanding, and then what gravity does is simply to slow down that expansion more and more and more and more and then possibly reverse it eventually — in the far, far, far distant future — might reverse it and cause the universe to recollapse, possibly.

However, as far as anyone could tell the value zero for the cosmological constant would fit the facts, and Einstein said, ‘oh, that’s probably what happens, it’s zero, and I’ve done a terrible thing introducing this ugly constant into my beautiful theory. It’s actually zero, my original equation was right, we don’t need this term, the expansion of the universe has made it unnecessary to have this term, take it away.’ But to somebody trained in quantum mechanics and quantum field theory, and Einstein, as you know, was not a partisan of quantum field theory, but for somebody trained in quantum field theory this is a funny kind of answer. Because what is it? It’s the energy density of the vacuum, and there’s no special reason for that to be zero. If supersymmetry were exact, then the cosmological constant would really be zero by virtue of that symmetry principle. Supersymmetry may be correct, but it’s not exact. It’s a violated symmetry, and other than those conditions we wouldn’t understand why the cosmological constant would want to be zero. There’s no reason for that. If you’re a quantum mechanical person there’s no reason for it.

Well that’s how things stood until recently. Recently, however, they have measured what’s happening to the expansion of the universe over time, and it seems to be accelerating. So there is a cosmological constant, probably, after all …, responsible for this acceleration of the expansion of the universe. Now with that going on, the possibility of recollapse in the future sort of recedes — we probably aren’t involved with that anymore. Instead we have an accelerating expansion of the universe….

But now we get to the mystery. What kind of a magnitude does this constant have? Well, it’s enough to give very appreciable acceleration to the expansion of the universe, but now let’s put it in natural units. Put Planck’s constant divided by 2 pi equal to 1, the velocity of light equal to 1, and the gravitational constant equal to 1, and we have natural units. In those units this thing comes out tiny, 10⁻¹¹⁸ — that’s the biggest fudge factor in the history of science. 10¹¹⁸ times too small to have a value like 1 in natural units. It must be the exponential of something, of course, but still it’s quite impressive, it’s quite a large amount. So why is it like that? Why is it so tiny? Nobody knows.

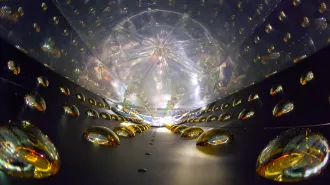

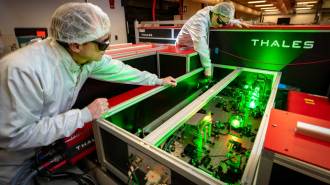

It’s the biggest mystery. But it’s connected with the violation of supersymmetry. And connected with the whole idea of supersymmetry. And of course if you’re going to have superstrings you have to have supersymmetry. It would be simplest if supersymmetry were an exact symmetry, but we know it isn’t. Observationally, it can’t be. It’s a broken symmetry. And that’s the second mystery. How is it broken? One doesn’t really know that. Then the implications for experiment at the new accelerator near Geneva, Switzerland, the Large Hadron Collider, if they ever get it working, there will be searches for superparticles, because, as in superstring theory, supersymmetry requires that for every known particle there’s a superpartner, and that partner particle has the opposite statistics. In other words, if the particle obeys the exclusion principle the way the electron does, then the selectron, the superpartner of the electron, has to obey the antiexclusion principle. Such particles love to be in the same state at the same time, like the photon. That’s the basis of the laser — the basis of the laser is that photons love to be in the same state at the same time, and in a laser beam they are. So we have this broken symmetry. We don’t know how it’s broken, but if there’s to be any relevance to superstring theory we need to have this broken supersymmetry. And those particles have to exist.

And those superpartners play another role. There is a difficulty with the quantum field theory of the particles we observe in the standard model, and that is that there are a lot of mass ratios and coupling constant ratios that are very large, or very small, depending on how you look at them. And such large or small values not near 1 are unstable under radiative corrections. When you look at the higher order corrections in perturbation theory, such large or small ratios tend to move back toward 1 by virtue of the corrections. But that’s not true if you have supersymmetry. In fact, if you have exact symmetry there’s no such problem at all. With broken supersymmetry there’s not such a problem, provided it’s not broken too badly. In other words, the superpartners must not be too heavy. If they are too heavy compared to the regular particles that we know, then they don’t rescue the quantum field theory from this instability of large or small ratios. Some people call it the hierarchy problem, which bothers me — hierarchy comes from the Greek words for sacred rule, it refers to church government or something like that, the various links in the government of the church. I don’t know why one would call it that, but anyway I call it the instability of large or small ratios.

Is the implication that the superpartners cannot be so massive that you couldn’t detect them with LHC?

That’s the idea I’m getting to. It would be hard for them to be so massive that they couldn’t discover them at a properly constructed accelerator. Unfortunately, we lost the Texas accelerator [the Superconducting Super Collider, canceled in 1993], which would have been of suitably high energy probably, and we have to make do with the Large Hadron Collider, which has particles of a lower energy. And it’s harder, then, to discover superpartners, because you need to be above the threshold, the mc squared, for these particles. And the situation is made even worse by the recent accident at CERN, where it looks as if they will have to run at half energy for some years because of some problems they’ve encountered.… So we had first the decrement in energy for going from Texas to Switzerland, and then we had a further decrement in energy from this unfortunate accident at the machine, so I don’t know now whether we can really say they should find the superpartners, but let’s hope they do. If they do we will be a step ahead in every respect.

If the LHC gets to its highest energy and still finds no superpartner particles, does everybody go back to the drawing board?

Well, we’d have to see exactly how bad it is. I mean how high up you go and still don’t find anything and so on. But yes, one might have to discard this whole line of reasoning.

Do you think that is likely?

I think it’s likely that broken supersymmetry will exist, that we will eventually understand the mechanism by which the supersymmetry is broken, and we’ll understand why the cosmological constant has the value that it does, assuming that we’re actually seeing a cosmological constant. I would guess all of those things, and I would guess superstring theory has some relevance to the search for the underlying unified theory. Exactly what relevance it has, we don’t know.

What other realms of science are you most interested in today?

The thing I’m most excited about is in linguistics. For some reason in this country and in Western Europe, most tenured professors of historical and comparative linguistics hate the idea of distant relationships among human languages, or at least the idea that those can be demonstrated. And they set up extraordinary barriers to doing so, by making anyone who thinks that languages are related, what they call ‘genetically related’ in linguistics — it has nothing to do with biological genetics — in other words, one language descended from another and so on, so you get a sort of tree. They put a tremendous burden of proof on anyone who wants to say that languages are related in this way, by this common descent. And in that way I believe they are throwing away a huge body of very interesting and convincing evidence. And sometimes they come up with pseudomathematical arguments for throwing away these relationships. It’s all a matter of putting a huge burden of proof on anyone who wants to say the two things are related. If you can, find some ‘squeeze’ out of it by some kind of far-fetched explanation involving chance or the borrowing of words or the borrowing of grammatical particles, so-called morphemes. If you can find some outlandish explanation involving chance and borrowing, then you throw out the idea of genetic relationship and so on.…

But the evidence is actually pretty convincing for quite distant relationships. And in this collaboration between our group at the Santa Fe Institute and the group in Moscow, both consisting mostly of Russian linguists — because in Russia these ideas are not considered crazy — in that collaboration we seem to be finding more and more evidence that might point ultimately to the following rather interesting proposition: that a very large fraction of the world’s languages, although probably not all, are descended from one spoken quite recently…, something like 15 to 20,000 years ago. Now it’s very hard to believe that the first human language, modern human language, goes back only that far. For example, 45,000 years ago we can already see cave paintings in Western Europe and engravings and statues and dance steps in the clay of the caves in Western Europe, and we can see some quite interesting advanced developments in southern Africa that are even earlier, like 70 or 75,000 years ago or something like that. It’s very difficult for some of us to believe that human language doesn’t go back at least that far. So I don’t think that we’re talking about the origin of human language — I would guess that that’s much older. But what we’re talking about is what you can call a bottleneck effect, very familiar in linguistics….

And we see that with Indo-European, which spread widely over such a tremendous area; we see it with Bantu, which spread over a big fraction of the southern two-thirds of Africa. We see it with the spread of Australian. The Australian languages seem to be related to one another. Although there were people in Australia 45,000 years ago, it doesn’t look as if the ancestral language of the Australian languages could go back more than 10 or 12,000 years, maybe even considerably less. So there was a huge spread also. I personally suspect that it was some group from a certain area in New Guinea that we can point to that gave the language ancestral to the Australian languages. Communication between Australia and New Guinea was easier then.

Anyway, since these things are so common in history, in the history of language, why not believe that a big fraction of the world’s languages all go back to a single one, relatively recently? Anyway, we’re accumulating more and more and more evidence. Eventually I think everybody will be convinced that these relationships really exist. In the meantime we’re fighting one of these battles.

Battles of new ideas against conventional wisdom are common in science, aren’t they?

It’s very interesting how these certain negative principles get embedded in science sometimes. Most challenges to scientific orthodoxy are wrong. A lot of them are crank. But it happens from time to time that a challenge to scientific orthodoxy is actually right. And the people who make that challenge face a terrible situation. Getting heard, getting believed, getting taken seriously and so on. And I’ve lived through a lot of those, some of them with my own work, but also with other people’s very important work. Let’s take continental drift, for example. American geologists were absolutely convinced, almost all of them, that continental drift was rubbish. The reason is that the mechanisms that were put forward for it were unsatisfactory. But that’s no reason to disregard a phenomenon. Because the theories people have put forward about the phenomenon are unsatisfactory, that doesn’t mean the phenomenon doesn’t exist. But that’s what most American geologists did until finally their noses were rubbed in continental drift in 1962, ’63 and so on when they found the stripes in the mid-ocean, and so it was perfectly clear that there had to be continental drift, and it was associated then with a model that people could believe, namely plate tectonics. But the phenomenon was still there. It was there before plate tectonics. The fact that they hadn’t found the mechanism didn’t mean the phenomenon wasn’t there. Continental drift was actually real. And evidence was accumulating for it. At Caltech the physicists imported Teddy Bullard to talk about his work and Patrick Blackett to talk about his work, these had to do with paleoclimate evidence for continental drift and paleomagnetism evidence for continental drift. And as that evidence accumulated, the American geologists voted more and more strongly for the idea that continental drift didn’t exist. The more the evidence was there, the less they believed it. 
Finally in 1962 and 1963 they had to accept it and they accepted it along with a successful model presented by plate tectonics….

Another example is Fred Hoyle persuading a lot of people that there was no early universe. He had this idea of continuous creation of matter stabilizing the universe, so even though it was expanding it was still stable — because material was constantly being fed in by creation, a creation mechanism. So there was no early universe. Well, my colleagues at Caltech were very much involved with finding out where the chemical elements were generated, and Fred Hoyle’s idea, which they accepted completely, forced them to study the generation of chemical elements in the stars. A beautiful piece of work. However, it also misled them because when it came to the very light elements, nucleogenesis in the stars didn’t work. For the light elements they didn’t know what to do. They asked me. I said, well, George Gamow has explained the generation of the light elements, they were generated in the early universe, and they were. And they said, ‘oh, no, Fred Hoyle has taught us that there was no early universe.’ And they just wouldn’t give on this. Willy Fowler, who won the Swedish [Nobel] prize for this kind of work, later actually acknowledged that he asked me and that I had been right. I was very pleased by that. He said he was sorry that he took Fred Hoyle so seriously. But I couldn’t talk him out of it at the time….

It’s not the rule. I have to emphasize, this is not the rule. But it happens from time to time. Mostly challenges to scientific orthodoxy are wrong. But not always…. In my work — the quarks, a lot of people thought the quarks were a crank idea. Well, it violated three fundamental principles in which a lot of people believed. One of them was that the neutron and proton were elementary, they were not composed of anything simpler. There couldn’t be particles that were permanently trapped inside observable things like the neutron and proton. That was a crazy idea, they thought, and of course the quarks have that, probably. And finally, the idea of particles with fractional charges, fractional electric charges in units of the proton charge. That was considered to be a crank idea, too. So the quarks had three strikes against them, from these three principles, all wrong of course. It keeps happening over and over again. Now I believe that this situation in linguistics is a similar situation, that the establishment in this country and in Western Europe, most of these people, by no means all, but most of them, are just convinced that these distant relationships are wrong and they place this enormous burden of proof on anyone who wants to see distant genetic relationships among languages, and all the evidence is just thrown away. The evidence is very persuasive, actually, already, but it’s getting much more persuasive. The more the work continues, the more things keep pointing, and they keep pointing toward superfamilies composed of the known families, the acknowledged families, and then super superfamilies, and then finally one single super-super-superfamily that covers a very big fraction of the world’s languages. Provisionally we call that Borean. Borean is not such a good name anymore, because it has to do with the north wind, Boreas. It’s no longer so northern, this ancestral tongue. The languages that descend from it include a lot that are found very far south. 
Still, though, we might as well continue to use this provisional word Borean, and it will just be one of many bad, bad names for things.