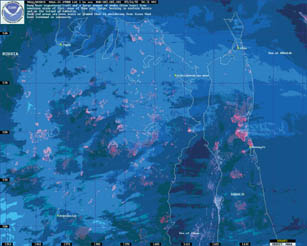

Last year’s wildfire season, one of the worst in the past half-century, didn’t waste any time getting started.

On Jan. 1, 2000, Florida chalked up the year’s first fire–a small blaze that was under control before it could spread more than an acre. Come late summer, firefighters were faced with a string of hot, dry days in which hundreds of thousands of acres were ablaze across more than a dozen states. Fire fighting resources were stretched to their limit. At the peak of the season, more than 500 new wildfires broke out each day.

The National Interagency Fire Center in Boise, Idaho, estimates the cost of suppressing fires in the United States last year at more than $1.6 billion, a figure that surpassed the amount spent in the previous 3 years combined. Despite the efforts of firefighters, including volunteers from other nations and military units assigned to battle blazes, more than 850 homes and other structures were lost. As people move out of cities and into rural areas susceptible to wildfire, such losses are likely to grow.

Some researchers are developing ways to better forecast regional fire risk, while others are constructing detailed computer models to simulate the behavior of a fire once it’s been ignited. The collective aim: to decrease the devastation to people and property wrought by fire and simultaneously trim the taxpayer’s fire fighting bill.

Staggering statistics

The statistics are staggering. More than 92,000 fires scorched a total of about 7.4 million acres in the United States last year. A number of factors coincided to fan the flames.

Snow cover across the United States in the spring, which followed North America’s warmest winter on record (SN: 8/5/00, p. 87), was much less extensive than normal. This helped dry out living trees and underbrush, as well as the dead needles, leaves, and limbs on the ground beneath the trees. A waning La Niña, in which sea-surface temperatures along the equatorial Pacific Ocean are cooler than normal, brought hot, dry weather to much of the United States. The resulting powder keg exploded when lightning from summer thunderstorms provided the sparks.

Although unusual for recent times, last year’s statistics don’t approach the long-term averages tallied during the early part of the last century. Each year between 1919 and 1949, more than 142,000 fires claimed more than 29 million acres. During the past 40 years, those yearly averages dropped to about 136,000 fires and 3.9 million acres burned. Despite the fall in the fire-swept acreage, the blazes’ economic consequences have been increasing.

Spurred by last year’s spate of fires, a panel assembled at the National Academy of Sciences (NAS) in Washington, D.C., last month to discuss a type of fire called the intermix fire. It looms as one of the most vexing problems on the national fire scene. Unintended consequences of suburban sprawl, intermix fires occur where subdivisions or clusters of individual homes encroach upon realms naturally prone to wildfires.

Although intermix fires have become a focus of recent attention, the phenomenon isn’t new, says Stephen J. Pyne, a biologist at Arizona State University in Tempe and a member of last month’s NAS discussion.

A century ago, he notes, new residents of U.S. rural regions were susceptible to wildfires because they were moving into tinder-rich areas yet to be cleared for agriculture. Today, urbanites who are recolonizing the rural landscape without modifying the land find themselves in a similar situation. Because they typically don’t cut, weed, graze, or prune more than a small area surrounding their homes, they live within the natural buildup of combustible material.

Plopping wood-frame homes down near, or even in the midst of, such a tinderbox is simply asking for trouble. “The wild and the urban next to each other are like matter and antimatter, needing only a spark to set them off,” Pyne says.

One technique used to reduce the risk to people and property in such fuel-rich environments is the so-called prescribed fire. In theory, these fires are a preemptive strike to remove flammable material that could otherwise fuel uncontrollable blazes.

But in practice, prescribed fires don’t always succeed. One of last year’s most damaging fires, a 46,000-acre conflagration that led to the evacuation of Los Alamos, N.M., and burned more than 200 structures there, started out as a prescribed fire (SN: 5/20/00, p. 324).

Although prescribed fires do serve their purpose in specific areas, William A. Patterson III, a fire scientist at the University of Massachusetts in Amherst and another panel member, questions their ultimate effectiveness when considering the landscape as a whole. “There’s going to be more fires, and there’s nothing we can do about it,” he says.

Patterson teaches fire managers and others how to conduct prescribed fires, but he says their potential benefits are limited. These deliberately set fires substitute for only 10 percent of the fires that an ecosystem normally experiences, and Patterson holds that prescribed fires’ main benefit is to train people how to fight wildfires.

Furthermore, he notes, prescribed fires have a stigma like that attached to landfills, airports, and power stations: Many people acknowledge the need but don’t want them close to home.

“Even people who understand the need for prescribed burning don’t like it if it interferes with their trip to the Grand Canyon or their daughter’s outdoor wedding, or if the one fire that’s set to protect them actually gets away and burns their home,” Patterson says.

Minimizing damage

So, how do we protect people if they insist on moving out into fire country and placing themselves in harm’s way? Researchers believe one way to minimize the damage is to get better at predicting the risk of fire.

For example, correlations between aspects of climate and the size and number of fires might help scientists project the probability of fire in a certain region several weeks to several months in advance. Among other benefits, such information could help determine well in advance where firefighters and equipment will be needed.

Some of the downward trend in numbers of wildfires across the decades may stem from changes in population distribution and fire fighting policy. Analysis of lake sediments in the American West, however, points to another possible driver behind these trends: climate change.

Cathy Whitlock, a geographer at the University of Oregon in Eugene, has studied sediment cores taken from lakes in the Pacific Northwest and the northern Rocky Mountains. By counting the layers of charcoal particles trapped in each centimeter-thick section of the cores, Whitlock and her colleagues can estimate the frequency of major fires in the area surrounding the lake. Typically, only fires that roar through a forest and kill all trees, known as stand-replacement fires, are intense enough to widely distribute the 100-micron particles of charcoal that Whitlock seeks.

Whitlock is careful to avoid experimental pitfalls. For example, by taking cores exclusively from small, deep lakes, the researchers get a complete record of sediments from bodies of water that would not have completely dried up during an extended drought. Also, by choosing lakes that aren’t stream-fed, the scientists count only the charcoal that drops directly onto the lake or washes down from nearby hills.

Although the frequency of fires varies from place to place, Whitlock says the overall trends are consistent. Yellowstone National Park provides a good example, she notes. Cores taken from the park’s Cygnet Lake in Wyoming reveal that major fires don’t occur at regular intervals.

When Whitlock looked at the averages, however, a pattern emerged. During the late stages of the last ice age, from about 17,000 to 11,000 years ago, there were four major fires every 1,000 years or so. In a subsequent warmer and drier period, between 11,000 and 7,000 years ago, more than 10 big fires occurred every millennium. In the past 2,000 years, fewer than three large charcoal-forming blazes have occurred per millennium.
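The averaging step can be illustrated with a minimal sketch. It assumes each charcoal layer in a core has already been assigned an age in years before present; the function name and the list of ages are hypothetical and are not data from Cygnet Lake or any other core.

```python
def fires_per_millennium(peak_ages_yr_bp, older_bound, younger_bound):
    """Average number of dated charcoal peaks per 1,000 years in an age window.

    Ages are in years before present, so older_bound > younger_bound.
    """
    if older_bound <= younger_bound:
        raise ValueError("older_bound must be greater than younger_bound")
    # Count peaks whose age falls inside the window [younger_bound, older_bound).
    count = sum(1 for age in peak_ages_yr_bp if younger_bound <= age < older_bound)
    window_in_millennia = (older_bound - younger_bound) / 1000.0
    return count / window_in_millennia


# Hypothetical peak ages, invented purely for illustration.
example_peaks = [300, 1200, 7200, 7500, 7900, 8400, 8800, 9300, 9900, 10400,
                 12000, 13500, 15000, 16500]

for older, younger in [(17000, 11000), (11000, 7000), (2000, 0)]:
    rate = fires_per_millennium(example_peaks, older, younger)
    print(f"{older} to {younger} years ago: {rate:.2f} fires per millennium")
```

Comparing the rate across windows, rather than looking at the spacing of individual peaks, is what lets an irregular fire record be set against slow changes in climate.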

Combine these findings with climate data, and something telling emerges, says Whitlock. The trends in fire frequency in a particular region closely track year-to-year and decade-to-decade variations of precipitation. Major fires tend to occur more often during periods of low precipitation.

These long-term trends, discovered from the lake sediments, are backed up by government data compiled over the past 20 years. A research team led by Anthony L. Westerling, a climate researcher at the Scripps Institution of Oceanography in La Jolla, Calif., analyzed data collected by several of the government agencies responsible for monitoring fires. These include the Bureau of Land Management, the Department of Agriculture’s Forest Service, and the Bureau of Indian Affairs.

Westerling’s team considered both straightforward factors, such as the precipitation that a region received, and more complicated parameters, such as the Palmer Drought Severity Index (PDSI). This measure combines precipitation, temperature, soil-moisture conditions, and other factors. The PDSI is positive when soil moisture is above normal for a particular area, and it’s negative when the soil is drier than normal.

In the dry shrubby areas and grasslands in Nevada and in central Oregon and Washington, the researchers found that fire risk at the peak of fire season is only weakly related to current climate conditions. It’s more strongly linked to conditions 10 to 18 months before, they note.

In California’s Sierra Nevada range, the fire season is generally more severe when the PDSI was positive the summer before than when the PDSI was negative. Also, as expected, there’s a higher fire risk when the current PDSI is negative than when it’s positive. Westerling suggests an explanation: Wet conditions in a region during the previous year contribute to an accumulation of fuel, while dry conditions in the current year increase risk of fires.
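The two-part pattern described for the Sierra Nevada can be written as a simple rule of thumb. The sketch below is only a qualitative illustration of that pattern: the function name, the three labels, and the use of zero as the dividing line are assumptions made here, not the statistical analysis that Westerling's team performed.

```python
def fire_season_outlook(previous_summer_pdsi: float, current_pdsi: float) -> str:
    """Qualitative outlook from the sign of the PDSI in two periods.

    Positive PDSI means wetter soil than normal; negative means drier.
    """
    fuel_built_up = previous_summer_pdsi > 0  # wet prior summer: more vegetation to burn
    fuel_is_dry = current_pdsi < 0            # dry current season: fuel ignites readily
    if fuel_built_up and fuel_is_dry:
        return "elevated"
    if fuel_built_up or fuel_is_dry:
        return "moderate"
    return "lower"


# Hypothetical index values, chosen only to exercise each branch.
print(fire_season_outlook(2.0, -3.0))   # wet last summer, dry now
print(fire_season_outlook(-1.0, -2.0))  # dry both years
print(fire_season_outlook(-1.0, 1.5))   # dry last summer, wet now
```

The point of the two inputs is that the lagged and current conditions pull in opposite directions: moisture helps fuel accumulate one year and suppresses fire the next.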

Computer model predictions

Predictions such as these can be supplemented with computer models that forecast the spread of a wildfire once it’s ignited. At Los Alamos (N.M.) National Laboratory, Jon M. Reisner and his colleagues are refining a simulation that combines chemical and physical descriptions of fire behavior with computational fluid dynamics–the techniques used to model the flow of air around aircraft and missiles.

To simulate an environment, the programmers assemble computerized cubic elements that are 1 meter on a side. For example, a tall stack of single elements made of wood could simulate a tree, and large clumps of elements might represent buildings.

Other factors that influence fire behavior—such as temperature, wind speed, the moisture content of fuel, and the presence of clouds—can be included, too, Reisner says. As elements burn and produce heat, the simulation’s complicated aerodynamic equations help show how fast and in which directions the fire will spread to other simulation elements.
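The idea of a landscape built from discrete elements, each of which can ignite its neighbors, can be shown with a toy example. This is emphatically not the Los Alamos simulation: it is a flat, two-dimensional grid with no aerodynamics, heat, wind, or moisture, and every name in it is made up for illustration. Each burning cell simply ignites adjacent fuel cells and then burns out.

```python
EMPTY, FUEL, BURNING, BURNED = ".", "T", "*", "x"
NEIGHBORS = ((1, 0), (-1, 0), (0, 1), (0, -1))


def step(grid):
    """Advance the toy fire by one time step and return the new grid."""
    rows, cols = len(grid), len(grid[0])
    new_grid = [row[:] for row in grid]
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != BURNING:
                continue
            new_grid[r][c] = BURNED  # a burning cell is spent after one step
            for dr, dc in NEIGHBORS:
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == FUEL:
                    new_grid[nr][nc] = BURNING  # fire spreads to adjacent fuel
    return new_grid


def burn_out(grid):
    """Step the grid until nothing is burning; return the final grid and step count."""
    steps = 0
    while any(BURNING in row for row in grid):
        grid = step(grid)
        steps += 1
    return grid, steps


# One ignition point on the left; the empty column acts as a firebreak.
start = [list(row) for row in ("TT.T", "*T.T", "TT.T")]
final, steps = burn_out(start)
print("\n".join("".join(row) for row in final))
print("steps:", steps)
```

Even in this stripped-down form, the fuel to the right of the gap survives, which hints at why the arrangement of elements matters as much as the weather in a full simulation.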

The Los Alamos researchers have successfully modeled several real fires, Reisner says—including, after the fact, portions of the one that led to the evacuation of their own lab last May.

While computer programs like this one could provide an early warning for communities at risk from a known fire or help firefighters develop a strategy for battling a blaze, the models might be most useful for determining when and where it’s prudent to light a prescribed fire.

As the Los Alamos calamity illustrates, fire experts could use such help.

Warmth from a distant fire

While it’s obvious that climatic conditions can affect the extent and frequency of wildfire, the converse is true as well. As major wildfires burn, they send huge amounts of carbon dioxide and other planet-warming greenhouse gases into the atmosphere. Fires can rapidly oxidize the carbon slowly sequestered by vegetation from atmospheric carbon dioxide and send it right back into the air.

Trees in the United States stockpile about 288 million tons of carbon dioxide each year. However, the U.S. Forest Service estimates that last year’s wildfires emitted about 100 million tons of the gas, more than a third of a typical year’s sequestration (SN: 12/16/00, p. 396).

Although media attention last year focused on conflagrations in the western states, far beyond their northern horizon even larger fires raged almost unnoticed.

In a typical year, the boreal forests that stretch across Alaska and Canada lose about three times the acreage that’s lost in the lower 48 states. For example, the 351 fires that raced across portions of Alaska last year amounted to less than one-half of 1 percent of the nation’s total number of fires. But they blackened in excess of 750,000 acres, accounting for more than 10 percent of the nation’s fire-stricken land.
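Alaska's shares can be checked against the national totals quoted earlier in the article. The short calculation below uses only those rounded figures, so the results are approximate.

```python
# Figures quoted in the article for the United States last year (rounded).
total_fires = 92_000      # "more than 92,000 fires"
total_acres = 7_400_000   # "about 7.4 million acres"
alaska_fires = 351
alaska_acres = 750_000    # "in excess of 750,000 acres"

fire_share = alaska_fires / total_fires
acre_share = alaska_acres / total_acres

print(f"Alaska share of fires: {fire_share:.2%}")
print(f"Alaska share of acres: {acre_share:.1%}")
```

The contrast between the two shares, a small fraction of 1 percent of the fires but roughly a tenth of the acreage, reflects how much larger the average boreal fire is.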

In a fire-heavy year, fires in the boreal forests of North America, Russia, and Mongolia contribute up to 10 percent of the carbon dioxide returned to the atmosphere from fires worldwide, says fire ecologist Eric S. Kasischke of the University of Maryland in College Park. This is no small amount, he told an audience at the American Geophysical Union annual meeting in San Francisco last December. In North America, from May to August in a typical year, the quantity of fire-spawned carbon dioxide equals fully 50 percent of the amount of the gas spewing from vehicle tailpipes, he says. The full scope of the fire problem in Russia is unknown.

Kasischke notes that about a third of the boreal forest in Siberia isn’t protected against fire or monitored because the region is so sparsely populated.

Boreal forest fires can affect Earth’s climate in other ways, too. Many of these ecosystems grow in a thin, seasonally thawed surface atop permanently frozen soil. Layers of lichens grow among the needles, leaves, and branches that have fallen from the trees to the ground. This thick mat of organic matter, or duff, acts just like a layer of thermal insulation, says Kasischke.

During intense blazes, about half the carbon burned comes from the duff. After this layer burns away, the exposed soil—no longer shaded by trees, because they’ve burned, too—can absorb sunlight, warm up, and dry out. This increases the oxygen in the soil, which oxidizes the carbon sequestered there and returns it to the atmosphere.

In areas where the climate has warmed considerably since the last fire, the permafrost may never recover, Kasischke says. In such cases, a different set of species can move in to replace those burned out. These species often sequester carbon less effectively than the earlier residents did.

The long-term effects of such ecosystem shifts may aggravate global warming, says Kasischke.