Science fiction fans know what a 3-D display ought to look like.

The film Forbidden Planet showed them more than half a century ago. On a distant world once inhabited by an advanced alien civilization, human scientist Dr. Morbius discovers a table that can create holographic videos. He calls up a ghostly projection of his daughter that’s smaller than but otherwise identical to the girl herself.

“Aladdin’s lamp in a physics laboratory,” says an awed spacefarer peering over Morbius’ shoulder.

Compared with this Krell technology, the magic of today’s 3-D televisions and movie screens is a bit lacking. Just ask moviegoers whose eyes felt strained as they watched Avatar from behind a pair of goofy glasses. Or move your head side to side while playing Nintendo’s latest portable gaming device, the 3DS: You will see that Mario’s world just doesn’t rotate like the real world would.

But a handful of research teams are hoping to create a 3-D experience that’s glasses-free, comfortable and as in-your-face as watching the Super Bowl from the front row at the stadium. By combining existing techniques with a few new tricks, the researchers are finding better ways to fool the brain into thinking the action is right there in the room.

A screen currently under development reveals an object’s sides when you peek around it. And an in-the-works teleconferencing system made of a spinning mirror can conjure up floating faces worthy of the Wizard of Oz. Other approaches bypass the trickery completely and go straight for the tried-and-true 3-D experience of holography: A postcard-sized Princess Leia made her debut earlier this year (SN Online: 1/26/11), and the military recently acquired a prototype table akin to Dr. Morbius’.

“The technology is getting closer to creating something that looks like a sculpture made out of light,” says Gregg Favalora, a veteran 3-D display designer who works for the consulting company Optics for Hire in Arlington, Mass.

Scaling up some of these technologies to make affordable flat screen televisions will take years, if it ever happens. But in the meantime, these new approaches may find their way into niche markets that can benefit from the richer experience 3-D promises.

From both sides now

The human brain has a built-in talent for working out depth from flat images. Even an old-fashioned movie looks somewhat three-dimensional on a normal TV set. Shadows on a bone tossed into the sky in 2001: A Space Odyssey, for example, reveal it to be an honest-to-goodness bone, not a cardboard cutout. And when chariots racing around a hippodrome in Ben-Hur partially block each other from view, the audience knows who’s in the lead.

Today’s commercial 3-D movie screens and televisions make objects leap out at the audience by displaying two overlapping images. Each image captures a different perspective, offset by the space between the eyes. Special glasses filter the pictures, which often differ slightly in color or in the polarization of their light, so the left eye sees one image and the right sees another. The brain puts these two scenes together to infer depth. It’s an old trick that dates to the first half of the 19th century, when English scientist Sir Charles Wheatstone used mirrors to redirect side-by-side images, one into each pupil.

Portable gaming devices, cell phones and cameras of today can achieve the same effect without glasses. These technologies slice the two images into ribbons that get stitched together like zebra stripes. Each eye sees a different set of stripes thanks to a barrier with vertical slots. Because the trick requires the eyes to be in just the right spot, it works particularly well for small screens held at a fixed distance.
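The zebra-stripe stitching can be sketched in a few lines of Python. This is only an illustration, not any manufacturer's method: it assumes stripes one pixel wide, with even columns taken from the left-eye image and odd columns from the right-eye image. The function name `interleave_columns` and the toy images are made up for the example.

```python
def interleave_columns(left, right):
    """Stitch two same-sized images into one striped frame.

    Assumption for illustration: stripes are one pixel wide, even
    columns come from the left-eye image and odd columns from the
    right-eye image. Images are lists of rows of pixel values.
    """
    same_shape = len(left) == len(right) and all(
        len(l_row) == len(r_row) for l_row, r_row in zip(left, right)
    )
    if not same_shape:
        raise ValueError("left and right images must have the same shape")
    return [
        [l_row[x] if x % 2 == 0 else r_row[x] for x in range(len(l_row))]
        for l_row, r_row in zip(left, right)
    ]


# Toy images: every left-eye pixel is "L", every right-eye pixel is "R".
left_image = [["L"] * 6 for _ in range(2)]
right_image = [["R"] * 6 for _ in range(2)]
frame = interleave_columns(left_image, right_image)
print("".join(frame[0]))  # LRLRLR
```

The slotted barrier in front of the screen then hides the "R" columns from the left eye and the "L" columns from the right, which is why the eyes have to sit in just the right spot.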

But, glasses or not, no consumer technology provides a 3-D view that turns like the real world does when you move your head to the side. Moviegoers all share the same point of view, regardless of where they’re sitting — meaning an important clue normally used to compare object distances is missing.

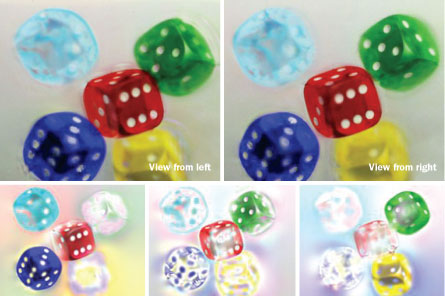

Douglas Lanman of MIT’s Media Lab and his colleagues are attempting to solve the problem with objects that rotate as a person walks by. Sitting in the lab is a glowing white screen that would look at home illuminating an X-ray of a lung in a doctor’s office. The screen’s light shines through a transparency showing a shimmering butterfly, a jade Chinese dragon, a handful of dice in mid-tumble.

Even at first glance, the colorful 3-D images are captivating. But move your head, and they seem to know that you’re there. Each image turns gracefully because it is made from up to five different images printed on different layers stacked on top of each other. These images add up to reveal a slightly different scene when looked at from different angles, thanks to a mathematical method adapted from CT scanners. While the scanners construct 3-D views by adding together flat X-ray images, Lanman’s display works backward, decomposing 3-D views into flat ones.
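A toy model shows why stacked layers can look different from different angles. It is a sketch under stated assumptions, not the MIT team's algorithm: each layer is a one-dimensional strip of transmittance values between 0 and 1, light is dimmed by every layer it crosses, and a tilted line of sight shifts sideways by a whole number of pixels per layer. The function `view_through_layers` and the sample layers are hypothetical.

```python
def view_through_layers(layers, slope):
    """Brightness seen through a stack of semi-transparent strips.

    layers: list of 1-D lists of transmittance values (0.0 to 1.0),
            ordered from the layer nearest the viewer to the farthest.
    slope:  sideways shift in pixels per layer for this viewing angle
            (0 means looking straight on). Assumed to be an integer.
    """
    width = len(layers[0])
    view = []
    for x in range(width):
        brightness = 1.0  # backlight at full strength
        for depth, layer in enumerate(layers):
            px = x + slope * depth
            if 0 <= px < width:
                brightness *= layer[px]  # each layer dims the light
            # Rays that exit the side of the stack skip that layer.
        view.append(brightness)
    return view


layers = [
    [1.0, 0.5, 1.0, 1.0],
    [1.0, 1.0, 0.5, 1.0],
]
print(view_through_layers(layers, 0))  # [1.0, 0.5, 0.5, 1.0]
print(view_through_layers(layers, 1))  # [1.0, 0.25, 1.0, 1.0]
```

This only runs the easy direction, from layers to views. The hard part described in the text is the reverse: choosing layer patterns so that every viewing angle produces the intended picture.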

“With some clever computation and clever optics, we can display objects that you can actually see around,” he says.

Four LCD screens stacked on top of one another show videos from up to seven viewpoints via the same trick. The display will be presented this month in Hong Kong at a meeting of the Association for Computing Machinery’s Special Interest Group on Computer Graphics and Interactive Techniques.

A second approach out of Lanman’s lab mashes together many pairs of perspectives into a single image on an LCD screen. A pattern on a second overlying screen flickers faster than the eye can see, filtering the image for different viewing angles. A prototype display built out of 22-inch computer monitors calculates the pattern needed for every frame of a video of a car, but the computing power required limits screen size.

“We’re trying to find ways to take these ideas and make them more practical,” says Lanman.

Easier on the eyes

But Lanman’s screens face a problem that’s universal among 3-D displays already on the market. Their beauty stirs conflict in the eyes of the beholder.

When you look at something close to you, your eyes naturally swivel inward. At the same time, the thickness of the eyes’ lenses changes to focus light bouncing off the object. These two mechanical tweaks (called vergence and accommodation) are coordinated by the brain to provide a proper sensation of depth.

When you watch a 3-D movie on a flat screen, though, this synchronization goes haywire. The eyes aim where the image appears to be, but the lenses adjust to the distance of the screen. Cross your eyes and you’ll feel the disconcerting effects of this conflict: a blurry image, eyestrain and sometimes headaches.
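The mismatch can be put into rough numbers. The sketch below uses standard geometry: the angle between the two lines of sight for a point straight ahead, and focusing demand measured in diopters (one divided by the distance in meters). The 63-millimeter eye spacing is an assumed typical value, and the function names are invented for this example.

```python
import math

IPD_M = 0.063  # assumed distance between the pupils, in meters


def vergence_angle_deg(distance_m, ipd_m=IPD_M):
    """Angle between the two eyes' lines of sight, in degrees,
    when both aim at a point straight ahead at distance_m."""
    return math.degrees(2 * math.atan(ipd_m / (2 * distance_m)))


def focus_conflict_diopters(screen_m, apparent_m):
    """Gap between where the lenses focus (the screen) and where
    the eyes converge (the apparent object), in diopters."""
    return abs(1 / apparent_m - 1 / screen_m)


# A screen 2 meters away showing an object that seems to float at 1 meter.
print(focus_conflict_diopters(screen_m=2.0, apparent_m=1.0))  # 0.5
print(vergence_angle_deg(1.0) > vergence_angle_deg(2.0))      # True
```

When the object appears at the screen itself, the conflict drops to zero, and it grows as the image pops farther out toward the viewer.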

“No one knows exactly how many people experience this discomfort,” says Martin Banks, a vision scientist at the University of California, Berkeley. “All we can say is that it’s enough that we’re paying attention to it.”

The farther an object appears to pop out in front of or behind the screen, the more likely that the image will stress out the eyes. Every screen has a “zone of comfort” that depends on its size and distance from the audience, Banks’ team reported in January in Burlingame, Calif., at the Stereoscopic Displays and Applications meeting.

One way to ease this stress is to show multiple viewpoints of a scene to each eye simultaneously. Seeing two different views can create the illusion that the light comes from a spot in front of the screen, tricking the lenses into making adjustments that match the swivel of the eyes.

At the University of Southern California in Los Angeles, Paul Debevec has figured out a way to pack hundreds of different viewing angles together to eliminate eyestrain. He’s ditching flat screens in favor of a rapidly flickering projector. It bounces images off a pair of aluminum plates joined together like an A-frame tent, a double-sided mirror of sorts that spins 900 times per minute.

At one instant, the mirror shoots one image at an observer’s right eye. A split second later, the mirror has spun a bit and can target the left eye with a different image, creating the illusion of 3-D. The mirror and projector are synchronized so every viewer sees different pairs of images depending on that person’s point of view.
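Some back-of-the-envelope arithmetic shows what that synchronization demands. The 900 rotations per minute and the two mirror faces come from the description above; the projector frame rate of 4,320 frames per second is a purely hypothetical figure chosen to make the numbers round, and the sketch assumes each face sweeps past a given viewer once per rotation.

```python
def mirror_timing(rpm, faces, projector_fps):
    """Rough timing for a projector bouncing frames off a spinning mirror.

    Assumes each mirror face passes a given viewer once per rotation
    and that projector frames are spread evenly around the circle.
    """
    revs_per_second = rpm / 60
    frames_per_rev = projector_fps / revs_per_second
    return {
        "revs_per_second": revs_per_second,
        "refreshes_per_second": revs_per_second * faces,
        "frames_per_rev": frames_per_rev,
        "degrees_between_frames": 360 / frames_per_rev,
    }


# 900 rpm and 2 faces are from the article; 4320 fps is hypothetical.
timing = mirror_timing(rpm=900, faces=2, projector_fps=4320)
print(timing["revs_per_second"])         # 15.0
print(timing["refreshes_per_second"])    # 30.0
print(timing["frames_per_rev"])          # 288.0
print(timing["degrees_between_frames"])  # 1.25
```

Under these assumptions a viewer gets a fresh image 30 times a second, and hundreds of distinct frames fit into each rotation only if the projector runs far faster than an ordinary video projector.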

During a demo of the device, Debevec films someone and sends the video data to a faraway projector. The person’s face materializes within the whirl of the mirror. The depth of the display, limited to a few inches by the size of the aluminum plate, makes the face look like a mask rather than a full bust.

“It’s not really in full 3-D,” Debevec says. “It’s more like high relief.”

Debevec hopes to develop the device into a teleconferencing system that is comfortable on the eye and accommodates a moving viewer.

Holovision

For full 3-D that really gets inside your head — images that rotate every which way, leap out of the screen as far as you’d like and take it easy on the eyes — there’s nothing that beats the realism that a hologram can provide.

“The holographic display is the closest to how human beings see around themselves,” says Nasser Peyghambarian, a physicist at the University of Arizona in Tucson. “It’s the Holy Grail of all displays.”

From comic books to credit cards, people have been playing around with still holograms for decades. These images are the children of lasers, born when two beams interfere with each other while striking a light-sensitive material. This coupling imprints a fringe pattern that, when illuminated, bends light to create an object in exquisite detail.

Peyghambarian, like many before him, hopes to give life to these still images. He’s creating a new kind of holographic plastic that can be rapidly imprinted, erased and imprinted again.

His first prototype, a transparent plastic screen slightly larger than a playing card, updated only once every two seconds, a far cry from movies’ 24 to 30 frames per second. Since reporting on the device last year in Nature (SN: 12/4/10, p. 8), he has increased the size to a foot on each side, but still hasn’t achieved the 10 frames per second he is shooting for.

At MIT, engineer Michael Bove has used mostly inexpensive, off-the-shelf parts to make small holographic videos that update 15 times per second. He is in talks with the electronics industry about developing a television that might cost no more than a few hundred dollars to build. Currently, though, the display is fuzzy and in just one color.

For any holographic display, the bottleneck is the sheer amount of data going into each image. Increase the size of the image, the number of colors, how often it refreshes or how steep an angle it can be seen from, and the required processing power explodes, quickly reaching unmanageable proportions.
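A crude model makes the explosion concrete. It is an assumption for illustration, not a description of any real holographic system: it treats the data rate as a simple product of image size, color channels, refresh rate and the number of distinct viewing directions. All of the numbers below are hypothetical.

```python
def raw_data_rate(pixels, colors, views, fps, bytes_per_sample=1):
    """Bytes per second under a simple multiplicative model.

    Hypothetical simplification: viewing angle is represented as a
    count of separate views, each needing its own full image.
    """
    return pixels * colors * views * fps * bytes_per_sample


# Hypothetical small, single-color display.
small = raw_data_rate(pixels=640 * 480, colors=1, views=16, fps=15)

# Double every factor: image area, colors, views and refresh rate.
big = raw_data_rate(pixels=2 * 640 * 480, colors=2, views=32, fps=30)

print(small)        # 73728000
print(big / small)  # 16.0
```

Because the factors multiply, doubling all four raises the load sixteenfold in this model, which is the kind of runaway growth the designers are up against.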

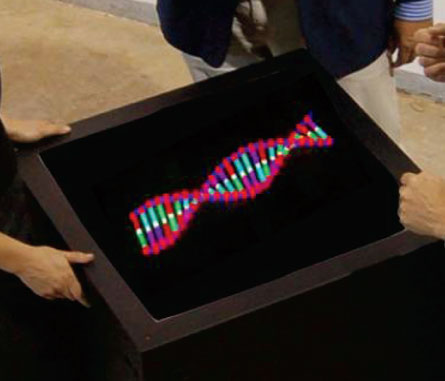

The largest holographic video display to date, measuring 6 feet diagonally, belongs to the military. For five years, the Defense Advanced Research Projects Agency, or DARPA, funded the development of a tabletop display that projects videos up to a foot high, visible from angles greater than 45 degrees above the table.

“People looking at these images do what we call the ‘holodance,’ ” says Mark Lucente, a consultant based in Austin, Texas, and former researcher at Zebra Imaging, the company that built the device. “They start to move their heads to the side, up and down, the same as if someone’s showing you a sculpture.”

Achieving the effect requires the equivalent of 27 high-end computer workstations crunching 10 gigabytes of holographic data per second. Instead of lasers writing on plastic, the hologram pattern is generated by modulators that turn the data into light and an array of tiny devices that shape the direction and intensity of the light as it emerges from the table.

In August, the first prototype was installed at the Air Force Research Laboratory at Wright-Patterson Air Force Base in Ohio. Darrel Hopper, a researcher at the lab, will test whether the display helps people make sense of complicated scenes — from skies filled with planes to aerial views of tanks on the ground.

“The volume of inherently 3-D data sets we use has grown exponentially and will continue to do so,” Hopper says. “We need people to be able to interact with this data as if it were a true 3-D object.”

Seeing clearly

Whether any of these new 3-D displays will make the transition into the home remains to be seen. Other technologies developed over the years have come and gone, such as a colorful device developed by the now-defunct Actuality Systems that looked like a glowing crystal ball.

“The market for these technologies wasn’t big enough,” says Nick Holliman, who studies 3-D displays at Durham University in England.

He thinks that new glasses-free approaches will be adopted first by specialized groups. Beyond the military, car designers and oil companies are interested in 3-D displays. Hospitals may also be a natural fit.

Several electronics companies are backing the development of glasses-free television sets. Philips has created a display that exploits lenses to scatter different views of a scene around a room. And Toshiba has unveiled a prototype of a 55-inch device that will cost $10,000 or more. But the screens, marketed to businesses and advertisers, offer viewers only nine different perspectives.

Ultimately, the introduction of new 3-D technologies into the home may be stymied by a problem that even the cleverest engineer can’t solve: “There hasn’t been a huge wave of 3-D content yet into the marketplace,” says Stephen Baker, vice president of industry analysis for NPD Group, a market research company headquartered in Port Washington, N.Y.

Realistic 3-D television displays won’t be worth much if there’s nothing to watch. In the end, moviemakers must choose to film in three dimensions and network sports producers need to decide that basketball games really do look better with a little depth.