Body In Mind

Long thought the province of the abstract, cognition may actually evolve as physical experiences and actions ignite mental life

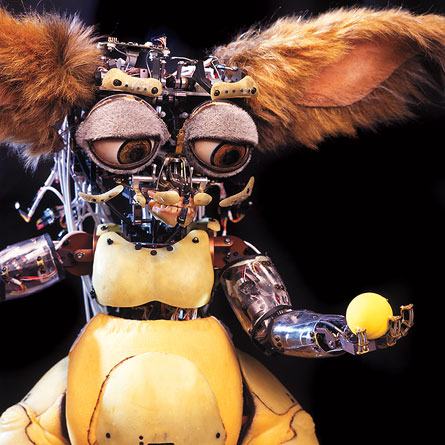

With gargantuan ears, gleaming brown eyes, a fuzzy white muzzle and a squat, furry body, Leonardo looks like a magical creature from a Harry Potter book. He’s actually a robot powered by an innovative set of silicon innards.

Like a typical 6-year-old child, but unlike standard robots that come preprogrammed with inflexible rules for thinking, Leonardo adopts the perspectives of people he meets and then acts on that knowledge. Leonardo’s creators, scientists at the Massachusetts Institute of Technology’s Personal Robots Group and special effects aces at the Stan Winston Studio in Van Nuys, Calif., watch their inquisitive invention make social strides with a kind of parental pride.

Consider this humanlike attainment. Leo, as he’s called for short, uses sensors to watch MIT researcher Matt Berlin stash cookies in one of two boxes with hinged, open covers. After Berlin leaves the room, another experimenter enters and creeps over to the boxes, a hood obscuring his face. The mysterious intruder moves the cookies from one box to the other and closes both containers before skulking out. Only Leo can unlock the boxes, by pressing buttons on a panel placed in front of him.

Berlin soon returns and vainly tries to open the original cookie box. He asks Leo to unlock it for him. The robot shifts his gaze from one box to the other, his mental wheels seemingly turning. Then Leo unlocks the second box. The robot has correctly predicted that Berlin wants the cookies that were put in the first box, and that Berlin doesn’t realize that someone moved those cookies to the other box.

Leo sits on the cusp of a new scientific approach to untangling the nature of biological intelligence and cognitive feats such as memory and language.

For the past 30 years, standard theories of cognition have assumed that the brain creates abstract representations of knowledge, such as a word that represents a category of objects. This abstract knowledge gets filed in separate neural circuits, one devoted to understanding and using speech, for example, and another involved in discerning others’ thoughts and feelings. If that’s so, then cognition operates on a higher level apart from more mundane brain systems for perception, action and emotion. Mental life must occur in three discrete steps: Sense, think and then act.

The new approach, often called embodied or grounded cognition, turns standard thinking on its head. It argues that cognition is grounded in interactions among basic brain systems, including those for perception, action, memory, emotion, reward and goal management.

These systems increasingly coordinate their activity as an individual gains experience performing tasks jointly with other people. Complex thinking capacities—in particular, a feel for anticipating what’s about to happen in a situation—form out of these myriad interactions within and between individuals, somewhat like the novel products of chemical reactions.

In short, people often act in order to think and learn, using immediate feedback to adjust their behavior from one moment to the next.

According to this view, bodily states—say, smiling—stimulate related forms of cognition, such as feeling good or remembering a pleasant experience. Researchers emphasize that the ability to think about an observed action or event, such as a friend biting into a peach, stems from neural reenactments of one’s perceptual, motor and emotional states—biting into your own peach.

“It’s really through the body, and the dynamic coupling of neural systems for perception, action and introspection, that cognition emerges,” says developmental psychologist Linda Smith of Indiana University in Bloomington.

Leo has been created with the new approach in mind. He represents a new wave of artificial intelligence designed to learn rather than follow rules.

Although grounded cognition lacks an overarching theory to guide research, supportive findings are rapidly accumulating. Speakers at the annual meeting of the Cognitive Science Society, held in Washington, D.C., in July, described several strains of this work.

Studies suggest that toddlers rapidly learn words by coordinating their activity and attention with what their parents do. Other work indicates that bodily experiences orchestrate the widespread, but apparently not universal, belief that right-handedness and the right side of space are good, while left-handedness and the left side of space are bad.

Then there’s the budding field of social robotics, in which machines such as Leonardo manage to interact with and learn from people. This new generation of robots may eventually provide key insights into the way human minds develop, says psychologist Lawrence Barsalou of Emory University in Atlanta.

“I predict that in the next 30 years grounded processes will be shown to play a causal role in cognition,” Barsalou says.

Nearly all prescientific views of the mind, going back to ancient Greek philosophers, assumed that knowledge resides in mental images that are based on what we perceive, he adds. That idea has found new life in embodied cognition.

Grow-bot

Ancient philosophy is all Greek to Leo. That’s because he’s a social robot, not an academic type. Leo contains a built-in emotional empathy system that enables him to figure out the goals and intentions of people he meets.

Berlin and his MIT colleagues, led by computer scientist Cynthia Breazeal, were inspired by a notion—imported from embodied cognition—that imitation is the sincerest form of empathy. People understand those they interact with by imitating their behavior, either overtly or via imagination, in order to generate personal feelings and memories that inform empathic judgments.

Leo’s architecture reflects that idea. The robot contains a mechanism that orchestrates the appraisal and imitation of observed facial expressions. In laboratory interactions with people, Leo learns to associate particular facial expressions with his corresponding reactions. Leo’s reactions are guided by sensors that tag incoming information as positive or negative, strongly or weakly arousing, and new or familiar.

The robot also contains hardwired sensors that similarly appraise acoustic features of human speech, such as pitch. This vocal feedback reinforces links that Leo makes between others’ facial expressions and his own feelings.

Another built-in system directs Leo’s attention to nearby objects and to signs of movement, as well as to a person’s gaze and other body language. The same system allows Leo to review his own recent actions and reactions, and even what his goals were when he performed those actions.

Interplay among Leo’s sensory, motor and attention systems during social interactions eventually yields new thinking skills, Berlin says. Think of this process as cyber-cognitive growth. Leo’s new achievements include discerning a partner’s emotional reaction to a never-before-seen object in order to guide his approach to that object. He has also succeeded in coordinating the direction of his gaze with that of a partner while working on a joint task.

Such feats allow Leo to learn from human tutors in relatively subtle ways. In one task, Leo sits in front of a touch-sensitive computer screen that allows him to manipulate a variety of blue, red, green and yellow block shapes. A human volunteer sits across from Leo after getting instructions from an experimenter to work silently with the robot and generate a specific block figure, such as a blue and red sailboat.

People put in Leo’s position use a tutor’s nonverbal cues to assemble correct block figures about 90 percent of the time. In a series of trials with 18 tutors that he’d never met, Leo assembled three-quarters of the predesignated shapes.

“Leo makes mistakes at times, but he’s able to use an internal architecture organized around understanding his environment from another’s perspective to learn from social interactions,” Berlin says.

Traditional artificial intelligence has largely focused on programming disembodied expert systems to carry out mental operations using specific sets of built-in rules. At the Cognitive Science Society meeting, psychologist John Anderson of Carnegie Mellon University in Pittsburgh expressed optimism that integrating basic insights from such systems (including his own, known as ACT-R) will illuminate how the brain creates the mind.

Berlin and his colleagues disagree because they see the mind as a product of interactions among basic systems, not preset rules. The MIT group wants to focus on how Leo’s cognition develops over time. A new set of social robots designed by the team may offer further insights into the bodily origins of social thought. These machines move about on wheels and feature humanlike heads and torsos, relatively nimble arms and hands, and an internal architecture like Leo’s.

Name game

For Leo and his cybernetic relatives to flourish, scientists need to flesh out developmental principles in the ultimate social learners—infants and young children. New studies directed by Indiana’s Smith, cognitive scientist Chen Yu and their colleagues suggest that cognitive development rises, like steam from a boiling pot, out of daily collaborations between children and their caretakers. Such experiences prod children to notice things that go together, such as realizing that the same sounds come out of mom’s mouth whenever she holds up a particular toy. Neural systems for perception, action and other noncognitive functions become increasingly intertwined, prompting learning, similar to the learning that researchers have observed with Leo.

“That’s all there is to cognition,” Smith somewhat defiantly told an audience at the cognitive science meeting. Symbolic representations of knowledge in the brain, cherished by many cognitive scientists, simply don’t exist, in her view.

Smith explores how toddlers learn words by looking at the world from their pint-sized perch. Children sit across from their mothers at small tables and play with toys. Youngsters and adults wear headbands equipped with tiny cameras that show each person’s shifting visual perspective. A high-resolution camera mounted above the table provides a bird’s-eye view of the action. Mothers also wear headsets that record what they say.

Over five to 10 minutes of continuous play, parents try to engage their children and teach them the name of each toy in whatever way the parents deem appropriate.

In a recent study, five parents played with their 17- to 20-month-old children while trying to teach them made-up names, provided by the researchers, for nine plastic, simply shaped objects.

After the play period, an experimenter placed groups of three toys in front of each child, looked directly at the youngster and asked for one object by name. The experimenter would say, “I want the dax! Get me the dax!”

The researchers attributed word understanding to children who looked at the correct object when it was named.

Children’s visual take on the exercise differed considerably from that of adults. Kids rotate their heads to shift visual attention, yielding a bouncy, unstable perspective on toys that are typically held close to the face as the parent’s body looms above. Adults primarily shift their gaze while holding the head still, giving them a stable platform from which to look down on their children.

Word learning in Smith’s study depended far more on when mothers named toys than on how many times they uttered a toy’s name. Toddlers recognized some object names mentioned only once or twice by their mothers. Other names uttered five or six times elicited no reactions from children later on.

But if a child and mother simultaneously looked at a toy as it was named, even if only once, the youngster was especially likely to recognize the word for that toy at testing.

Word learning also hinged on parents speaking a toy’s name as children held that toy in their hands. A third learning aid consisted of mothers naming toys while children held their heads relatively still, a sign of sustained attention.

Another head-camera study from Smith’s team, published in the June–September Connection Science, finds that toddlers learn new words particularly quickly if they and their mothers take turns during playtimes. Turn-taking refers to mutually coordinated activity, such as a mother keeping her head still while a child’s hands move or a child stopping activity while a mother holds up a toy.

Parents take the lead in promoting either turn-taking or disjointed activity during play, the researchers say.

These findings challenge an influential hypothesis that toddlers infer that an adult who utters a word must be thinking about and referring to a specific object. Instead, 1- to 2-year-olds notice how certain words get spoken by adults when specific objects get picked up and manipulated, Smith contends.

Taking sides

Adults weave far more complex forms of thought out of physical experience than children do, says Daniel Casasanto of the Max Planck Institute for Psycholinguistics in Nijmegen, the Netherlands. His latest research suggests that people with different kinds of bodies think differently about abstract concepts such as goodness and badness.

Right- and left-handers intuitively associate positive concepts with the side of space on which they act most dexterously, and negative concepts with the side of space where they have difficulty, Casasanto reported at the cognitive science meeting.

Cultures everywhere celebrate right-sidedness and denigrate the left side. Consider the English phrases “the right answer,” “my right-hand man,” “out in left field” and “two left feet.” Linguists who support embodied cognition have argued for more than 20 years that verbal metaphors—say, being “high on life” or feeling “down in the dumps”—reflect universal bodily experiences, such as standing tall when proud versus slouching when dejected.

Yet left-handers’ physical experiences yield a “left is best” perspective that clashes with cultural beliefs and common metaphors shaped by a right-handed majority, Casasanto hypothesizes.

He conducted experiments with 886 college students. About 11 percent reported being left-handed. In one task, participants were told to draw a “good” animal in one box and a “bad” animal in another box. The two boxes appeared either side by side, on the left and right of a page, or one above the other. Righties routinely put good animals on the right and bad ones on the left; lefties did the opposite. Everyone, regardless of handedness, put good animals above bad animals.

Concepts of up and down are universally associated with positive and negative bodily states, respectively, whereas ideas about the merits of right and left are shaped by the different physical experiences of right- and left-handers, Casasanto proposes.

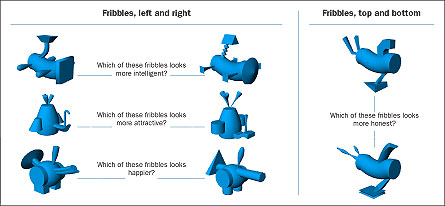

In a second task, right- and left-handers viewed pairs of similar-looking, computer-generated alien creatures and chose one as representative of certain characteristics, such as being “more intelligent” or “less honest.” Righties generally favored creatures displayed to the right and disliked creatures on the left; again, lefties took the opposite approach.

The same split between right- and left-handers characterized preferences for similar-sounding shopping choices or job applicants described in boxes on the right and left sides of a computer screen.

Conceptions of time are rooted in physical experience as well, according to Casasanto and Lera Boroditsky of Stanford University. People often talk about time using spatial language, as in the phrases “taking a long vacation” and “moving the meeting forward two hours.” The metaphorical link between space and time grows out of a deeper, nonverbal tendency for people to incorporate spatial information into time estimates, the researchers contend in the February Cognition.

In one experiment, participants watched a series of lines on a computer screen expand to various lengths over durations that ranged from one to five seconds. Some lines grew to different lengths over the same amount of time. Volunteers judged lines that traveled a relatively short distance to have taken less time than they actually did. Lines that covered long distances were judged to have taken more time than they actually did.

In contrast, participants’ estimates of line lengths were not altered by differences in the amount of time lines took to grow.

Such findings fit with the view that fundamental abstract concepts, such as how time works, stem from perception and behavior, not cultural dictums or vivid metaphors, remarks computer scientist Jerome Feldman of the University of California, Berkeley.

“Cognition is being reunited with perception, action and language, but nobody understands how all of the pieces fit together,” Casasanto says.

Perhaps some day in the not-too-distant future, a group of social robots will tire of watching computer scientists hide food from each other and start arguing among themselves about the nature of cognition. Just imagine the experiments that they’d dream up.