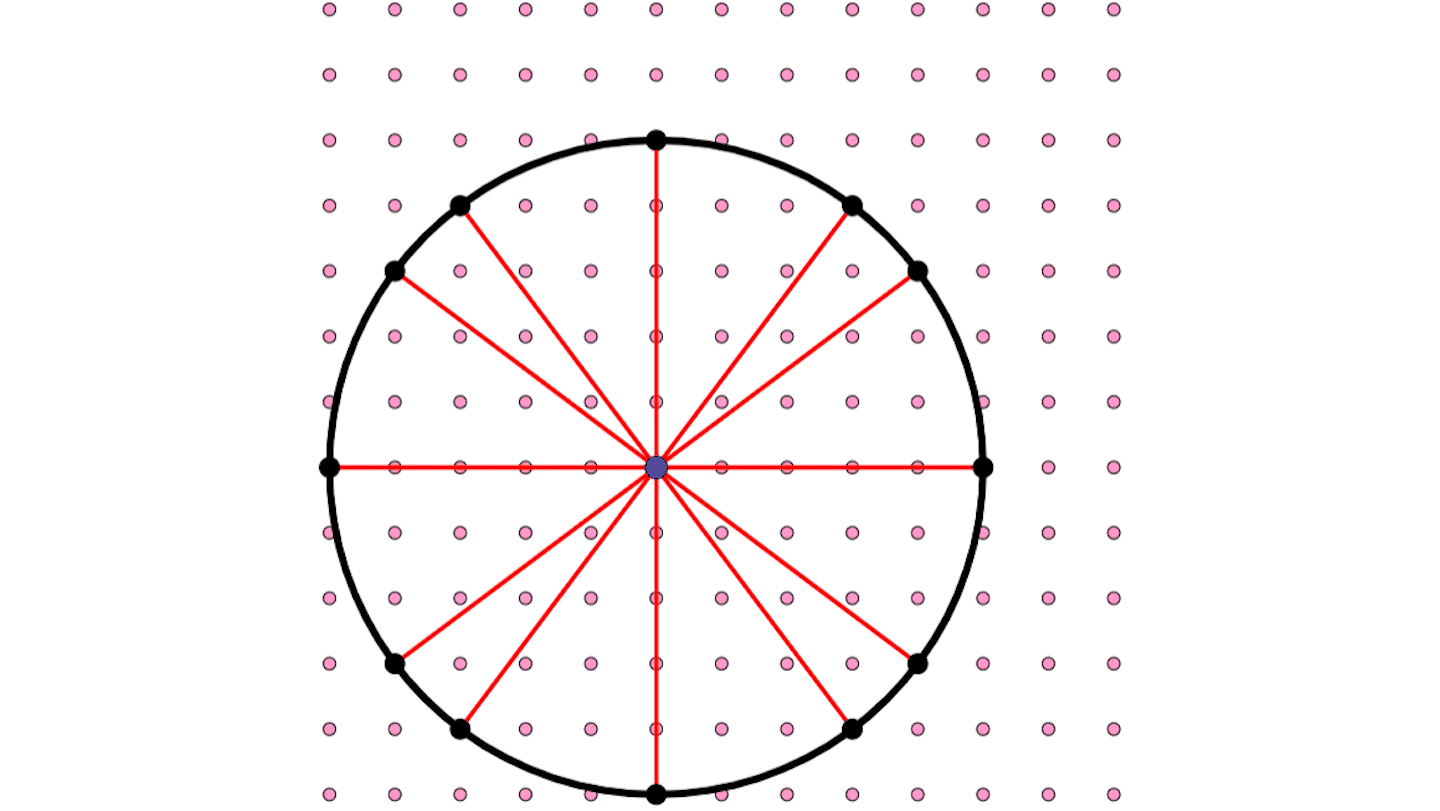

Using only the numbers 1, 2, 3, and 4, arrange four sets of these numbers into a four-by-four array so that no column or row contains the same two numbers. The result is known as a Latin square.

Here are two examples of Latin squares of order 4:

| 1 | 2 | 3 | 4 |

| 2 | 1 | 4 | 3 |

| 3 | 4 | 1 | 2 |

| 4 | 3 | 2 | 1 |

| 1 | 2 | 3 | 4 |

| 3 | 4 | 1 | 2 |

| 4 | 3 | 2 | 1 |

| 2 | 1 | 4 | 3 |

In effect, each row (and each column) is a permutation of four distinct numbers (or symbols).

Such arrays have proved useful for a variety of purposes. Suppose, for example, you wanted to check the resistance to wear of four brands of automobile tires. Putting one tire of each brand on the four wheels of one car isn’t good enough because the amount of wear may differ in those four positions and vary from week to week owing to different weather conditions. A better experiment would be to use four tires for four weeks and to interchange the four positions from week to week according to a four-by-four Latin square.

Latin squares are also linked to algebraic objects known as quasigroups. A quasigroup is defined in terms of a set, Q, of distinct symbols and a binary operation (called multiplication) involving only the elements of Q. A quasigroup’s multiplication table turns out to be a Latin square. Each element of the set Q occurs exactly once in each row and each column of the table.

The so-called quasigroup completion problem concerns a table that is correctly but only partially filled in. The question is whether the remaining blanks in the table can be filled in to obtain a complete Latin square (or a proper quasigroup multiplication table).

Computer scientists have proved that the quasigroup completion problem belongs to a category of difficult computational problems described as NP-complete. As the number of elements, n, increases, a computer’s solution time grows exponentially in the worst case. In effect, systematically solving such a worst-case problem involving many elements can take an enormous amount of time, even on the fastest available computers.

In typical cases, however, solution times can vary tremendously and depend on the specific search algorithm used to find an answer. Many search problems take much less time to solve than worst-case scenarios would suggest.

Moreover, researchers have discovered that, just as water abruptly freezes when the temperature dips below the freezing point, the quasigroup completion problem exhibits a computational phase transition–a shift from cases for which a Latin square can be found to those for which no solution is possible when the fraction of initially filled-in squares in the table has a certain threshold value.

“At the phase transition, problem instances switch from being almost all solvable to being almost all unsolvable,” Carla P. Gomes of Cornell University and her coworkers report in a recent issue of the Journal of Automated Reasoning. That transition occurs when about 42 percent of the blanks in the table are initially filled in.

Interestingly, the problems that take longest to resolve one way or the other (soluble versus insoluble) lie at the phase transition, where there are enough constraints (filled-in squares) to limit the number of good choices but not enough to show immediately that the case is hopeless.

Gomes has set up a Web page at http://www.cs.cornell.edu/Info/People/gomes/QUASIdemo.html where you can try out various instances of the quasigroup completion problem. In this demonstration, Gomes uses a 10-by-10 grid and 10 different colors.

You can select a sample pattern of colored-in squares as your starting point, generate a random, colored array filling a specified fraction of the grid, or manually create your own partial Latin square. Clicking on the button labeled “RUN Stochastic” instructs the computer to solve the problem following a built-in algorithm (a randomized backtrack search procedure, in this case). Clicking again presents a different solution.

On certain runs, the computer solves the problem easily, Selman observes. On other runs, it may fail to complete the pattern within the allotted time. Varying the fraction of filled-in squares also alters computation times. When the fraction is close to the critical value, computation times become very large.

Such experiments provide insights into the factors that make certain computational problems particularly difficult and may suggest improved search strategies for solving a variety of problems.