When Kenneth M. Ford considers the future of artificial intelligence, he doesn’t envision legions of cunning robots running the world. Nor does he have high hopes for other much-touted AI prospects–among them, machines with the mental moxie to ponder their own existence and tiny computer-linked devices implanted in people’s bodies. When Ford thinks of the future of artificial intelligence, two words come to his mind: cognitive prostheses.

It’s not a term that trips off the tongue. However, the concept behind the words inspires the work of the more than 50 scientists affiliated with the Institute for Human and Machine Cognition (IHMC) that Ford directs at the University of West Florida in Pensacola. In short, a cognitive prosthesis is a computational tool that amplifies or extends a person’s thought and perception, much as eyeglasses are prostheses that improve vision. The difference, says Ford, is that a cognitive prosthesis magnifies strengths in human intellect rather than corrects presumed deficiencies in it. Cognitive prostheses, therefore, are more like binoculars than eyeglasses.

Current IHMC projects include an airplane-cockpit display that shows critical information in a visually intuitive format rather than on standard gauges; software that enables people to construct maps of what’s known about various topics, for use in teaching, business, and Web site design; and a computer system that identifies people’s daily behavior patterns as they go about their jobs and simulates ways to organize those practices more effectively.

Such efforts, part of a wider discipline called human-centered computing, attempt to mold computer systems to accommodate how humans behave rather than build computers to which people have to adapt. Human-centered projects bear little relationship to the traditional goal of artificial intelligence–to create machines that think as people do.

As a nontraditional AI scientist, Ford dismisses the influential Turing Test as a guiding principle for AI research. Named for mathematician Alan M. Turing, the 53-year-old test declares that machine intelligence will be achieved only when a computer behaves or interacts so much like a person that it’s impossible to tell the difference.

Not only does this test rely on a judge’s subjective impressions of what it means to be intelligent, but it fails to account for weaker, different, or even stronger forms of intelligence than those deemed human, Ford asserts.

Just as it proved too difficult for early flight enthusiasts to discover the principles of aerodynamics by trying to build aircraft modeled on bird wings, Ford argues, it may be too hard to unravel the computational principles of intelligence by trying to build computers modeled on the processes of human thought.

That’s a controversial stand in the artificial intelligence community. Although stung by criticism of their failure to create the insightful computers envisioned by the field’s founders nearly 50 years ago, investigators have seen their computational advances adapted to a variety of uses. These range from Internet search engines and video games to cinematic special effects and decision-making systems in medicine and the military. And regardless of skeptics, such as Ford, many researchers now have their sights set on building robots that pass the Turing Test with flying colors.

“I’m skeptical of people who are skeptical” of AI research, says Rodney Brooks, who directs the Massachusetts Institute of Technology’s artificial intelligence laboratory. He heads a “hard-core AI” venture aimed at creating intelligent, socially adept robots with emotionally expressive faces. Brooks also participates in a human-centered project focused on building voice-controlled, handheld computers connected to larger systems. The goal is for people to effectively tell the portable devices to retrieve information, set up business meetings, and conduct myriad other activities (SN: 5/3/03, p. 279: Minding Your Business).

Cognitive prostheses represent a more active, mind-expanding approach to human-centered computing than Brooks’ project does, Ford argues. “This line of work will help us formulate what we really want from computers and what roles we wish to retain for ourselves,” he says.

Flight vision

In the land of OZ, which lies entirely within a cockpit mock-up at IHMC, aircraft pilots simulate flight with unaccustomed ease because they see their surroundings in a new light.

IN PLANE VIEW. A typical cockpit setup, left, contrasts with that of the OZ system, right. OZ combines data from dials and gauges into a visual depiction of the aircraft and external conditions. Here, the aircraft approaches a runway, represented by three green dots.

IHMC’s David L. Still has directed work on the OZ cockpit-display system over the past decade. The movie-inspired name comes from early tests in which researchers stood behind a large screen to run demonstrations for visitors, much as the cinematic Wizard of Oz controlled a fearsome display from behind a curtain.

For its part, Still’s creation taps into the wizardry of the human visual system. In a single image spread across a standard computer screen, OZ shows all the information needed to control an aircraft. An OZ display engages both a person’s central and peripheral vision, so the pilot’s eyes need not move from one gauge to another, says Still.

A former U.S. Navy optometrist who flies private planes, Still participated in research a decade ago that demonstrated people’s capacity to detect far more detail in peripheral vision than had been assumed.

“OZ decreases the time it takes for a pilot to understand what the aircraft is doing from several seconds to a fraction of a second,” Still says. That’s a world of difference to pilots of combat aircraft and to any pilot dealing with a complex or emergency situation.

The system computes key information about the state of the aircraft for immediate visual inspection. The data on the six or more gauges in traditional cockpits are translated by OZ software into a single image with two main elements. On a dark background, a pilot sees a “star field,” lines of bright dots that by their spacing provide pitch, roll, altitude, and drift information. A schematic diagram of an airplane’s wings and nose appears within the star field and conveys updates on how to handle the craft, such as providing flight path options and specifying the amount of engine power needed for each option. Other colored dots and lines deliver additional data used in controlling the aircraft.

In standard training, pilots learn less-intuitive rules of thumb for estimating the proper relationship of airspeed, lift, drag, and attitude from separate gauges and dials. With the OZ system, a pilot need only keep certain lines aligned on the display to maintain the correct relationship.

Because OZ spreads simple lines and shapes across the visual field, pilots could still read the display even if their vision suddenly blurred or if bright lights from, say, exploding antiaircraft flak, temporarily dulled their central vision or left blind spots.

Experienced pilots quickly take a shine to OZ, Still says. In his most recent study, 27 military flight instructors who received several hours of training on OZ reported that they liked the system better than standard cockpit displays and found it easier to use. In desktop flight simulations, the pilots maintained superior control over altitude, heading, and airspeed when using OZ than when using traditional gauges.

OZ provides “a great example” of a human-centered display organized around what the user needs to know, remarks Mica Endsley, an industrial engineer and president of SA Technologies in Marietta, Ga. The company’s primary service is to help clients in aviation and other industries improve how they use computer systems.

If all goes well, OZ will undergo further testing with veteran pilots as well as with individuals receiving their initial flight training. The system will then be installed in an aircraft for test flights.

What a concept

In an age of information overload, it would be nice for people to have a simple, concise way to tease out the information they really want from, say, the World Wide Web. Today’s search engines usually drag along a vast amount of irrelevant data, says Alberto Canas, IHMC’s associate director.

Enter concept maps.

Concept-mapping software developed by Canas and his colleagues provides a way for people to portray, share, and elaborate on what they know about a particular subject. Concept maps consist of nodes–boxes or circles with verbal labels–connected by lines with brief descriptions of relationships between pairs of nodes. Clicking on icons that appear below the nodes opens related concept maps or a link to a relevant Web site.

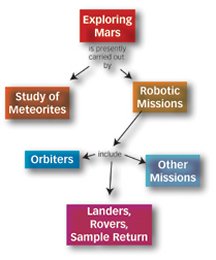

For instance, scientists at NASA’s Center for Mars Exploration created a Mars concept map. A red box at the top contains the words “Exploring Mars” and connects to boxes arrayed below it, with labels such as “Search for Evidence of Life” and “Human Missions.” Icons positioned below the boxes link to a variety of Mars-related Web sites.

A concept-map maker needs to possess a thorough grasp of his or her subject, says Canas. Such a map draws connections between essential principles in a particular realm of knowledge. IHMC researcher Joseph Novak invented a paper-and-pencil version of concept maps nearly 30 years ago.

These tools aren’t just jazzed-up outlines of topics within a story. In a concept map, nodes contain nouns and the connecting lines are associated with verbs, thus forming a web of propositions related to a central idea. In the Mars concept map, for example, the line between “Exploring Mars” and “Study of Meteorites” brackets the phrase “is presently carried out by.”
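One way to picture that structure is as a list of noun-verb-noun triples. The short Python sketch below is only an illustration under that assumption, not a description of IHMC's software; the class names `Proposition` and `ConceptMap` are invented here, and the second link phrase ("includes") is a made-up placeholder, since the article quotes only the "is presently carried out by" link.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass(frozen=True)
class Proposition:
    """One labeled line in a concept map: node, linking phrase, node."""
    source: str  # noun phrase in the starting node
    link: str    # verb phrase written on the connecting line
    target: str  # noun phrase in the ending node


@dataclass
class ConceptMap:
    """Hypothetical container: a central idea plus its propositions."""
    root: str
    propositions: List[Proposition] = field(default_factory=list)

    def add(self, source: str, link: str, target: str) -> None:
        self.propositions.append(Proposition(source, link, target))

    def nodes(self) -> Set[str]:
        # Every node is either the root or an endpoint of some line.
        found = {self.root}
        for p in self.propositions:
            found.update((p.source, p.target))
        return found

    def sentences(self) -> List[str]:
        # Reading node + link + node aloud yields one proposition each.
        return [f"{p.source} {p.link} {p.target}" for p in self.propositions]


mars = ConceptMap(root="Exploring Mars")
# Link phrase quoted in the article:
mars.add("Exploring Mars", "is presently carried out by", "Study of Meteorites")
# Link phrase assumed for illustration only (not given in the article):
mars.add("Exploring Mars", "includes", "Human Missions")

for sentence in mars.sentences():
    print(sentence)
```

Storing each line as a complete proposition, rather than as a bare parent-child link, is what separates this from an outline: every connection can be read as a sentence and checked on its own.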

In one of their incarnations, concept maps provide an alternative way to organize information on Web sites so that topics can be explored quickly and efficiently rather than through haphazard hunts on search engines, Canas notes.

With this technology, people can convey their expert knowledge and reasoning strategies to others. In one case, concept maps developed by IHMC scientists working with Navy meteorologists plumb the knowledge of experienced Gulf Coast weather forecasters and are now used to train new Navy forecasters.

Perhaps the greatest potential for concept maps lies in education. Elementary and high school students in a handful of countries now use a software system developed at IHMC to build their own concept maps about academic topics and then share them over the Internet with distant classes. The computer system ferrets out and displays related claims from different maps. It also poses questions to provoke further thought about the topic. Students then critique each other’s maps and, if necessary, revise them.

“It’s hard work to learn enough to build a good concept map,” Canas says, “but it leads to far more understanding about a topic than simply memorizing information, as so often happens.”

Brahms at work

In the workplace, computers are virtuosos of information storage. However, a computer system known as Brahms sings a different tune. It discerns revealing patterns in people’s work behaviors and simulates ways to get their jobs done more effectively.

The Brahms system illuminates the informal practices and collaborations that occur during the workday, according to IHMC computer scientist William J. Clancey. “A Brahms model is a kind of theatrical play intended to provoke conversation and stimulate insights in groups seeking to analyze or redesign their work,” he says.

Clancey and other scientists began working on Brahms in 1993. The system builds on social science theories that regard each person’s behaviors as being structured by broad pursuits, which researchers often call activities. In an office, common activities include coffee meetings with a supervisor, reading mail, taking a break, and answering phone messages. Activities provide a forum for addressing specific job tasks. A morning coffee meeting, for example, may determine who will make an important sales call later in the day.

Brahms creates a computerized cartoon of how a work group’s members perform their activities and tasks. The system’s software is based on simulations of interactions among virtual individuals.

Brahms got its first tryout with workers at a New York telecommunications firm in 1995. Since then, Clancey has imported the system to NASA, where he helps six-person crews practice for future Mars-exploration missions. Brahms portrays how a crew’s members go about their daily business so that mission-support planners can evaluate the procedures for critical activities, such as maintaining automated life-support systems and conducting scientific experiments.

After a crew in training spent 12 days in 2002 working out of a research station in the Utah desert, Brahms translated extensive data on crew behavior into a portrayal of how each person divvied up his or her time and moved from one place to another during each day. Ensuing simulations indicated that simple scheduling changes–eating lunch and dinner at slightly earlier times and eliminating afternoon crew meetings–would markedly boost the time available for scientific work and other critical duties.

Brahms remains a work in progress, Clancey notes. He wants to use the system to study how pairs of scientists on the Mars crews collaboratively adjust their plans in the field as they make unexpected discoveries.

David Woods of Ohio State University in Columbus welcomes that prospect. Traditional artificial intelligence programs treat plans as rigid specifications for a series of actions, says Woods, who studies how people use computers in air-traffic control and other complex jobs. In such difficult endeavors, however, plans often get modified as circumstances change and surprises crop up.

“Researchers are just beginning to look at plans as ways in which people prepare themselves to be surprised,” Woods says. In other words, the best-laid plans are those that are loose enough to allow for innovation.

Big systems

The sprawling IHMC facility in downtown Pensacola has witnessed its own surprising transformation. Before Ford and his computer-savvy colleagues arrived in 1990, the building housed the local sheriff’s department and a jail.

IHMC now represents freedom, at least of the academic sort. People trained in computer science, the social sciences, philosophy, engineering, and medicine mingle and collaborate. The only requirement: Treat computers not as isolated systems of chips and codes, but as parts of larger systems also characterized by how individuals think, the type of organizations in which they work, and the settings in which labor occurs.

It’s this big-system perspective that informs OZ, concept maps, and Brahms. “Building cognitive prostheses keeps human thought at the center of our science,” Ford says.
