COUNTING FISH: A management approach based on chaos theory could help prevent collapses of sardines (shown) and other valuable fishes.

© Ralph A. Clevenger/CORBIS

There’s something fishy going on with Pacific sardines. The pint-size swimmers, whose abundance sustained California’s famed Cannery Row for decades, all but disappeared from coastal waters in the 1950s. Numbers remained low until the late 1980s, when enough fish finally reappeared to make commercial harvesting worthwhile again. By then, sardines in the highly productive California Current were carefully managed: Nobody wanted another crash.

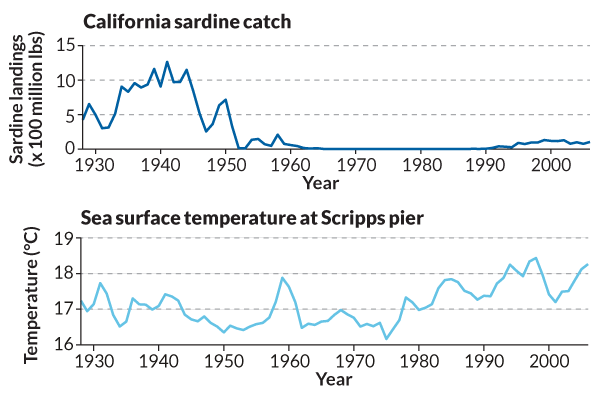

Scientists still debate what causes sardine numbers to rise and fall. Overfishing certainly played a part in the collapse; the first catch limits weren’t set until the 1960s, after the population had already declined steeply. Research suggests that a cooling of the eastern Pacific Ocean also played a key role in the 1950s crash. Sardines like warm water, and the eastern Pacific flips between cooler and warmer conditions every few decades. The thinking goes that a cool period starting in the mid-1940s, combined with decades of overfishing, sank the sardine.

Based on this understanding, the Pacific Fishery Management Council developed a temperature-dependent method to predict population changes and set harvest limits for sardines in the California Current. In 2010, however, scientists analyzed data from the previous two decades and published a study questioning the correlation between sardine population growth and sea surface temperature. As a result, the council removed ocean temperature from the mathematical models it uses to forecast sardine population growth.

The council’s decision frustrates George Sugihara, a theoretical biologist at the Scripps Institution of Oceanography in La Jolla, Calif. In his view, the simulations that fishery scientists use to predict population changes and set quotas are fundamentally flawed. When constructing these models, scientists typically assume that a given population of fish will grow, reproduce and die at known rates for the rest of time. The simulations can include environmental variables like sea surface temperature, but mostly rely on what scientists know about the biology of individual fish species.

As a result, the predictions these models make don’t reflect the dynamic complexity of the environment in which fish actually live, Sugihara says. The simulations can’t capture how a population’s growth rate might change in response to the other fish species living in the ocean, for example, or to the amount of zooplankton, or to wind speeds or, for that matter, to fishing itself. He says “it’s like trying to understand reality by just looking at one page” of a book.

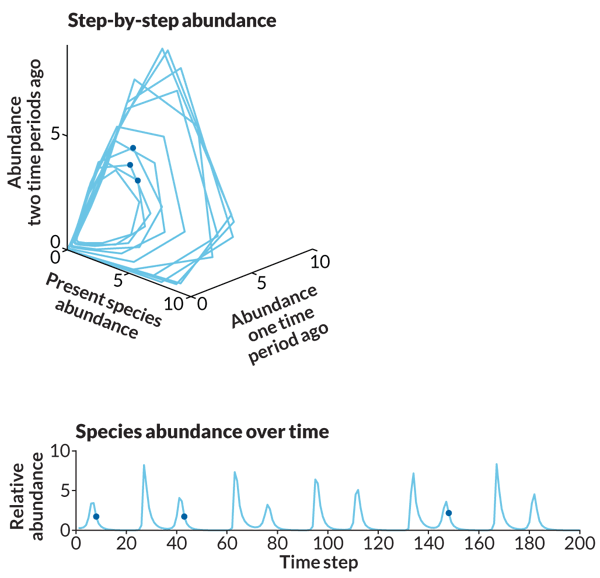

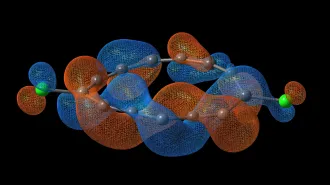

Sugihara has developed a radically different approach that he says can reveal all the pages. His method doesn’t require any assumptions about fish growth rates. Instead, it uses particularly rich troves of population and environmental data from the past to predict the near future. The technique allows researchers to make pictures of what they call the past “states” of a population, which are based on variables like the numbers of adult, juvenile and baby fish, as well as the environmental factors that conventional models leave out. Sugihara and his colleagues then look for times when a population was in a state similar to its present one — for example, when fish numbers and weather were comparable — and study how the population fluctuated then. The present population will change in similar ways, Sugihara says, at least for a few years.
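A nearest-analog forecast captures the gist of matching today's state to similar past states. This is a simplified sketch, not Sugihara's software: the function names, the rescaling of each variable, the equal weighting of the k closest years, and the toy numbers are all assumptions made for illustration.

```python
import math

def standardize(rows):
    """Rescale each variable to mean 0 and SD 1 so no single unit dominates distances."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    sds = [math.sqrt(sum((v - m) ** 2 for v in c) / len(c)) or 1.0
           for c, m in zip(cols, means)]
    return [tuple((v - m) / s for v, m, s in zip(row, means, sds)) for row in rows]

def analog_forecast(past_states, what_followed, current_state, k=3):
    """Average what happened after the k past years whose states most resemble today's.

    past_states[i]   -- state vector for year i, e.g. (adults, juveniles, temperature)
    what_followed[i] -- population observed the year after year i
    """
    scaled = standardize(past_states + [current_state])
    past, now = scaled[:-1], scaled[-1]
    # Rank past years by distance from the present state; keep the k closest.
    nearest = sorted(range(len(past)), key=lambda i: math.dist(past[i], now))[:k]
    return sum(what_followed[i] for i in nearest) / k, nearest

# Invented toy records (adults, juveniles, sea temperature in degrees C); not real data.
past_states = [(120, 300, 15.1), (95, 210, 14.2), (150, 420, 16.0),
               (80, 150, 13.8), (130, 350, 15.6)]
what_followed = [140, 90, 170, 70, 150]
forecast, analog_years = analog_forecast(past_states, what_followed, (125, 320, 15.3), k=2)
print(f"forecast {forecast:.0f} from analog years {analog_years}")
```

Here the two closest analogs are the first and last records, so the forecast is simply the average of what followed them. Real analyses use much longer records and more variables.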

Using such methods, Sugihara and colleagues weighed in on the sardine conundrum. They found that if they removed just one measurement from their model (sea surface temperature as recorded at the Scripps pier), it became less accurate at tracking observed Pacific sardine populations off the California coast over the previous 50 years. The researchers concluded that ocean temperature changes do cause fluctuations in sardine numbers, even though the relationship between the two is not readily obvious.
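The logic of that test (drop one variable and see whether forecasts get worse) can be illustrated on synthetic data. The sketch below invents a population whose growth follows a Ricker-style rule nudged each year by a random "temperature" anomaly; it is not the sardine analysis, and the model, parameters and function names are hypothetical. Forecasts average what followed the most similar past states, once with temperature in the state and once without.

```python
import math
import random

def pearson(a, b):
    """Pearson correlation between two equal-length lists."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)

def simulate(n=600, r=1.5, b=0.3, seed=1):
    """Hypothetical population: Ricker-style growth nudged by a random temperature anomaly."""
    rng = random.Random(seed)
    temp = [rng.gauss(0.0, 1.0) for _ in range(n)]
    pop = [1.0]
    for t in range(n - 1):
        pop.append(pop[t] * math.exp(r * (1 - pop[t]) + b * temp[t]))
    return pop, temp

def forecast_skill(states, targets, k=3):
    """Predict second-half targets from the k most similar first-half states."""
    cols = list(zip(*states))
    means = [sum(c) / len(c) for c in cols]
    sds = [math.sqrt(sum((v - m) ** 2 for v in c) / len(c)) for c, m in zip(cols, means)]
    z = [tuple((v - m) / s for v, m, s in zip(row, means, sds)) for row in states]
    half = len(z) // 2
    preds = []
    for t in range(half, len(z)):
        nearest = sorted(range(half), key=lambda i: math.dist(z[i], z[t]))[:k]
        preds.append(sum(targets[i] for i in nearest) / k)
    return pearson(preds, targets[half:])

pop, temp = simulate()
years = range(1, len(pop) - 1)
targets = [pop[t + 1] for t in years]                      # next year's population
with_temp = [(pop[t], pop[t - 1], temp[t]) for t in years]
without_temp = [(pop[t], pop[t - 1]) for t in years]
rho_with = forecast_skill(with_temp, targets)
rho_without = forecast_skill(without_temp, targets)
print(f"forecast skill with temperature: {rho_with:.2f}; without: {rho_without:.2f}")
```

Because temperature in this toy world directly shifts next year's numbers, leaving it out of the state should lower forecast skill noticeably.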

On the strength of this result, Sugihara’s team called on managers to reverse course and once again factor in ocean temperature when forecasting sardine population change. Other researchers have made similar recommendations for different reasons, and the council is now reviewing its rules.

Solving the sardine puzzle is an important coup. But Sugihara has his sights on a much larger prize: changing how the world’s fisheries are managed. He thinks his techniques should complement, if not replace, the mathematical models scientists typically use to estimate how many fish can be taken without causing their populations to decline. Methods currently in use, Sugihara thinks, are based on simplistic assumptions about nature and could make fished populations vulnerable to collapse.

Nature is simply too complex, he argues, its connections too subtle, for human-made simulations to reproduce. As conservationist John Muir wrote in his 1911 book My First Summer in the Sierra, “When we try to pick out anything by itself, we find it hitched to everything else in the universe.”

Putting chaos to work

To put this wisdom into action, Sugihara first has to convince the scientists who advise fishery managers that his techniques can work in real-world practice. While these scientists are intrigued by Sugihara’s work, they are also hesitant to adopt methods that can seem, in the words of Alec MacCall, a senior ecologist at the National Oceanic and Atmospheric Administration who has collaborated with Sugihara, “almost magical.”

For the soft-spoken but intensely driven Sugihara, there is no magic. It’s just a matter of abandoning a linear view of nature, he says. In a linear model, effects scale up or down with their causes. For instance, if next year’s sardine population depends solely on this year’s, one could expect a doubling of spawning adults to yield twice as many fish larvae, some fraction of which would eventually become adults. Many commonly used fishery models rely on such assumptions, which let scientists neatly arrive at the number of adults needed to sustain a given population into the future.
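The difference between a linear and a nonlinear rule can be made concrete with two stock-recruitment curves. The linear rule below is the proportional model just described; the Ricker curve is a standard nonlinear alternative from fisheries textbooks, used here only as an example, with arbitrary parameter values.

```python
import math

def linear_recruits(spawners, a=2.0):
    """Linear rule: recruits are a fixed multiple of spawners."""
    return a * spawners

def ricker_recruits(spawners, a=2.0, b=0.01):
    """Ricker curve: recruitment rises, peaks at spawners = 1/b, then falls with crowding."""
    return a * spawners * math.exp(-b * spawners)

for s in (50, 100, 200):
    lin_ratio = linear_recruits(2 * s) / linear_recruits(s)
    ric_ratio = ricker_recruits(2 * s) / ricker_recruits(s)
    print(f"spawners {s:>3} -> {2 * s:>3}: linear x{lin_ratio:.2f}, Ricker x{ric_ratio:.2f}")
```

Doubling spawners always doubles recruits under the linear rule. Under this Ricker curve, doubling from 50 yields only about 21 percent more recruits, and doubling from 100 or more actually yields fewer.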

In a nonlinear approach, however, small changes can have large effects. For example, a small rise in ocean temperature could send fish stocks soaring or plummeting. Or harvesting too many of the largest individuals could send the entire population into convulsions. In a 2006 Nature paper, Sugihara and colleagues discovered nonlinear effects in fish populations. The team analyzed data on fish living off the coast from San Diego to San Francisco, and found that populations of exploited fish species varied more over time than those of unexploited species living in the same waters. The reason, the researchers determined, is that fishermen tend to harvest the largest individuals, which makes the entire population unstable.

The upshot of nonlinearity is that seemingly disparate phenomena like ocean temperatures and fish populations can be connected even if they don’t appear to be. As a result, you can’t try to manage just one part of the environment as if it isn’t being prodded and perturbed by everything around it, Sugihara says. “It’s kind of wishful thinking that the world is a stable place,” he says.

This wishful thinking has deep roots, going back at least to Isaac Newton and the mechanistic universe that emerged from his laws of motion. But in the late 1880s, French mathematician Henri Poincaré dealt a major blow to such dreams of predictability when he announced that the solar system, the classic test case for Newtonian motion, is actually nonlinear. In other words, it is impossible to calculate the precise trajectories of the sun, planets and other bodies whizzing through the void, because they are continually pushing and pulling on each other.

In the 1960s the American mathematician Edward Lorenz came to a similar conclusion about wind and rain. To communicate the challenge of making long-range weather predictions, Lorenz later coined the term “butterfly effect,” which suggests that something as small as a butterfly flapping its wings can influence storms across the globe. Indeed, even with today’s satellites and computer simulations, seemingly insignificant differences in starting conditions can lead to vast divergences in the results weather models give when they’re run for more than a week or two out.
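Lorenz's point can be reproduced with his 1963 convection equations, using the standard textbook parameters σ = 10, ρ = 28 and β = 8/3. The sketch runs two simulations whose starting points differ by one part in 100 million and records how far apart they drift; the integrator (fourth-order Runge-Kutta), step size and starting point are choices made for illustration.

```python
import math

def lorenz(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of Lorenz's 1963 convection equations."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)

def rk4_step(state, dt):
    """Advance the state by one fourth-order Runge-Kutta step."""
    def shifted(k, h):
        return tuple(s + h * ki for s, ki in zip(state, k))
    k1 = lorenz(state)
    k2 = lorenz(shifted(k1, dt / 2))
    k3 = lorenz(shifted(k2, dt / 2))
    k4 = lorenz(shifted(k3, dt))
    return tuple(s + dt / 6 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))

def separation_history(perturbation=1e-8, dt=0.01, steps=4000, every=500):
    """Run two nearly identical simulations and record the distance between them."""
    a = (1.0, 1.0, 1.0)
    b = (1.0 + perturbation, 1.0, 1.0)
    history = []
    for step in range(steps + 1):
        if step % every == 0:
            history.append((step * dt, math.dist(a, b)))
        a, b = rk4_step(a, dt), rk4_step(b, dt)
    return history

for t, gap in separation_history():
    print(f"t = {t:4.0f}   separation = {gap:.1e}")
```

The gap starts around 1e-8 and grows roughly exponentially until it is as large as the attractor itself, at which point the two "forecasts" have nothing to do with each other.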

A decade later Robert May, an Australian physicist turned biologist at Princeton, applied nonlinearity to the study of life. At the time, May was studying a mathematical technique frequently used to predict how a population will change over time. Ecologists had long assumed that any population will grow toward a stable value known as its carrying capacity, where the population’s demand for resources matches the supply available, and then level off.

But May found that if he made the growth rate — the number of offspring an individual has in a year — higher, the final population did not stabilize at a predictable number. Instead, the end point began alternating between two wildly different values as the growth rate rose. Increase the rate a bit more, and the final population bounced between four values, then eight, then 16…. Eventually, any discernible order disappeared. May showed his results to University of Maryland mathematician James Yorke, who gave the phenomenon a memorable name: chaos. “Jim Yorke is fond of saying we weren’t the first people to discover chaos, but we were the last,” May says.
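The period-doubling May saw can be reproduced with the logistic map x(t+1) = r·x(t)·(1 − x(t)), the best-known example in his 1976 paper, where x is population as a fraction of its maximum and r plays the role of the growth rate. The r values, starting point, iteration counts and rounding tolerance below are illustrative choices; the thresholds in the comment are standard textbook values.

```python
def logistic_attractor(r, x0=0.2, transient=100_000, keep=64):
    """Iterate the logistic map past its transient and return the distinct long-run values."""
    x = x0
    for _ in range(transient):
        x = r * x * (1 - x)
    seen = set()
    for _ in range(keep):
        x = r * x * (1 - x)
        seen.add(round(x, 6))  # rounding merges values that differ only by float noise
    return sorted(seen)

# Textbook thresholds: one value below r = 3, two up to about 3.449,
# four up to about 3.544, eight up to about 3.564, chaos beyond about 3.57.
for r in (2.8, 3.2, 3.5, 3.55, 3.9):
    print(f"r = {r}: {len(logistic_attractor(r))} distinct long-run values")
```

Raising r from 2.8 to 3.55 takes the long-run count from one value through two and four to eight; at 3.9 nearly every sampled value is different.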

May published his findings in a short 1976 paper in Nature. The work has since been cited thousands of times, as scientists from far-flung disciplines have absorbed the disturbing lesson that even simple natural systems can evade prediction. But while scientists had little trouble finding chaos once they knew to look for it, they have had less success making it useful.

Making chaos useful is what drives Sugihara. Attracted by May’s intensely mathematical approach to ecology, Sugihara came to Princeton in the late 1970s and earned his Ph.D. in May’s lab in 1983. He eventually headed to Scripps, but he and May kept in touch, and in 1990 they coauthored a paper in Nature that finally showed how chaos could be applied. The crucial insight, for which May gives Sugihara all the credit, was that chaos, though it precludes long-term prediction, is not the same thing as randomness. Randomness — the white noise on old television sets, for example — is just that, informationless noise. Chaos, by contrast, contains information, and that information can be used to predict the near future of a nonlinear system. May calls this short-term predictability the “flip side” of chaos.
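The "flip side" can be demonstrated by forecasting from nearest neighbors in a reconstructed state space and checking how skill changes with the forecast horizon. The sketch below is a stripped-down version of the simplex-projection idea associated with the 1990 paper, assuming a two-dimensional lag embedding, the first half of each series as the library of past states, and exponential distance weights. The chaotic logistic-map series and the random series are synthetic stand-ins.

```python
import math
import random

def pearson(a, b):
    """Pearson correlation between two equal-length lists."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)

def simplex_skill(series, tp=1, e=2):
    """Correlation between tp-step-ahead forecasts and observations (simplified simplex)."""
    n, half = len(series), len(series) // 2
    # State at time t is the lagged vector (x_t, x_{t-1}, ..., x_{t-e+1}).
    states = {t: tuple(series[t - j] for j in range(e)) for t in range(e - 1, n)}
    library = range(e - 1, half - tp)  # library futures stay inside the first half
    preds, obs = [], []
    for t in range(half, n - tp):
        nearest = sorted((math.dist(states[t], states[s]), s) for s in library)[: e + 1]
        d_min = nearest[0][0]
        weights = [math.exp(-d / d_min) if d_min > 0 else float(d == 0) for d, _ in nearest]
        total = sum(weights)
        preds.append(sum(w * series[s + tp] for w, (_, s) in zip(weights, nearest)) / total)
        obs.append(series[t + tp])
    return pearson(preds, obs)

def logistic_series(n, r=3.9, x0=0.4, burn=100):
    """Chaotic logistic-map values after discarding a burn-in period."""
    x, out = x0, []
    for i in range(burn + n):
        x = r * x * (1 - x)
        if i >= burn:
            out.append(x)
    return out

chaos = logistic_series(600)
rng = random.Random(42)
noise = [rng.random() for _ in range(600)]
for tp in (1, 3, 10):
    print(f"{tp:>2} steps ahead: chaos rho = {simplex_skill(chaos, tp):.2f}, "
          f"noise rho = {simplex_skill(noise, tp):.2f}")
```

For the chaotic series, skill is high one step ahead and falls off as the horizon lengthens; for the random series it hovers near zero at every horizon. That contrast is what separates chaos from noise.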

Sugihara has built the rest of his career around applying the flip side of chaos to disparate fields — finance, fish, heart rhythms, atmospheric circulation and more recently gene activity and paleoclimate. Strangely, his ideas have probably found their warmest welcome far from the scientific world. Sugihara was recruited by Deutsche Bank, where he worked from 1997 to 2002 modeling short-term stock futures and, by all accounts, earning a salary unheard of among his scientist colleagues.

But after earning what May calls “more money than he’d ever need,” Sugihara returned to Scripps in 2002, eager to apply the nonlinear methods with which he had achieved success in finance to thorny problems in conservation. He and colleagues used a technique called nonlinear time series analysis to study whether environmental factors affecting fish in the North Pacific Ocean were chaotic or just random. They found that while physical variables like temperature fluctuated randomly, actual fish populations behaved chaotically. Based on this finding, Sugihara and colleagues published a 2005 Nature paper that called on fishery managers to adopt a more “precautionary” approach, which would make fish populations resilient to abrupt shifts in environmental factors like temperature.

Managing uncertainty

In the United States, fisheries are overseen by regional management councils that set catch limits for different species based on advice from scientists at NOAA’s National Marine Fisheries Service. The Magnuson-Stevens Act, which Congress enacted in 1976 and last reauthorized in 2006, requires these scientists to set limits no higher than a number called “maximum sustainable yield,” defined as the largest number of a given type of fish that fishermen can take without causing the population to decline. This number is typically based on linear models that use current population and knowledge about how fast fish grow and reproduce to estimate future years’ populations. These models have immense power: They determine how many fish will be caught, how much money fishermen can make and, to a significant extent, how marine life will fare on a planet full of fish-eating humans and domestic animals.
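To show what a model-based maximum sustainable yield looks like, here is one of the simplest textbook cases, the Schaefer surplus-production model, in which a stock of biomass B adds rB(1 − B/K) each year, with intrinsic growth rate r and carrying capacity K. Real stock assessments are far more detailed; the model and parameter values here are illustrative assumptions. Scanning biomass levels shows that surplus production peaks at half the carrying capacity, giving the familiar MSY = rK/4.

```python
def surplus_production(biomass, r=0.5, k=1000.0):
    """Schaefer surplus production: growth the stock adds in a year at this biomass."""
    return r * biomass * (1 - biomass / k)

def find_msy(r=0.5, k=1000.0, steps=10_000):
    """Scan biomass levels from 0 to K and return (biomass, yield) at peak surplus."""
    best_b = max((k * i / steps for i in range(steps + 1)),
                 key=lambda b: surplus_production(b, r, k))
    return best_b, surplus_production(best_b, r, k)

b_msy, msy = find_msy()
print(f"B_MSY = {b_msy:.0f}, MSY = {msy:.1f} (formula rK/4 = {0.5 * 1000 / 4:.1f})")
```

The numerical peak lands at B = K/2 = 500 with a yield of 125, matching the closed-form rK/4.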

Thanks to the concept of maximum sustainable yield, many scientists and conservationists consider U.S. fisheries management a relative success. Under the Magnuson-Stevens Act, catch limits are in place for nearly every fished species in federal waters, defined as between three and 200 nautical miles from shore. Managers must also create recovery plans for any stock found to be severely depleted. As a result, dramatic overfishing disasters like the collapse of Canada’s North Atlantic cod fishery in the early 1990s rarely happen in the United States.

But Sugihara believes the maximum sustainable yields that managers use are little more than guesswork. The models that spit them out rely on fish populations varying around a stable equilibrium, and that, says Sugihara, is a “fiction.” In the real world, a sudden change in temperature or a drop in food supply could send a population on an entirely new trajectory. Especially with climate change likely to stress marine life in unpredictable ways, Sugihara thinks fishing year after year to a theoretical (and scientifically questionable) maximum sustainable yield could easily tip a vulnerable population toward collapse.
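A classic textbook result shows why fishing year after year at a theoretical MSY is fragile. In the same illustrative Schaefer model, a fixed catch equal to rK/4 changes biomass each year by ΔB = −(r/K)(B − K/2)², which is never positive. A stock that starts a little above K/2 drifts back down toward it, but one that starts a little below (after a bad year, say) is driven to collapse, because the catch now exceeds what the shrinking stock can replace. This is a sketch under assumed parameters, not an analysis from Sugihara's work.

```python
def project(start, catch, r=0.5, k=1000.0, years=100):
    """Discrete Schaefer model with a fixed annual catch; returns the biomass path."""
    b, path = start, [start]
    for _ in range(years):
        b = b + r * b * (1 - b / k) - catch
        if b <= 0:            # stock exhausted
            path.append(0.0)
            break
        path.append(b)
    return path

msy = 0.5 * 1000.0 / 4  # rK/4 = 125 for these illustrative parameters
for label, start in (("10% above", 550.0), ("10% below", 450.0)):
    path = project(start, msy)
    status = "collapsed" if path[-1] == 0.0 else f"biomass {path[-1]:.0f}"
    print(f"start {label} B_MSY: {status} after {len(path) - 1} years")
```

The same quota that looks sustainable on paper is harmless for one starting point and ruinous for a neighboring one, which is the asymmetry that worries critics of fishing right up to MSY.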

Sugihara advocates a different approach that would help make fish populations resilient to environmental and human-made disruptions. Rather than treat fish growth and reproduction rates as static quantities, Sugihara says, the ideal management scheme would nimbly adapt to the predictions that nonlinear time series analysis makes. For instance, if scientists detected an environmental shift likely to imperil a certain fish population, they could advise managers to quickly ratchet down catch limits. “I think it would be more dynamic, making constant adjustments to try to keep things from crashing,” he says.
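One hypothetical way to turn yearly forecasts into "constant adjustments" is a control rule that sets each year's catch limit as a harvest rate times the forecast biomass, and ramps that rate down as the forecast weakens. The function name, reference level, floor and maximum rate below are invented for illustration and do not come from any council's rules.

```python
def adaptive_catch_limit(forecast_biomass, reference_biomass, max_rate=0.2, floor=0.2):
    """Hypothetical control rule: full harvest rate when the forecast is healthy,
    ramping linearly to zero as the forecast falls toward a floor.

    forecast_biomass  -- next year's forecast, e.g. from a nonlinear time series model
    reference_biomass -- a healthy reference level chosen by managers (assumed)
    max_rate, floor   -- illustrative policy settings, not values from any council
    """
    ratio = forecast_biomass / reference_biomass
    if ratio >= 1.0:
        rate = max_rate
    elif ratio <= floor:
        rate = 0.0  # forecast too weak: close the fishery for the year
    else:
        rate = max_rate * (ratio - floor) / (1.0 - floor)
    return rate * forecast_biomass

for forecast in (1200, 1000, 700, 400, 150):
    print(f"forecast {forecast:>4}: catch limit {adaptive_catch_limit(forecast, 1000):.1f}")
```

The design choice is that the limit responds to each new forecast rather than to a fixed equilibrium, so a predicted downturn cuts the catch before the population actually falls.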

Providing the kind of predictive power Sugihara dreams of means overcoming several challenges. For one thing, fish, unlike stock prices, are notoriously hard to quantify. The creatures live mostly invisible lives underwater and cover vast areas. Catch numbers have traditionally been a means of estimating fish populations, but those data can reflect the number of fishermen on the water and the fishing technology used as much as actual fish numbers. Over the past few decades, scientists have made major advances in gathering data on marine life; Sugihara and his colleagues have built many of their results on a dataset produced by the California Cooperative Oceanic Fisheries Investigations, which has taken measurements on fish and environmental variables almost yearly since 1950. But nonlinear time series analysis requires data stretching back at least 30 or 40 years, which don’t exist for many species in many places, especially in the developing world.

Data can always be collected, of course, but a more fundamental challenge is the long timescales on which fish populations change. While banks can capitalize on a prediction of a stock’s value minutes or even seconds in advance, fishery managers need projections for a species’ population years out. Nonlinear time series analysis does a decent job of forecasting next year’s population, and perhaps the one after that, but beyond two years, says NOAA’s MacCall, “it’s worthless.” This is an especially serious limitation for managing long-lived species like rockfish, whose populations change over decades, not years.

A long road to implementation

Sugihara is used to his ideas encountering resistance. In the mid-2000s, he spent a lot of time promoting a derivatives market in fishing bycatch, which would have borrowed a quantitative tool from the financial industry to reduce the catch of nontarget species. The system would have capped the amount of bycatch fishermen were permitted; they could then sell credits in a regulated marketplace, much like in cap-and-trade systems commonly used to limit pollution. Managers implemented what Sugihara calls a “watered-down” version of his plan for salmon caught by Alaskan pollock fishermen, but such tradeable credits haven’t caught on broadly. For one thing, the idea struck people as an inappropriate way to manage what are, after all, living beings, says MacCall. “A lot of people felt that was distasteful.” When derivatives based on home mortgages contributed to the 2008 stock market crash, any appetite anyone might have had for such complex financial schemes vanished.

This time around, Sugihara is packaging his ideas more conventionally. He has led workshops where fisheries scientists can try using his methods with their own data, to see if environmental factors like temperature can help predict how populations of the fish species they manage vary. At one recent workshop, initially skeptical scientists studying Atlantic menhaden discovered that Sugihara’s method could account for half the population’s past variability over a certain period. The scientists had thought ups and downs in their data were just random. Because menhaden managers currently lack any workable model, says Ethan Deyle, a graduate researcher in Sugihara’s lab, this species may provide nonlinear methods’ first real-world test.

Sugihara and his students are also building software packages to help fishery professionals become more comfortable with nonlinear time series. Making these programs user-friendly so they provide just the right amount of information is crucial, says Michael Fogarty, a NOAA fishery scientist who has collaborated with Sugihara and is enthusiastic about his work.

Despite these efforts, getting nonlinear time series analysis incorporated into actual practice has proven a slow and, for Sugihara, often frustrating process. A collaboration Sugihara has launched with Fisheries and Oceans Canada is just getting off the ground. Meanwhile, fishery scientists in the United States say they will need more time to convince managers that the technique is solid enough to base catch limits on. Not even Sugihara thinks nonlinear time series analysis will replace conventional linear models any time soon. “It’s not like saying this guy has a better pickax, so forget this shovel,” he says. “That’s not something that’s realistic.”

Andrew Rosenberg, a former regional administrator for the National Marine Fisheries Service, thinks Sugihara’s techniques could help correct errors that routinely creep into population forecasts from linear models. “His methods aren’t a replacement for what’s being done in fisheries, but they’re a really important check,” says Rosenberg, who now heads the Center for Science and Democracy at the Union of Concerned Scientists.

Managers’ conservative attitudes toward unproven methods are understandable, given the high stakes for people who fish for a living, says Fogarty. “People don’t want their livelihoods used as an experiment.” But like many fisheries scientists, he and MacCall wish managers were less reluctant to incorporate new ideas.

“I think what [Sugihara has] done has opened up a different way of looking at things,” MacCall says. “A way the traditional viewpoint never, ever would have considered.”