Videocalling needed more than a pandemic to finally take off. Will it last?

The COVID-19 pandemic made spending hours on video calls second nature to wide swaths of the public – at work and at school.


Eileen Donovan, an 89-year-old mother of seven living in a Boston suburb, loved watching her daughter teach class on Zoom during the coronavirus pandemic. She never imagined Zoom would be how her family eventually attended her funeral.

Donovan died of Parkinson’s disease on June 30, 2020, leaving behind her children, 10 grandchildren and six great-grandchildren. She always wanted a raucous Irish wake. But only five of her children plus some local family could be there in person, and no extended family or friends, due to coronavirus concerns. This was not the way they had expected to mourn.

For online attendees, the ceremony didn’t end with hugs or handshakes. It ended with a click on a red “leave meeting” button, a label that suits business meetings but little else.

It’s the same button that Eileen Donovan-Kranz, Donovan’s daughter, clicks when she finishes an English lecture for her class of undergraduate students at Boston College. And it’s the same way she and I ended our conversation on an unseasonably warm November day: Donovan-Kranz sitting in front of a window in her dining room in Ayer, Mass., and me in my bedroom in Manhattan.

“I’m not going to hold the phone during my mother’s burial,” she remembers thinking. Just a little over a year ago, it would have seemed absurd to have to ask someone to hold up a smartphone so that others could “attend” such a personal event. Donovan-Kranz asked her daughter’s fiancé to do it.

The COVID-19 pandemic has profoundly changed the way people interact with each other and with technology. Screens used to be for reminiscing over cherished memories, like watching VHS tapes or, more recently, YouTube videos of weddings and birthdays that had already happened. But now, we’re not just watching memories. We’re creating them on screens in real time.

As social distancing measures forced everyone to stay indoors and interact online, multibillion-dollar industries have had to rapidly adjust to create experiences in a 2-D world. And although this concept of living our lives online — from mundane work calls to memorable weddings or concerts — seems novel, both scientists and science fiction writers have seen this reality coming for decades.

In David Foster Wallace’s 1996 novel Infinite Jest, videotelephony enjoys a brief but frenzied popularity in a future America. Entire industries emerge to address people’s self-consciousness on camera. But eventually, the industry collapses when people realize they prefer the familiar voice-only telephone.

Despite multiple efforts by inventors and entrepreneurs to convince us that videoconferencing had arrived, that reality didn’t play out. Time after time, people rejected it for the humble telephone or for other innovations like texting. But in 2020, live video meetings finally found their moment.

It took more than just a pandemic to get us here, some researchers say. Technological advances over the decades together with the ubiquity of the technology got everyone on board. But it wasn’t easy.

Initial attempts

On June 30, 1970 — exactly half a century before Donovan’s death — AT&T launched what it called the nation’s first commercial videoconferencing service in Pittsburgh with a call from Peter Flaherty, the city’s mayor, to John Harper, chairman and CEO of Alcoa Corporation, one of the world’s largest producers of aluminum. Alcoa had already been using the Alcoa Picturephone Remote Information System for retrieving information from a database using buttons on a telephone. The data would be presented on the videophone display. This was before desktop computers were ubiquitous.

This was not AT&T’s first videophone, however. In 1927, U.S. Secretary of Commerce Herbert Hoover had demonstrated a prototype developed by the company. But by 1972, AT&T had a mere 32 units in service in Pittsburgh. The only other city offering commercial service, Chicago, hit its peak sales in 1973, with 453 units. AT&T discontinued the service in the late 1970s, concluding that the videophone was “a concept looking for a market.”

About a decade after AT&T’s first attempt at commercialization, a band called the Buggles released the single “Video Killed the Radio Star,” whose music video became the first to air on MTV, in 1981. The song reminded people of the technological change of the 1950s and ’60s, when U.S. households turned away from radio as televisions became affordable to the masses.

The way television achieved market dominance kept videophone developers bullish about their technology’s future. In 1993, optimistic AT&T researchers predicted “the 1990s will be the video communication decade.” Video would change from something we passively consumed to something we interacted with in real time. That was the hope.

When AT&T launched its VideoPhone 2500 in 1992, prices started at a hefty $1,500 (about $2,800 in today’s dollars) — later dropping to $1,000. The phone had compressed color and a slow frame rate of 10 frames per second (Zoom calls today are 30 frames per second), so images were choppy.

Though the company tried to enchant potential customers with visions of the future, people weren’t buying it. Fewer than 20,000 units sold in the five months after the launch. Rejection again.

Building capacity

Last June, to commemorate the 50th anniversary of AT&T’s first videophone launch, William Peduto, Pittsburgh’s mayor, and Michael G. Morris, Alcoa’s chairman at the time, spoke over videophone, just as their predecessors had done.

Several scholars, including Andrew Meade McGee, a historian of technology and society at Carnegie Mellon University in Pittsburgh, joined for an online panel to discuss the rocky history of the videophone and its 2020 success. McGee told me a few months later that two things are crucial for a product’s actual adoption: “capacity and circumstance.” Capacity is all about the technology that makes a product easy to use and affordable. For videophones, it’s taken a while to get there.

When the Picturephone, which was launched by AT&T and Bell Telephone Laboratories, premiered at the 1964 World’s Fair in New York City, a three-minute call cost $16 to $27 (that’s about $135 to $230 in 2021). It was available only in booths in New York City, Chicago and Washington, D.C. (SN: 8/1/64, p. 73). Using the product required planning, effort and money — for low reward. The connection required multiple phone lines and the picture appeared on a black-and-white screen about the size of today’s iPhone screens.

These challenges made the Picturephone a tough sell. In a 2004 paper in Technological Forecasting and Social Change, marketing researchers Steve Schnaars and Cliff Wymbs of Baruch College at the City University of New York examined why videophones had failed to take off in earlier decades. Along with capacity and circumstance, they argued, critical mass is key.

For a technology to become popular, the researchers wrote, people need both the money and the motivation to adopt it. And potential users need to know that others also have the device; that’s the critical mass. But if everyone waits for others to buy first, no one ends up buying the new product. Social networking platforms and dating apps face the same hurdle when they launch, which is why they create incentive programs to hook those all-important initial users.

Internet access

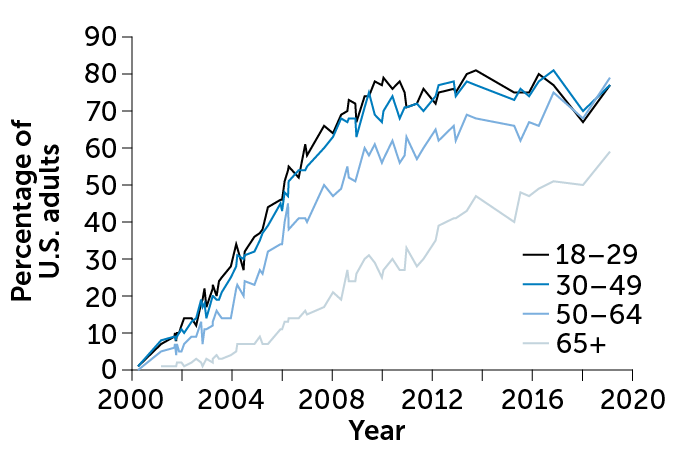

Even in the early 2000s, when Skype made a splash with Voice over Internet Protocol, or VoIP, calls that ran over the internet and left landlines free, people weren’t as connected to the internet as they are today. In 2000, only 3 percent of U.S. adults had high-speed internet, and 34 percent had a dial-up connection, according to the Pew Research Center.

By 2019, the story had changed: Seventy-three percent of all U.S. adults had high-speed internet at home, including 63 percent of adults in rural areas. Globally, the number of internet users also grew, from about 397 million in 2000 to about 2 billion in 2010 and 3.9 billion in 2019.

But even after capacity was established, we weren’t glued to our videophones as we are today, or as inventors had predicted years ago. Although Skype claimed 300 million users in 2019, people typically used it only occasionally: for international calls or for conversations planned in advance.

One long-time barrier that the Baruch College researchers cite from an informal survey is the aversion to always being “on.” Some people would have paid extra to not be on camera in their home, the same way people would pay extra to have their phone numbers left out of telephone books.

“Once people experienced [the 1970s] videophone, there was this realization that maybe you don’t always want to be on a physical call with someone else,” McGee says. Videocalling developers had predicted these challenges early on. In 1969, Julius Molnar, vice president at Bell Telephone Labs, wrote that people would be “much concerned with how they will appear on the screen of the called party.”

A scene from the 1960s cartoon The Jetsons illustrates this concern: George Jetson answers a videophone call. When he tells his wife Jane that her friend Gloria is on the phone, Jane responds, “Gloria! Oh dear, I can’t let her see me looking like this.” Jane grabs her “morning mask” — for the perfect hair and face — before taking the call.

That aversion to face time is one of the factors that kept people away from videocalling.

It took the pandemic, a change in circumstance, to force our hand. “What’s remarkable,” McGee says, “is the way in which large sectors of U.S. society have all of a sudden been thrust into being able to use videocalls on a daily basis.”

Circumstance shift

Starting in March 2020, mandatory stay-at-home orders around the world forced us to carry on an abridged form of our pre-pandemic lives, but from a distance. And one company beat the competition and rose to the top within a matter of months.

Soon after lockdown, Zoom became a verb. It was the go-to choice for all types of events. The perfect storm of capacity and circumstance led to the critical mass needed to create the Zoom boom.

Before Zoom, a handful of companies had been trying to fill the space that AT&T’s videophone could not. Skype became the sixth most downloaded mobile app of the decade from 2010 to 2019. FaceTime, WhatsApp, Instagram, Facebook Messenger and Google’s videochatting applications were and still are among the most popular platforms for videocalls.

Then 2020 happened.

Zoom beat its well-established competitors to quickly become a household name globally. It gained critical mass over other platforms by being easy to use.

“The fact that it’s been modeled around this virtual room that you come into and out of really simplifies the connection process,” says Carman Neustaedter of the School of Communication, Art and Technology at Simon Fraser University in Burnaby, Canada, where his team has researched being present on videocalls for work, home and health.

Zoom mirrors what happens in real life, where we walk into a room and everyone is just there. Casual users don’t need an account, and they don’t need to connect ahead of time with the people they want to talk to.

Beyond design, there were likely some market factors at play as well. Zoom connected early with universities, claiming by 2016 to be at 88 percent of “the top U.S. universities.” And just as K–12 schools worldwide started closing last March, Zoom offered free unlimited meeting minutes.

In December 2019, Zoom statistics put its maximum number of daily meeting participants (both paid and free) at about 10 million. In March 2020, that number had risen to 200 million, and the following month it was up to 300 million. The way Zoom counts those users is a point of contention.

But these numbers still provide some insight: If the product weren’t easy and helpful, we wouldn’t have kept using it. That’s not to say that Zoom is the perfect platform, Neustaedter says. It has some obvious shortcomings.

“It’s almost too rigid,” he says.

It doesn’t allow for natural conversation; participants have to take turns talking, toggling the mute button to let others take a turn. Even with the ability to send private and direct messages to anyone in the room, the natural way we form groups and make small talk in real life is lost with Zoom.

It’s also not the best for parties — it’s awkward to attend a birthday party online when only one out of 30 friends can talk at a time. That’s why some people have been enticed to switch to other videocalling platforms to host larger online events, like graduations.

For example, Remo, founded in 2018, uses visual virtual rooms. Everyone gets an avatar and can choose a table after seeing who else is there, so people can talk in smaller groups. In Zoom breakout sessions, you’re assigned a room and can’t move to another one on your own. A platform like Remo instead lets you see all the rooms, pick one, leave it and join another, all without the help of a host.

The rigidity also contributes to Zoom fatigue, the feeling of burnout that comes from overusing virtual platforms to communicate. Videocalling doesn’t let us make direct eye contact or easily pick up nonverbal cues from body language, things we do naturally during in-person conversations.

The psychological rewards of videocalling — the chance to be social — don’t always outweigh the costs.

Jeremy Bailenson, director of the Virtual Human Interaction Lab at Stanford University, laid out four features of videocalls that lead to Zoom fatigue in a paper published Feb. 23 in Technology, Mind and Behavior. Along with cognitive load and reduced mobility, he blames long stretches of close-up eye gazing and the “all-day mirror.” When you constantly see yourself on camera while interacting with others, self-consciousness and exhaustion set in.

Bailenson has since changed his relationship with Zoom: He now hides the box that lets him view himself, and he shrinks the size of the Zoom screen to make gazing faces less imposing. Bailenson expects minor changes to the platform will help reduce the psychological heaviness we feel.

Other challenges with Zoom have revolved around security. In April 2020, the term “Zoombombing” arose as uninvited people hijacked calls on the platform. Companies that could afford to switch quickly moved away from Zoom and paid for services elsewhere. For everyone who stayed, Zoom added close to 100 new privacy, safety and security features by July 2020, including meeting passcodes; end-to-end encryption for all users followed later that year.

Anybody’s guess

In Metropolis, the 1927 sci-fi silent film, a master of an industrial city in the dystopian future uses four separate dials on a videophone to put a call through. Thankfully, placing a videocall is much easier than it was predicted to be. But how much will we use this far-from-perfect technology once the pandemic is over?

In the book Productivity and the Pandemic, released in January, behavioral economist Stuart Mills discusses why consumers might keep using videocalling. This pandemic may establish habits and preferences that will not disappear once the crisis is over, Mills, of the London School of Economics, and coauthors write. When people are forced to experiment with new behaviors, as we did with videocalling during this pandemic, the result can be permanent behavioral change. Now that we know how it works, collaboration through videocalling may remain popular even after shutdowns lift.

Events that require real-life interactions, such as funerals and some conferences, may not change much from what we were used to pre-pandemic.

For other industries, videocalling may change certain processes. For example, the Reverend Annie Lawrence of New York City predicts permanent changes for parts of the wedding industry. People like the ease of getting a marriage license online, and she has been in surprisingly high demand to officiate video weddings since the pandemic started. Before, couples had to book an officiant months in advance. “Now, I’ve been getting calls on Friday to ask if I can officiate a wedding on Saturday,” she says.

Other sectors of society may realize that videocalling isn’t for them and move only a few processes online. Jamie Dimon, CEO of JPMorgan Chase, for example, said in a March 1 interview with Bloomberg Markets and Finance that he expects a large portion of his staff to return permanently to the office when that becomes possible again. Culture is hard to build on Zoom, relationships are hard to strengthen and spontaneous collaboration is difficult, he said. And there’s research that backs this up.

But none of these changes or reversions to our previous normal is a sure bet. We may find, just as in Wallace’s satirical storyline, that videocalls are simply too stressful, and the world will revert to phone calls and face-to-face time. We may also find that even as the technology improves, the lifting of shutdowns and the return to in-person life mean fewer people are available for videocalls.

It’s hard to say which scenario is the most likely to play out in the long run. We’ve been terribly wrong about these things before.