Moral dilemma could put brakes on driverless cars

In emergencies, who should be saved: Passengers or pedestrians?

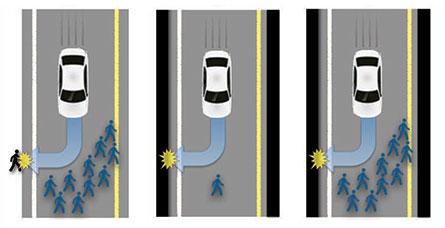

CAR CONFLICT Driverless cars will need to be programmed to handle emergency situations. New surveys find that people have conflicting opinions on whether automated vehicles should protect pedestrians over car passengers.

Jason Doiy/iStockphoto

Driverless cars are revved up to make getting from one place to another safer and less stressful. But clashing views over how such vehicles should be programmed to deal with emergencies may stall the transportation transformation, a new study finds.

People generally approve of the idea of automated vehicles designed to swerve into walls or otherwise sacrifice their passengers to save a greater number of pedestrians, say psychologist Jean-François Bonnefon of the Toulouse School of Economics in France and his colleagues. But here’s the hitch: Those same people want to ride in cars that protect passengers at all costs, even if pedestrians end up dying, the researchers report in the June 24 Science.

“Autonomous cars can revolutionize transportation,” says cognitive scientist and study coauthor Iyad Rahwan of the University of California, Irvine and MIT. “But they pose a social and moral dilemma that may delay adoption of this technology.”

Such conflict puts makers of computerized cars in a tough spot, Bonnefon’s group warns. Given a choice between driverless cars programmed for the greater good or for self-protection, consumers will overwhelmingly choose the latter. Regulations to enforce the design of passenger-sacrificing cars would backfire, the scientists suspect, driving away potential buyers. If so, plans for easing traffic congestion, reducing pollution and eliminating many traffic accidents with driverless cars would be dashed.

Further complicating matters, the investigators say, automated vehicles will need to respond to emergency situations in which an action’s consequences can’t be known for sure. Is it acceptable, for instance, to program a car to avoid a motorcycle by swerving into a wall, since the car’s passenger is more likely to survive a crash than the motorcyclist?

“Before we can put our values into machines, we have to figure out how to make our values clear and consistent,” writes Harvard University philosopher and cognitive scientist Joshua Greene in the same issue of Science.

But moral dilemmas have long dogged human civilizations and are sometimes unavoidable, says psychologist Kurt Gray of the University of North Carolina in Chapel Hill. People may endorse conflicting values depending on the situation — say, saving others when taking an impersonal perspective and saving oneself when one’s life is on the line.

Workable compromises can be reached, Gray says. If all driverless cars are programmed to protect passengers in emergencies, automobile accidents will still decline, he predicts. Despite being a danger to pedestrians on rare occasions, those vehicles “won’t speed, won’t drive drunk and won’t text while driving, which would be a win for society.”

Bonnefon’s team examined attitudes toward driverless vehicles in six online surveys conducted between June and November 2015. A total of 1,928 U.S. participants completed surveys.

Participants generally disapproved of automated vehicles sacrificing a passenger to save one pedestrian, but approval rose sharply with the number of pedestrians’ lives that could be saved. For instance, about three-quarters of volunteers in one survey said it was more moral for an automated car to sacrifice one passenger rather than kill 10 pedestrians. That trend held even when volunteers imagined they were in a driverless car with family members.

Bonnefon doubts those participants were simply trying to impress researchers with “noble” answers. But opinions changed when participants were asked about their views of driverless cars in actual practice. Respondents to another survey similarly rated pedestrian-protecting automated cars as more moral, but most of those same people readily admitted that they wanted passenger-protecting cars for themselves. Other participants considered driverless cars that swerved to avoid pedestrians as good for others to drive but had little intention of buying one themselves.

Volunteers expressed weak support for a law forcing either human drivers or automated cars to swerve to avoid pedestrians. Even in a hypothetical case where a passenger-sacrificing automated car saved the lives of 10 pedestrians, participants rated their willingness to have such sacrifices legally enforced at only 40 on a scale of 0 to 100. A final survey found that participants were much less likely to consider buying driverless cars subject to pedestrian-protecting regulations after being presented with situations in which they were riding alone, with an unspecified family member or with their child.