Artificial intelligence is mastering a wider variety of jobs than ever before

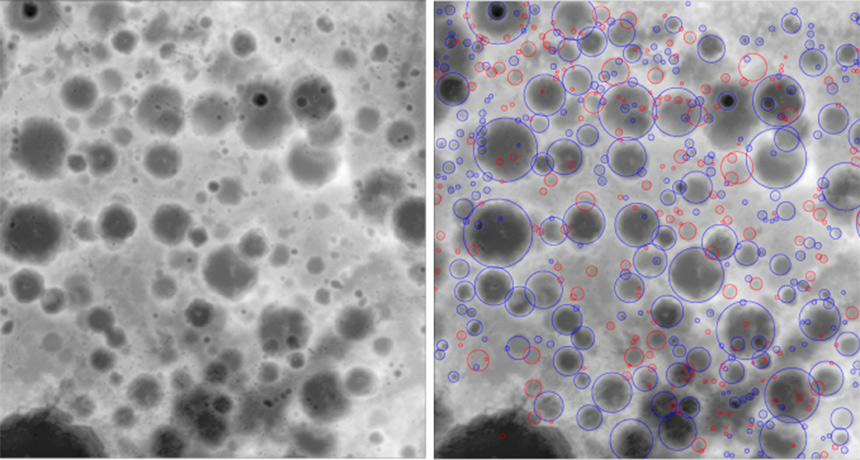

COUNTING CRATERS An AI that inspected images of lunar terrain (left) found previously discovered craters (right, blue circles), as well as potential new ones (red circles). In 2018, AI bested humans at following fauna, diagnosing disease, mapping the moon and more.

A. Silburt et al/arXiv.org 2018

In 2018, artificial intelligence took on new tasks, with these smarty-pants algorithms acing everything from disease diagnosis to crater counting.

Coming to a clinic near you

In April, the U.S. Food and Drug Administration permitted marketing of the first artificial intelligence that diagnoses health problems at primary care clinics without specialist supervision (SN: 3/31/18, p. 15). The program, which inspects eye images for signs of diabetes-related vision loss, could be a boon for people in remote or low-resource areas where ophthalmologists are scarce. Other eye-inspecting AI programs are learning to recognize everything from age-related vision loss to heart problems.

Moon mapping

One artificial intelligence is a celestial cartographer after Galileo’s own heart. The algorithm studied a third of the moon’s surface to learn what craters look like (SN Online: 3/15/18). When playing crater “I Spy” with a different third of the lunar landscape, the AI found 92 percent of previously discovered craters and spotted about 6,000 pockmarks that humans had missed. If focused on rocky planets and icy moons, this program could give new insight into the solar system’s history.

Ear to the ground

Artificial intelligence that predicts where earthquake aftershocks will hit could help people in high-risk areas better prepare for these dangerous seismic shake-ups. A program that studied characteristics of over 130,000 earthquakes and their aftershocks learned to predict aftershock locations much more accurately than traditional techniques (SN Online: 8/29/18).

Seeing is not believing

Of course, smarter artificial intelligence isn’t always good news. One AI that raised eyebrows in 2018 generates realistic fake video footage by making the subject of one video mirror the motions and expressions of someone else in a different clip (SN: 9/15/18, p. 12). In the wrong hands, this AI could be a powerful tool for spreading misinformation (SN: 8/4/18, p. 22).

Megafauna paparazzi

Automated camera traps, which snap photos of animals in their natural habitats, can help researchers and conservationists track animal behavior. But these wildlife-watching systems take more photos than any human has time to review. Enter a master naturalist artificial intelligence that learned to identify wildlife by studying 1.4 million hand-labeled images collected by the Snapshot Serengeti citizen science project. This algorithm, described in June in the Proceedings of the National Academy of Sciences, labels the number, species and activity of animals in each new picture.

Savvy navigator

Designing artificial intelligence to mimic the activity in specific regions of the brain could help scientists better understand how our minds work. An AI programmed with virtual versions of specialized brain cells called grid cells found shortcuts through a virtual maze better than an AI without the mapping cells (SN: 6/9/18, p. 14). The grid cell–equipped AI’s nimble navigation suggests that grid cells in mammal brains do more than give animals an internal coordinate system. The nerve cells may also help us map the shortest route to our destination.

Competing companies join forces

A new artificial intelligence lets competing drug companies share information without revealing secrets (SN Online: 10/18/18). This secure setup using a novel cryptography system may encourage drug companies to pool their resources, creating larger libraries of training data to beget smarter AI. Programmers have used the system to train an AI that predicts which drugs will interact with which proteins in the human body, a step toward developing new medical treatments. AI could also use this system to analyze confidential hospital health records to devise patient treatment plans and make prognoses.

Say what?

Humans are naturally good at ignoring background babble to focus on what a single person is saying. Computers, not so much. But now, an artificial intelligence analyzes both audio and visual cues, like lip movements, to pick out what individual speakers are saying in noisy videos (SN: 7/7/18, p. 16). Such keen-eared AI could write more accurate captions and power virtual assistants that better understand voice commands in loud settings.