Is redoing scientific research the best way to find truth?

During replication attempts, too many studies fail to pass muster

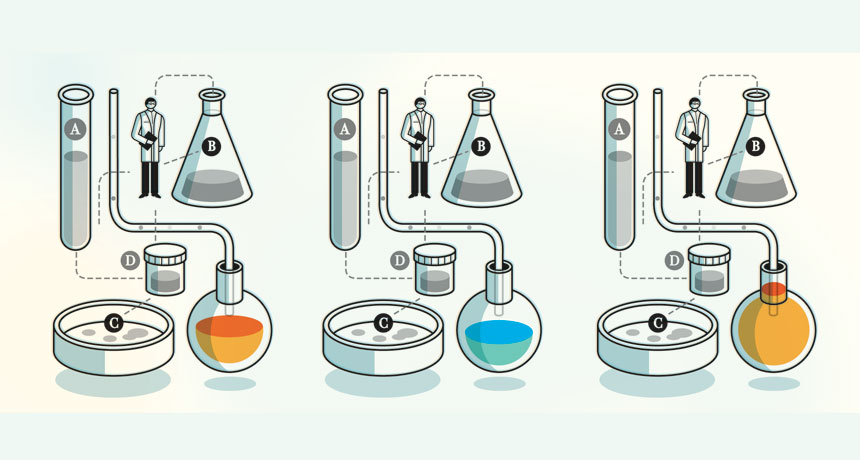

REPEAT PERFORMANCE: By some accounts, science is facing a crisis of confidence because the results of many studies aren’t confirmed when researchers attempt to replicate the experiments.

Harry Campbell

R. Allan Mufson remembers the alarming letters from physicians. They were testing a drug intended to help cancer patients by boosting levels of oxygen-carrying hemoglobin in their blood.